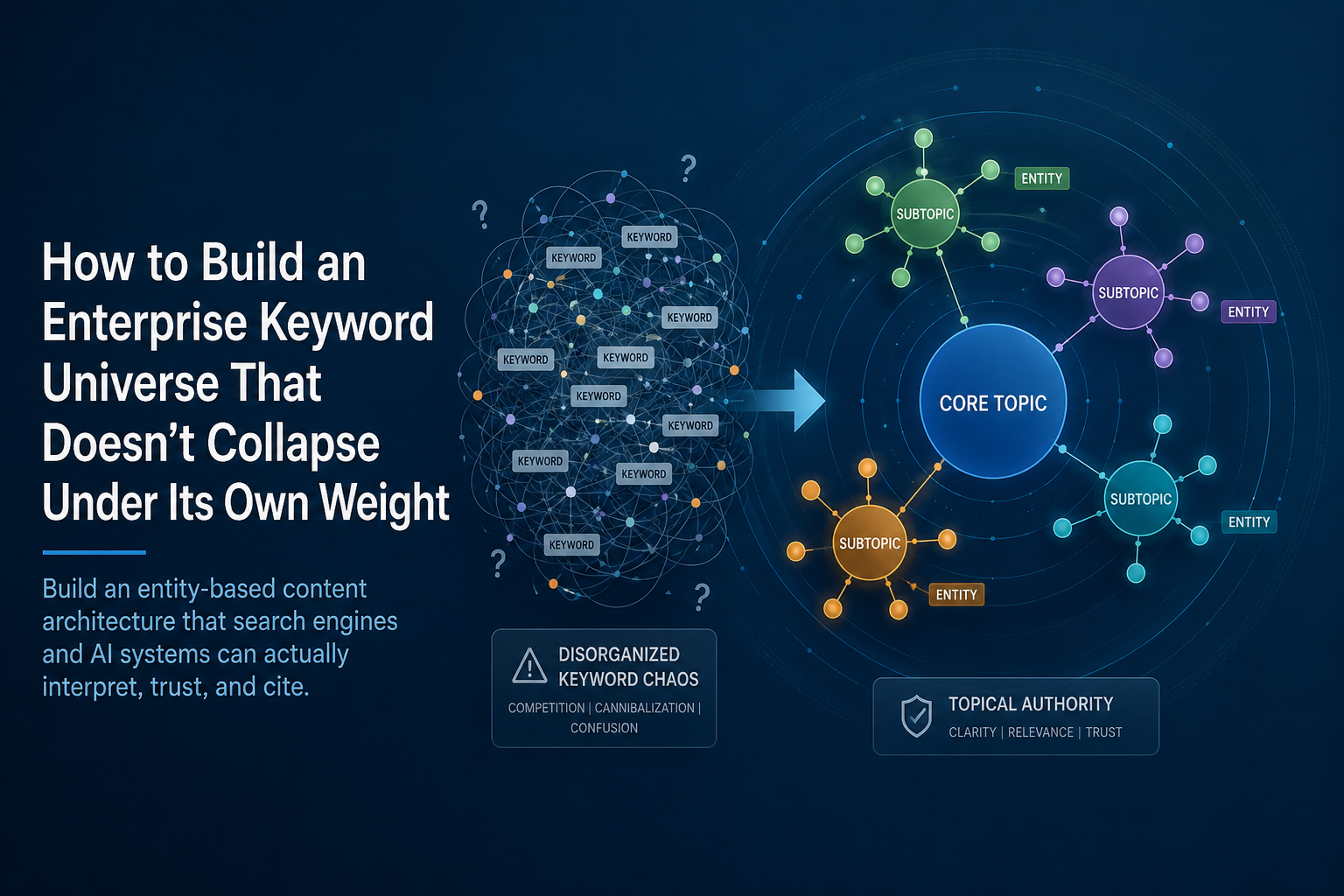

How to Build an Enterprise Keyword Universe That Doesn't Collapse Under Its Own Weight

There is a particular kind of enterprise SEO failure that does not announce itself loudly. Rankings do not fall off a cliff. Traffic does not crater overnight. Instead, the site just... stops growing. New content publishes and barely moves. Existing pages oscillate in positions they never quite break out of. The keyword tracking spreadsheet grows longer every quarter while organic pipeline contribution stays flat.

The cause is almost always the same: the organization built a keyword universe that is wider than it is deep, inconsistently structured, and competing with itself across dozens or hundreds of overlapping pages. Nobody made a bad decision to create this problem. It accumulated. A new product line launched and got its own set of pages. A content team added blog posts targeting adjacent terms. A different team built a resource hub covering the same topics from a different angle. A site migration moved URLs without rationalizing the architecture underneath them. Three years later, the site has eight hundred pages targeting two hundred topics with no coherent structure connecting any of them.

This is keyword sprawl. And it is the defining structural problem of enterprise content at scale.

This post is the framework for fixing it — not by adding more content, but by building the kind of entity-based content architecture that search engines and AI systems can actually interpret, trust, and cite. For organizations managing hundreds of product or service lines, that architectural work is not optional. It is the prerequisite everything else depends on.

Why Keyword Sprawl Happens — And Why It Keeps Getting Worse

Understanding how keyword sprawl develops is the first step toward preventing the next cycle of it. It is not the result of one bad decision. It is the predictable output of an organizational structure that treats content production as a volume metric rather than an architecture metric.

The typical enterprise content operation has multiple teams creating pages: a product marketing team producing service and feature pages, an editorial team producing blog content, a demand generation team producing landing pages for campaigns, a regional team producing location and market-specific pages. Each team has its own calendar, its own keyword research process, and its own definition of what topic it owns. None of those definitions are coordinated across teams. None of them are validated against what the other teams are building.

By the time anyone runs a comprehensive keyword cannibalization audit, the site has multiple pages targeting the same search intent, forcing search engines to split relevance signals (backlinks, click-through rates, engagement metrics) across those pages rather than concentrating them on one strong URL. The outcome is fragmented rankings, reduced visibility, and a digital footprint that is wide but perilously shallow. (Source: Link Juice Club)

The AI search dimension compounds the problem in a new and specifically damaging way. AI search engines like ChatGPT and Perplexity cite sources based on clear topical authority. When multiple pages compete for the same topic, LLMs struggle to determine which page represents your definitive perspective. This reduces your chances of being cited in AI-generated answers. (Source: Search Engine Land)

The systems deciding whether to cite your brand in a generated answer are looking for a single, clearly authoritative source on each topic. A site with seven pages addressing the same query from slightly different angles looks like a confused entity rather than a definitive one. It will consistently lose citation opportunities to competitors who have done the architectural work to establish unambiguous authority on each topic they claim.

The Keyword Universe vs. The Keyword List

The terminology distinction matters and is worth establishing precisely.

A keyword list is what most enterprise SEO programs produce. It is a collection of target terms, usually organized by volume and difficulty, assigned to pages or added to a content calendar. It grows over time as new opportunities are identified. It does not have a coherent internal structure. It does not map relationships between terms. It does not enforce distinctions between terms that belong in the same page and terms that deserve their own. It is a flat inventory, not an architecture.

A keyword universe is a structured map of the topics a brand claims authority over — organized hierarchically, with clear relationships between primary topics, supporting subtopics, and the specific queries that each level of content addresses. It has boundaries. It makes explicit decisions about what the brand will not try to rank for. It assigns ownership — each topic belongs to one page, and that assignment is enforced as a governance rule, not a suggestion.

Enterprise SEO in 2026 is no longer about managing millions of individual pages. It is about building entity-based ecosystems where a domain's authority is concentrated, coherent, and legible to both search engines and AI systems. (Source: Creative Digital)

Building a keyword universe requires answering four questions that most enterprise keyword programs never ask. What are the core entities this brand represents — the specific products, services, problems, and audiences that define its domain of authority? How do those entities relate to each other hierarchically? What is the single most authoritative page for each entity? And what content exists that is not serving any of those entities and should be either consolidated or removed?

Those four questions produce an architecture. A keyword list produces a backlog.

The Three Structural Problems Enterprise Keyword Programs Produce

Before building the solution, it is worth being precise about the specific ways enterprise keyword sprawl damages search performance. They are distinct problems with distinct fixes, and treating them all the same way produces mediocre results for all of them.

Problem One: Keyword Cannibalization

Keyword cannibalization is not merely a technical error or a keyword conflict. It is a fundamental strategic failure where a domain competes against itself, fracturing its authority and forcing search engines to choose between multiple imperfect options rather than presenting a single, authoritative result. (Source: Link Juice Club)

When enterprise brands audit their sites comprehensively, they typically find brand keywords triggering six to thirty-four competing URLs for the same query. The pages swap positions in SERPs constantly, never breaking into high-ranking positions because no single page accumulates the authority that a consolidated page would. (Source: ClickRank)

The diagnostic is straightforward in Google Search Console: filter your Performance report by a high-value query, then click the Pages tab. If two or more URLs are accumulating impressions and clicks for the same query, especially if their average positions fluctuate wildly day to day, the site is actively experiencing keyword cannibalization and bleeding ranking potential across multiple weak pages instead of building it on one strong one. (Source: Pressfarm)
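The same check can be run in bulk over an export of query and page pairs. The sketch below is a minimal illustration, not a Search Console API client: the row format, the field names (`query`, `page`, `impressions`), and the impression threshold are all assumptions chosen for the example.

```python
from collections import defaultdict

def find_cannibalized_queries(rows, min_impressions=100, min_pages=2):
    """Return {query: [pages]} for queries where several URLs earn impressions.

    rows: iterable of dicts with hypothetical keys "query", "page", "impressions".
    Pages below min_impressions are ignored as noise (threshold is an assumption).
    """
    pages_by_query = defaultdict(set)
    for row in rows:
        if row["impressions"] >= min_impressions:
            pages_by_query[row["query"]].add(row["page"])
    return {
        query: sorted(pages)
        for query, pages in pages_by_query.items()
        if len(pages) >= min_pages
    }

# Hypothetical sample data standing in for a Performance report export.
sample_rows = [
    {"query": "ecm software pricing", "page": "/pricing", "impressions": 900},
    {"query": "ecm software pricing", "page": "/blog/pricing-models", "impressions": 650},
    {"query": "ecm software pricing", "page": "/features", "impressions": 40},
    {"query": "what is ecm", "page": "/guides/what-is-ecm", "impressions": 1200},
]

flagged = find_cannibalized_queries(sample_rows)
print(flagged)
```

A flagged query is only a candidate for review. Whether the overlap is harmful still depends on intent, as the nuance that follows explains.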

The important nuance: cannibalization is not always a problem. High-authority sites (domain rating 75-plus) often hold positions one and two simultaneously for the same query. The turning point was Google's integration of neural matching, which allows the algorithm to understand that five different articles about the same subject can serve five slightly different user intents without the domain penalizing itself. The critical exception is commercial intent. Regardless of authority level, when multiple pages split traffic for a "buy" or "price" or "signup" keyword, conversion rates drop and consolidation is always the right answer. (Source: ClickRank)

The practical rule for enterprise teams: cannibalization on informational content at high domain authority may be acceptable or even beneficial. Cannibalization on commercial intent pages is always a problem that needs fixing.
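The practical rule can be written down as a small decision function. This is a sketch under stated assumptions: the modifier list is illustrative rather than exhaustive, the function names are hypothetical, and the branch for informational overlap on lower-authority sites is an assumption, since the rule above only covers the high-authority case.

```python
# Illustrative commercial modifiers; a real program would maintain its own list.
COMMERCIAL_MODIFIERS = {"buy", "price", "pricing", "signup", "demo"}

def is_commercial(query):
    """Crude token match against the illustrative modifier list."""
    return any(token in COMMERCIAL_MODIFIERS for token in query.lower().split())

def consolidation_advice(query, competing_pages, high_authority_domain):
    """Map the practical rule to a recommendation string."""
    if competing_pages < 2:
        return "no conflict"
    if is_commercial(query):
        # Commercial intent: always fix, regardless of authority.
        return "consolidate"
    if high_authority_domain:
        # Informational overlap on a strong domain may be acceptable.
        return "monitor"
    # Assumption: the rule above does not cover this case explicitly.
    return "consolidate or differentiate"

print(consolidation_advice("buy ecm software", 3, True))
print(consolidation_advice("what is ecm", 2, True))
```

Token matching of this kind will misclassify some queries, so it is better treated as a first-pass filter ahead of human review than as a final decision.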

Problem Two: Intent Fragmentation

Intent fragmentation is subtler than keyword cannibalization and more damaging at scale. It occurs when a site has multiple pages covering the same topic at slightly different angles — a service page, a blog post, a use case page, a comparison page, a pricing page — without a clear architectural logic for which page addresses which intent and how they support each other.

The result is that each page captures some fraction of the intent signal without any page capturing it authoritatively. A user searching "enterprise content management software pricing" might find a blog post discussing pricing models, a service page listing features without prices, and a dedicated pricing page that does not have enough SEO authority to rank for the query. Three pages, none of them definitively addressing the intent, all of them diluting the authority that a single well-constructed page would command.

The fix for keyword cannibalization is intent architecture, not just redirects. Group competing pages by search intent first. If two pages target the same intent, consolidate them into one authoritative piece. If they target different intents that happen to share a keyword, differentiate the angle and use internal linking to signal which page owns which query. (Source: DevBoat Technologies)

This requires making explicit decisions that most enterprise content teams have never been asked to make: which page is the commercial destination for this topic, which page is the informational resource, and which page is the comparison or decision-support content? Once those decisions are made and enforced in the content architecture, the intent fragmentation resolves. Without those decisions, the fragmentation persists regardless of how much individual page optimization gets done.

Problem Three: Topical Coverage Gaps Inside Overcrowded Topic Areas

The counterintuitive reality of enterprise keyword sprawl is that sites with hundreds or thousands of pages are simultaneously over-indexed in some topic areas and completely unrepresented in others.

Enterprises frequently fail because their content libraries were built for scale before they were built for authority. Thin location pages, repetitive blog posts, generic category copy, and lightly edited AI drafts increase indexation but rarely strengthen brand trust. In 2026, the better approach is selective depth. (Source: Ai Boost)

The typical pattern: a large enterprise has forty blog posts about cloud security and zero substantive content about a specific regulatory compliance requirement that its target customers search for weekly. The forty blog posts compete with each other for the same queries. The compliance topic — which represents genuine expertise the brand has and genuine demand the market has — is completely unaddressed.

Building a keyword universe that doesn't collapse requires both eliminating the over-indexed redundancy and identifying and filling the genuine gaps. Those are not the same exercise. Most enterprise SEO programs do neither systematically.

The Entity-Based Architecture Model

The solution to keyword sprawl is not a different approach to keyword research. It is a fundamentally different organizational model for how topics are structured, owned, and governed across the entire site.

The entity-based architecture model treats the site not as a collection of pages targeting keywords, but as a structured representation of the entities the brand claims authority over. Every entity — a product category, a service line, an audience segment, a problem type, a geographic market — gets a clear home in the architecture. Every page serves a defined function within that architecture. Nothing exists outside the structure.

In 2026, a SaaS enterprise with ten thousand or more pages will outperform competitors not by publishing more blogs but by creating a clean, interconnected entity graph that AI systems can easily understand and cite. (Source: Sotavento Medios)

The architecture has four tiers.

Tier one: Entity definition pages. These are the canonical pages for each primary entity the brand claims authority over. A manufacturing software company has entity pages for each product category, each industry it serves, and each core problem its products solve. These pages are the definitive answer to "what is this company's authority on topic X." They are designed to be comprehensive, authoritative, and stable. They do not chase trends. They establish the entities the brand owns.

Tier two: Pillar content. A pillar page covers a broad, high-volume topic comprehensively at a strategic level, addressing the full scope of the subject without going so deep on any subtopic that it eliminates the need for cluster content. Pillar pages require three thousand to five thousand words of comprehensive coverage. Thin pillar pages undermine the entire cluster's authority signal. Each pillar connects to the entity it belongs to and to every cluster page that supports it. (Source: SEO-Kreativ)

Tier three: Cluster content. Cluster pages address specific subtopics, questions, comparisons, use cases, and supporting queries within each pillar's domain. Every cluster page must link back to the pillar using anchor text that includes the pillar's target keyword. Internal linking is the connective tissue of the cluster: structural, not decorative. The clusters serve two functions simultaneously: they address specific search intents that the pillar page cannot address in full, and they build the topical depth signal that tells search engines the brand has genuine comprehensive authority, not just a landing page. (Source: SEO-Kreativ)

Tier four: Transactional and commercial pages. These are separate from the informational cluster architecture and governed by different rules. They serve commercial intent — pricing, comparison, request-a-demo, buy-now — and should never be allowed to compete with informational content for the same queries. The internal linking structure of the site should funnel users from informational cluster content toward commercial pages, not have them competing for the same positions.
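The four tiers lend themselves to a simple structural check. The sketch below models pages with a hypothetical `Page` record and flags cluster pages that do not link back to their pillar. The field names and sample URLs are assumptions, and the check covers only the existence of the link, not whether its anchor text includes the pillar's target keyword.

```python
from dataclasses import dataclass, field
from typing import Optional, Set

@dataclass
class Page:
    url: str
    tier: str                      # "entity", "pillar", "cluster", or "commercial"
    parent: Optional[str] = None   # pillar URL for clusters, entity URL for pillars
    links_to: Set[str] = field(default_factory=set)

def clusters_missing_pillar_link(pages):
    """Return URLs of cluster pages that do not link to their parent pillar.

    A cluster page with no parent assigned is also flagged.
    """
    return sorted(
        p.url for p in pages
        if p.tier == "cluster" and p.parent not in p.links_to
    )

# Hypothetical site fragment.
pages = [
    Page("/platform", "entity"),
    Page("/guides/cloud-security", "pillar", parent="/platform",
         links_to={"/platform", "/guides/cloud-security/iam"}),
    Page("/guides/cloud-security/iam", "cluster", parent="/guides/cloud-security",
         links_to={"/guides/cloud-security"}),
    Page("/blog/zero-trust-basics", "cluster", parent="/guides/cloud-security",
         links_to={"/pricing"}),
]

missing = clusters_missing_pillar_link(pages)
print(missing)
```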

The Keyword Territory Map: The Governance Document That Makes It Real

Architecture without governance is architecture that will be overwritten within six months. Every enterprise content team that has built a topic cluster model without a mandatory governance layer has watched that model dissolve as new content gets created without reference to the existing structure.

The single most effective governance tool for an enterprise keyword universe is a keyword territory map — a living document that assigns every primary keyword, every pillar topic, and every cluster subtopic to a specific page, with a clear rule: if you cannot clearly explain why two pages' keyword targets are different, one of them needs to change before the second page is built.

Maintaining a keyword territory map means assigning every page a primary keyword, a secondary, and a tertiary, with a simple rule: before any new keyword is finalized for a new or existing page, the entire sitemap is searched for overlapping terms, and different qualifiers are tested to confirm the new page genuinely addresses a distinct intent rather than fragmenting an existing one. (Source: DevBoat Technologies)

At enterprise scale, this governance document needs to be integrated into the content production workflow — not as a reference document that well-intentioned teams check occasionally, but as a mandatory gate that every new page passes through before it is assigned to production. The check is simple: does a page already exist that owns this keyword intent? If yes, the new content brief needs to either justify a genuinely distinct intent or modify the existing page rather than create a new one.
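The gate itself can be very small. The following sketch assumes the territory map is available as a dictionary keyed by a normalized keyword and an intent label. The normalization (lowercasing and sorting words) is deliberately crude and is an assumption, as are the function names and sample entries.

```python
def normalize(keyword):
    """Crude normalization: lowercase and sort words so word order is ignored."""
    return " ".join(sorted(keyword.lower().split()))

def gate_check(territory_map, keyword, intent):
    """Return the URL that already owns this keyword and intent, or None."""
    return territory_map.get((normalize(keyword), intent))

# Hypothetical territory map: (normalized keyword, intent) -> owning URL.
territory_map = {
    (normalize("ECM software pricing"), "commercial"): "/pricing",
    (normalize("what is ecm"), "informational"): "/guides/what-is-ecm",
}

def review_brief(keyword, intent):
    """Decide what happens to a new content brief at the gate."""
    owner = gate_check(territory_map, keyword, intent)
    if owner is None:
        return "approve new page"
    return f"update existing page {owner} or justify a distinct intent"

print(review_brief("ecm pricing software", "commercial"))
print(review_brief("ecm implementation checklist", "informational"))
```

Exact matching on normalized strings will miss near-synonyms, so a real gate would likely pair a lookup like this with a manual review of close variants.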

Many companies have an SEO style guide or a set of recommendations, but nothing enforces them. In 2026, that is no longer enough. A center of excellence with real authority to impose standards (centrally managed content templates, automated checks for structured data, and routine compliance audits) is what separates enterprise SEO programs that maintain their architecture from ones that rebuild it every two years. (Source: iTech Manthra)

The Audit Methodology: Starting From Where You Actually Are

Most enterprise organizations building a keyword universe are not starting from a clean slate. They are starting from years of accumulated content with no coherent structure, overlapping topics, mixed intent targeting, and significant cannibalization. The audit that reveals the actual state of the current content is the prerequisite to building the architecture that replaces it.

The audit has five stages that must happen in sequence.

Stage one: Inventory. Export every indexed URL from Google Search Console. For each URL, pull its clicks, impressions, average position, and the top three queries it generates impressions for. This is the raw material. It tells you what exists, what Google thinks each page is about, and roughly how much value each page is currently providing.

Stage two: Intent clustering. Group pages by the intent their top queries represent, not by the URL structure or the section of the site they sit in. Pages from different sections of the site often target the same intent. This grouping reveals the cannibalization clusters — the groups of pages that are all trying to serve the same search need.

Stage three: Authority assignment. Within each intent cluster, identify the strongest page — the one with the most clicks, the most backlinks, the best average position, or some combination. This is the candidate to survive as the canonical page for that intent. Every other page in the cluster is a candidate for consolidation, redirection, or differentiation.

Stage four: Gap analysis. Map your intent clusters against your keyword universe research. Pages and content hubs should be prioritized based on business impact, not just traffic potential. This is particularly important for enterprises managing thousands or millions of URLs, where resource allocation is critical. The gaps (topics where the brand has genuine authority and genuine market demand but no substantive content) are the highest-priority new content investments. They always exist, even on sites with thousands of pages, because the existing content was built for volume rather than coverage. (Source: Ai Boost)

Stage five: Pruning decisions. The standard operating procedure for 2026 involves quarterly intent audits using semantic analysis to spot drifting content, and ruthless pruning: if a page has not generated meaningful traffic in twelve months and does not serve a necessary architectural function, it is merged or deleted. (Source: Link Juice Club)
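Stages two and three can be sketched together once each inventory row carries an intent label. In the example below the labels are assumed to have been assigned upstream (manually or by a clustering step not shown), and ranking by clicks first and average position second is only one possible reading of "some combination". The field names and sample data are hypothetical.

```python
from collections import defaultdict

def assign_canonical(inventory):
    """Group pages by intent label and pick the strongest page in each group.

    Strength is approximated by clicks (higher is better), with average
    position (lower is better) as a tie-breaker. This weighting is an assumption.
    """
    clusters = defaultdict(list)
    for page in inventory:
        clusters[page["intent"]].append(page)

    plan = {}
    for intent, pages in clusters.items():
        ranked = sorted(pages, key=lambda p: (-p["clicks"], p["avg_position"]))
        plan[intent] = {
            "canonical": ranked[0]["url"],
            # Candidates for consolidation, redirection, or differentiation.
            "candidates": [p["url"] for p in ranked[1:]],
        }
    return plan

# Hypothetical inventory rows from stage one, with intent labels added.
inventory = [
    {"url": "/blog/cloud-security-101", "intent": "cloud security basics",
     "clicks": 310, "avg_position": 11.2},
    {"url": "/blog/intro-to-cloud-security", "intent": "cloud security basics",
     "clicks": 120, "avg_position": 9.4},
    {"url": "/guides/what-is-ecm", "intent": "ecm definition",
     "clicks": 800, "avg_position": 3.1},
]

plan = assign_canonical(inventory)
print(plan)
```

Backlink counts are left out here to keep the sketch short; adding them would mean extending the sort key.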

The pruning decision is the one most enterprise teams resist because it feels like destroying work. The data consistently shows the opposite effect. One SEO agency recorded a 110% uplift in organic sessions after consolidating competing pages into single authoritative resources. Another saw revenue from organic traffic increase by 170% after resolving cannibalization on high-intent commercial pages. Fewer, stronger pages consistently outperform more, weaker ones. (Source: Pressfarm)
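The stage-five rule is mechanical enough to express directly. In this sketch the click threshold that stands in for "meaningful traffic" is an assumption, as are the field names. Choosing merge over delete based on whether a merge target exists is also an illustrative simplification.

```python
def pruning_decision(page, min_clicks_12m=10):
    """Apply the stage-five rule to one page record (hypothetical fields).

    Keep the page if it earned meaningful traffic in the last twelve months
    or serves a necessary architectural function; otherwise merge or delete.
    """
    if page["clicks_12m"] >= min_clicks_12m or page["architectural_role"]:
        return "keep"
    return "merge" if page["merge_target"] else "delete"

# Hypothetical page records.
pages_to_review = [
    {"url": "/blog/old-webinar-recap", "clicks_12m": 0,
     "architectural_role": False, "merge_target": None},
    {"url": "/blog/intro-to-cloud-security", "clicks_12m": 3,
     "architectural_role": False, "merge_target": "/blog/cloud-security-101"},
    {"url": "/legal/terms", "clicks_12m": 1,
     "architectural_role": True, "merge_target": None},
    {"url": "/guides/what-is-ecm", "clicks_12m": 800,
     "architectural_role": False, "merge_target": None},
]

decisions = {p["url"]: pruning_decision(p) for p in pages_to_review}
print(decisions)
```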

Applying the Architecture to Multi-Product-Line Organizations

The specific challenge for organizations managing hundreds of product or service lines is that the entity hierarchy has to be built and maintained across a much larger domain than a single-focus site. Each product line is its own entity with its own pillar, its own clusters, and its own commercial pages. The governance challenge scales accordingly.

The structural principle that prevents multi-product-line sites from collapsing into chaos is strict hierarchy enforcement. Each product line maps to one and only one primary entity page. Each primary entity page owns a defined set of pillar topics. Each pillar owns a defined set of cluster topics. No cluster topic crosses over into another product line's pillar without an explicit architectural decision and a canonical designation.
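Hierarchy enforcement can be checked automatically if the taxonomy is stored as data. The sketch below assumes a nested mapping of product line to pillar to cluster topics and reports any cluster topic that appears under more than one product line without an explicit approval. The structure, names, and sample topics are hypothetical.

```python
from collections import defaultdict

def cross_line_conflicts(hierarchy, approved=frozenset()):
    """Find cluster topics claimed by more than one product line.

    hierarchy: {product_line: {pillar: [cluster_topic, ...]}}
    approved: lowercase topics with an explicit architectural decision on record.
    """
    owners = defaultdict(set)
    for line, pillars in hierarchy.items():
        for pillar, topics in pillars.items():
            for topic in topics:
                owners[topic.lower()].add((line, pillar))
    return {
        topic: sorted(places)
        for topic, places in owners.items()
        if len({line for line, _ in places}) > 1 and topic not in approved
    }

# Hypothetical two-division taxonomy.
hierarchy = {
    "enterprise software": {
        "content management": ["access control", "document retention"],
    },
    "security": {
        "cloud security": ["Access Control", "zero trust"],
    },
}

conflicts = cross_line_conflicts(hierarchy)
print(conflicts)
```

Passing `approved={"access control"}` would suppress the conflict, which mirrors the explicit architectural decision the principle calls for.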

This sounds obvious stated plainly. The execution challenge is that at enterprise scale, the people making content decisions in one product line often do not know what decisions were made in another. A blog post published by the enterprise software division addressing a topic that is already covered by a cluster page in the security division creates cannibalization that neither team is aware of. The keyword territory map, applied across the entire organization rather than within individual product lines, is the only mechanism that catches these cross-divisional conflicts before they compound.

Prompt-level research (identifying the actual questions people ask in natural language and mapping those prompts to content, entities, and proof points) often reveals gaps that standard keyword tools do not surface. A cybersecurity company may target a primary category term successfully in organic search while AI users ask specific operational questions that are nowhere in the existing content. The keyword universe has to account for both the traditional keyword and the conversational query that AI systems are generating answers for. (Source: Ai Boost)

The multi-product-line site that wins in 2026 does not try to rank for everything. It identifies the specific topic areas where it has genuine, documentable expertise, builds genuine depth in those areas, and maintains the architectural discipline to stop adding content outside that perimeter until the depth inside it is competitive.

The Connection to AI Search Visibility

Everything discussed above matters more in the AI search context than it does in traditional SEO, and it matters in a specific technical way that enterprise teams need to understand explicitly.

As we have written in our piece on the entity problem, AI search systems build confidence about a brand based on the coherence and consistency of its topical presence across the web. A site with a clean entity-based architecture — where each topic has one clearly designated authoritative page, where the pillar-cluster structure makes the topical hierarchy legible to a crawler, where entity definition pages establish precisely what the brand claims authority over — is dramatically easier for an AI system to model and cite with confidence than a site with keyword sprawl.

Topical authority is built through a specific content architecture that mirrors how human experts think about and explain complex subjects. When clusters are complete, topical authority compounds. Every new page strengthens the whole topic instead of starting from zero. Search engines reward sites that show intentional topic coverage, not sites that chase trends or keywords. (Source: SEO-Kreativ)

The GEO implication is direct: an enterprise that has resolved its cannibalization problems and built a coherent entity-based architecture is not just better positioned in traditional search. It is building the kind of topical authority signal that makes AI systems confident enough to cite it in generated answers — which, in an environment where AI search visibility is increasingly the channel that shapes buyer perception before a purchase decision is made, is the competitive asset that compounds most durably over time.

As we have shown in our enterprise SEO alignment post, the strategy is only as valuable as the execution infrastructure that supports it. Building a keyword universe requires the same cross-functional alignment that any enterprise SEO initiative requires: content teams, product marketing, development, and leadership all operating from the same architectural document with the same governance rules. The architecture without the governance is a one-time cleanup that will re-accumulate sprawl within eighteen months. The governance without the architecture is process applied to the wrong structure. Together, they produce a keyword universe that actually holds.

Sources cited in this piece:

GrowthOS — Enterprise SEO Guide for 2026: Strategy, Governance, and a 90-Day Plan

Digital Applied — SEO Content Clusters 2026: Topic Authority Guide

ClickRank.ai — Topical Authority SEO: The Ultimate 2026 Guide

The Creative Digital — Why Enterprise SEO Breaks in 2026: 5 AI Trends That Solve It

Decoding — Content Cannibalization: What It Is and How to Fix It in 2026

Traficxo — Content Cannibalization in 2026: Fix SEO Conflicts and Restore Rankings

Grit Daily — How to Handle Keyword Cannibalization: 11 Effective Strategies

Search Engine Land — Fix Keyword Cannibalization: Identify and Resolve SEO Issues

Wix SEO Hub — A Complete Guide to Preventing Keyword Cannibalization

SerpSculpt — Technical SEO for Enterprise SaaS: The Complete 2026 Guide

DevBoat Tech — Enterprise SEO Operating Models: 2026 Strategy Guide

Sotavento Medios — Scalable Enterprise SEO Strategies 2026: The AI and Entity Framework

Internal resources referenced:

You Don't Have a Content Problem. You Have an Entity Problem.

Why Enterprise SEO Fails: The Internal Alignment Problem No Agency Will Tell You About

Managing hundreds of product lines and not sure why your content isn't compounding? The architecture is almost certainly the problem. Let's map it together. →

Frequently Asked Questions

What is the difference between keyword sprawl and having a large content library?

A large content library is a feature. Keyword sprawl is a structural failure. The difference is whether the content has a coherent architecture underneath it. A large content library where every page serves a defined function, addresses a distinct intent, and connects to the topical hierarchy through internal linking is a competitive asset that compounds over time. Keyword sprawl is what happens when content volume grows without architectural governance — the same topics get covered repeatedly from slightly different angles, pages compete with each other for the same queries, and no single page accumulates enough authority to rank or earn AI citations definitively. You can have five hundred pages and no keyword sprawl if the architecture is sound. You can have eighty pages and significant keyword sprawl if nobody ever mapped the relationships between them.

How do I know if my enterprise site has a keyword cannibalization problem?

Open Google Search Console, go to the Performance report, filter by a high-value commercial query, and click the Pages tab. If two or more of your URLs are accumulating impressions for the same query, and especially if those URLs trade average positions week over week, you have cannibalization. At the portfolio level, pull your top fifty queries by impression volume and run this check for each. The pattern that reveals itself on most enterprise sites is a cluster of pages oscillating between positions eight and twenty for the same term, none of them ever breaking into the top five, because the authority that should be concentrated on one page is being split across several. The other signal is in your content calendar: if your team has ever written a piece and later realized a similar piece already exists, and both pieces remained live, you have the conditions for cannibalization.

Should we always consolidate cannibalizing pages, or are there cases where we shouldn't?

Not always, and the decision framework matters. Consolidation is clearly the right answer when two or more pages target the same intent with no meaningful differentiation between what they offer the user. Consolidation is also clearly the right answer for any commercial intent keyword — pricing, comparison, request-a-demo — where multiple pages splitting authority reduces conversion performance regardless of traffic impact. The cases where consolidation is not automatically correct are informational topics where the pages genuinely address different user journeys. A page about what a technology is, a page about how to implement it, and a page about how to evaluate vendors all touch the same broad topic but serve meaningfully different intents. Those can coexist if the internal linking clearly establishes the relationship and each page's focus is genuinely distinct. The test is whether a reasonable user would feel their question was answered by the page they landed on, or whether they would need to navigate to another of your pages to complete their intent. If they need to navigate, you have fragmentation. If they are satisfied, you have coverage.

How do we build a keyword universe for a business with four hundred product lines without it becoming unmanageable?

By building the architecture at the entity level rather than the keyword level. Four hundred product lines do not require four hundred separate keyword strategies. They require a taxonomy that organizes those product lines into a hierarchy of categories, subcategories, and individual products — and then a governance model that defines which level of the hierarchy each type of content belongs to. The category entity page owns the broad topical authority for everything in that category. The subcategory pages own the more specific topics within it. Individual product pages own the transactional queries specific to that product. Informational cluster content is tagged to whichever level of the hierarchy it supports. Once that structure is defined, the question for every new piece of content becomes simple: which entity does this belong to, and does a page already exist that owns this intent within that entity? The complexity is real but it is architectural complexity, not keyword research complexity. Solving it once through clear taxonomy design is significantly more durable than solving it repeatedly through one-off keyword decisions.

What is a keyword territory map and how do we actually implement one?

A keyword territory map is a living document — typically a spreadsheet or a structured section of your SEO governance system — that assigns every target keyword to exactly one page, with a stated intent designation and a stated owner. The minimum fields for each entry are the target URL, the primary keyword, the secondary keyword, the intent type, the content tier it belongs to in your architecture, and the last date it was reviewed. The implementation requires two things beyond creating the document: a workflow gate that checks new content against the map before production begins, and a quarterly audit that identifies pages whose actual search performance has drifted away from their stated intent designation. The most common failure mode is creating the map and treating it as a reference document rather than a gate. When content teams can publish pages without checking the map, the map becomes inaccurate within months and loses its governance value. The map only works if checking it is mandatory, not optional.
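The minimum fields listed above map naturally onto a small record type, and the two supporting processes (one owner per keyword, quarterly review) become simple checks over those records. This is a sketch only: the class name, field names, and the ninety-day interpretation of "quarterly" are assumptions.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date, timedelta

@dataclass
class TerritoryEntry:
    url: str
    primary_keyword: str
    secondary_keyword: str
    intent: str          # e.g. "commercial" or "informational"
    tier: str            # e.g. "entity", "pillar", "cluster", "commercial"
    last_reviewed: date

def duplicate_primary_keywords(entries):
    """Primary keywords assigned to more than one page (case-insensitive)."""
    counts = Counter(e.primary_keyword.lower() for e in entries)
    return sorted(k for k, n in counts.items() if n > 1)

def overdue_for_review(entries, today, max_age_days=90):
    """URLs whose last review is older than the assumed quarterly window."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(e.url for e in entries if e.last_reviewed < cutoff)

# Hypothetical map entries.
entries = [
    TerritoryEntry("/pricing", "ecm software pricing", "ecm cost",
                   "commercial", "commercial", date(2026, 5, 1)),
    TerritoryEntry("/blog/pricing-models", "ECM software pricing", "pricing models",
                   "informational", "cluster", date(2026, 1, 15)),
    TerritoryEntry("/guides/what-is-ecm", "what is ecm", "ecm definition",
                   "informational", "pillar", date(2026, 4, 20)),
]

print(duplicate_primary_keywords(entries))
print(overdue_for_review(entries, today=date(2026, 6, 1)))
```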

How does the pillar-cluster model apply to a B2B enterprise with highly technical product lines where the same keyword might mean different things in different contexts?

The pillar-cluster model applies directly, but the entity definition layer becomes more important than in simpler domains. The solution to technical disambiguation at enterprise scale is building entity definition pages that are extremely precise about the specific context the brand is addressing. A cloud infrastructure company and a security software company might both target the word "compliance," but their entity pages define compliance in their specific contexts — the infrastructure company for data residency and cloud compliance, the security company for regulatory and audit compliance. Those are different entities. The pillar pages and clusters that sit beneath each are built for their specific context and do not compete with each other because the entity definitions are clear. Where enterprise technical organizations go wrong is treating broad category terms as the entity without doing the disambiguation work. The keyword universe needs to be built from the entity outward, not from the keyword inward, which means starting with precise definitions of what the brand specifically addresses rather than starting with what search volume suggests is worth targeting.

How long does it take to see results from restructuring an enterprise keyword universe?

The timeline has two phases with different expectations. The technical restructuring phase — consolidating cannibalizing pages, implementing redirects, updating internal links, rationalizing sitemaps — typically produces measurable ranking improvements within four to twelve weeks as Google processes the consolidation signals and begins concentrating authority on the surviving pages. Several documented case studies show significant traffic uplifts within sixty to ninety days of aggressive consolidation work. The topical authority building phase — establishing depth in the priority topic clusters, building external citation patterns, earning the AI search visibility that comes from coherent entity representation — operates on a six-to-twelve-month timeline. The important point for enterprise stakeholders is that these are not the same timeline and should not be measured with the same expectations. Consolidation produces fast, visible results. Authority building produces durable, compounding results. Both are part of the same program.

What happens to SEO performance during a content consolidation — is there a risk of losing rankings?

Yes. The risk is real and should be managed deliberately rather than ignored. When you consolidate two pages into one and redirect the weaker to the stronger, there is typically a short period of ranking volatility as Google processes the consolidation and re-evaluates the surviving page. This usually resolves positively within four to eight weeks, with the consolidated page settling at a higher position than either individual page held before the consolidation. The risk is higher when the page being redirected has meaningful backlink equity or traffic in its own right. In those cases the consolidation needs to be executed carefully to ensure all link equity flows correctly to the surviving URL. The cases where consolidation produces lasting negative outcomes are relatively rare and almost always trace back to execution errors: broken redirects, internal links not updated to point to the new canonical URL, or a consolidated page that is not substantive enough to carry both the traffic and the authority it absorbed. Plan the consolidation carefully, monitor position data for thirty days after each batch of changes, and correct course quickly if specific pages show unexpected drops.
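Two of the execution errors named above, broken redirects and internal links left pointing at retired URLs, can be caught before launch with a simple check over the planned redirect map. The sketch below is one possible approach, assuming the redirect plan is available as a plain dictionary of old URL to new URL and the internal links as `(source_page, href)` pairs from a crawl export. The function names `resolve` and `audit_consolidation` are hypothetical.

```python
def resolve(redirects, url):
    """Follow a URL through the redirect map.

    Returns (final_url, hops). final_url is None if the redirects loop.
    """
    hops = 0
    seen = {url}
    while url in redirects:
        url = redirects[url]
        hops += 1
        if url in seen:
            return None, hops  # redirect loop detected
        seen.add(url)
    return url, hops


def audit_consolidation(redirects, internal_links):
    """Flag loops, multi-hop chains, and internal links to redirected URLs.

    redirects: dict mapping old URL -> new URL
    internal_links: iterable of (source_page, href) pairs
    """
    loops = []
    chains = {}
    for old_url in redirects:
        final_url, hops = resolve(redirects, old_url)
        if final_url is None:
            loops.append(old_url)
        elif hops > 1:
            # More than one hop: point old_url straight at final_url instead.
            chains[old_url] = final_url

    stale_links = []
    for source_page, href in internal_links:
        if href in redirects:
            final_url, _ = resolve(redirects, href)
            # The link should be rewritten to final_url on source_page.
            stale_links.append((source_page, href, final_url))

    return {"loops": sorted(loops), "chains": chains, "stale_links": stale_links}
```

This only validates the plan on paper. It does not replace checking live HTTP responses after the redirects ship.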

How should we prioritize which cannibalizing pages to fix first across a site with hundreds of overlapping issues?

Prioritize by commercial impact, not by technical severity. The cannibalization issues that deserve immediate attention are the ones where a commercial intent page (a pricing page, a request-a-demo page, a product comparison page) is losing ranking authority to an informational page targeting the same or similar queries. These cost conversions directly, and fixing them produces the fastest measurable business return. The second priority is your highest-volume organic entry points, the pages generating the most impressions and clicks, where cannibalization is suppressing performance. This is where authority consolidation produces the biggest absolute traffic gains. Informational cannibalization at low traffic volume is a real problem but a low-urgency one that can be addressed in rolling quarterly cleanup cycles rather than emergency remediation. Build a prioritization matrix that scores each cannibalization cluster by commercial intent, impression volume, and estimated traffic uplift from consolidation, and work through it in that order rather than trying to fix everything simultaneously.
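A prioritization matrix of this kind can be sketched as a weighted score. The example below is illustrative only: the `CannibalizationCluster` fields, the normalization against the largest value in the batch, and the default weights of 0.5, 0.3, and 0.2 are assumptions chosen so that commercial intent dominates, followed by impression volume, followed by estimated uplift. Tune them to your own data.

```python
from dataclasses import dataclass


@dataclass
class CannibalizationCluster:
    """A group of pages competing for the same queries (illustrative fields)."""
    name: str
    commercial_intent: bool       # does the cluster include a commercial page?
    monthly_impressions: int
    estimated_uplift_clicks: int  # your own estimate of clicks gained


def priority_score(cluster, max_impressions, max_uplift, weights=(0.5, 0.3, 0.2)):
    """Weighted score in [0, 1]; a higher score means fix sooner."""
    w_commercial, w_impressions, w_uplift = weights
    commercial = 1.0 if cluster.commercial_intent else 0.0
    # Scale volume metrics against the largest value in the batch.
    impressions = cluster.monthly_impressions / max_impressions if max_impressions else 0.0
    uplift = cluster.estimated_uplift_clicks / max_uplift if max_uplift else 0.0
    return w_commercial * commercial + w_impressions * impressions + w_uplift * uplift


def prioritize(clusters, weights=(0.5, 0.3, 0.2)):
    """Return clusters sorted from highest to lowest priority."""
    max_impressions = max((c.monthly_impressions for c in clusters), default=0)
    max_uplift = max((c.estimated_uplift_clicks for c in clusters), default=0)
    return sorted(
        clusters,
        key=lambda c: priority_score(c, max_impressions, max_uplift, weights),
        reverse=True,
    )
```

With these weights, a commercial cluster with moderate volume outranks a much larger informational cluster, which matches the ordering described above. If that ever feels wrong for a specific case, adjust the weights rather than overriding the list by hand, so the rationale stays visible.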

Does fixing keyword cannibalization help with AI search citations as well as traditional rankings?

Directly and significantly. The mechanism is the same one that makes canonical clarity important in traditional SEO, but the consequences in AI search are more immediate. AI systems synthesizing answers from web content need to identify a single authoritative source for each topic they are citing. When a brand has three pages addressing the same topic from slightly different angles, the AI system either selects one inconsistently or passes over all of them in favor of a competitor with a single clear authoritative page. Once you consolidate cannibalizing content into a single definitive page with clear entity signals, structured data, and strong internal linking establishing its authority, AI systems can identify it as the canonical source for that topic and cite it consistently. Several organizations have documented meaningful improvements in AI citation frequency following cannibalization cleanup, specifically because the architectural clarity that helps Google determine which page to rank is the same architectural clarity that helps LLMs determine which page to cite.