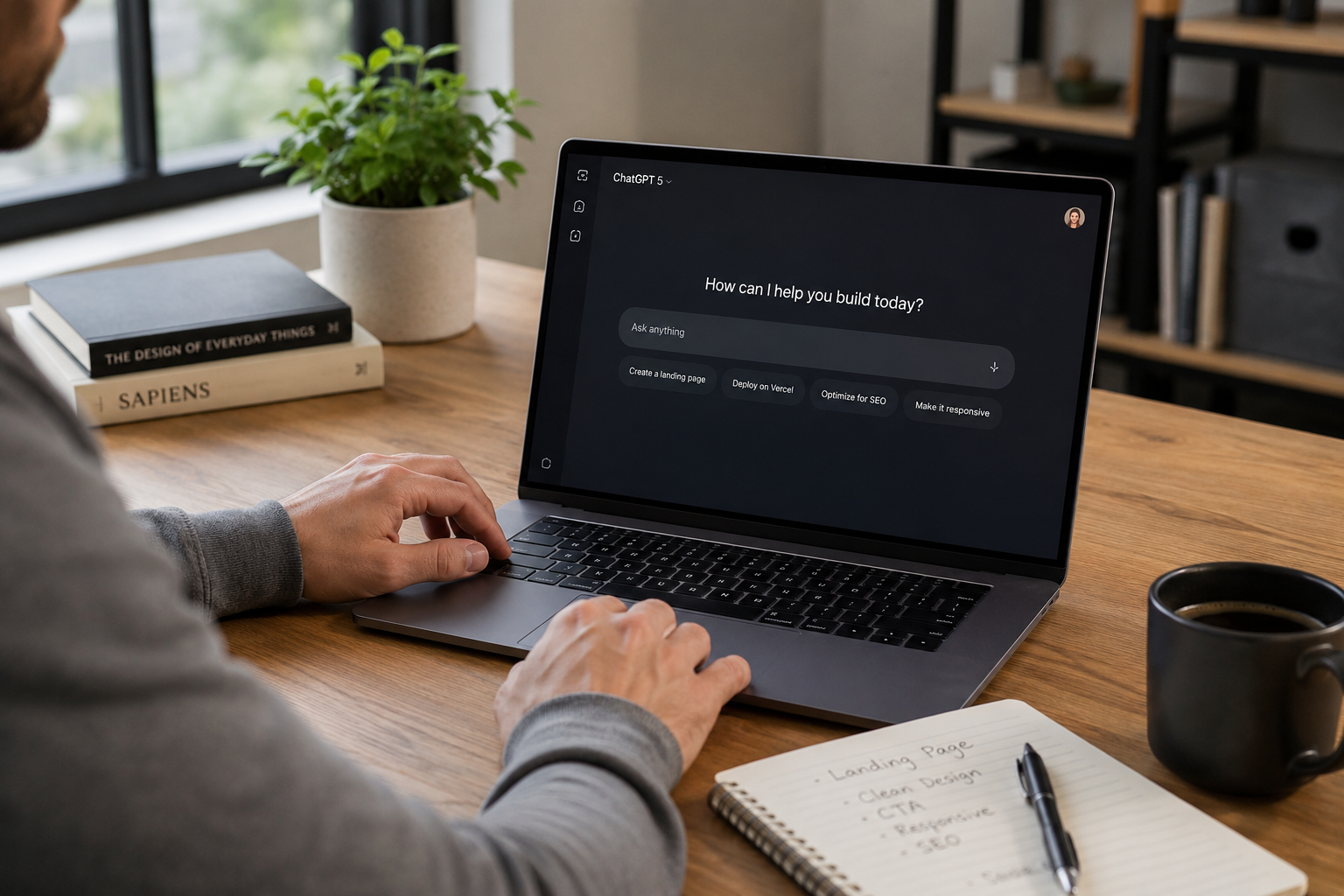

ChatGPT Just Got Seriously Good at Building Websites — Here's What Changed

If you've been sleeping on ChatGPT as a web design tool, it's time to wake up.

For most of the past year, asking an AI to build you a landing page was a humbling experience. You'd get something technically functional — the HTML rendered, the buttons were clickable — but nobody would ever mistake it for the work of a real designer. Generic layouts. Weak typography. The unmistakable aesthetic of "a robot did this." Hero sections with placeholder gradients and stock-photo energy. Feature cards that all looked identical. Footer text so bare it felt like the model gave up.

If you asked for something specific — a bold, image-forward hero, a sticky nav with a scroll trigger, a pricing section that actually communicated value — you might get a rough approximation. But the gap between what you described and what you received was wide enough to drive a truck through. Designers weren't worried. Business owners were frustrated. And most people quietly concluded that AI web design was a novelty, not a tool.

That era is over.

As of right now — May 2026 — ChatGPT is producing frontend code that is, in many cases, legitimately production-ready. Not "good for AI." Actually good. The kind of thing you could hand to a developer and say "start here." The kind of thing a client could look at and not immediately ask who built it. The leap is real, it's documented, and if you haven't tested it recently, you're operating on outdated assumptions.

So what changed? A lot, actually — and it happened in layers.

Where We Were: The Mid-2024 Baseline

To understand how far things have moved, it helps to be honest about where they started.

In mid-2024, the dominant model inside ChatGPT was GPT-4o. It was a genuinely impressive general-purpose model — strong at reasoning, capable at writing, useful for dozens of everyday tasks. But web design was not its strong suit.

The core problem wasn't that it couldn't write HTML. It could. The problem was that it defaulted to safe, overrepresented patterns that reflected the average of everything it had seen on the internet — which meant average design. It knew what a landing page looked like because it had seen thousands of them. It reproduced the median, not the best.

Ask it for a SaaS landing page and you'd get a hero with a headline in bold, a subheadline in gray, three feature cards below the fold, a testimonials section, and a CTA button. Every time. The structure wasn't wrong. It was just thoroughly unremarkable. The kind of page that blends into the background of the internet rather than standing out from it.

Typography was another consistent weakness. The model understood that fonts existed and that hierarchy mattered, but the choices were almost always conservative to the point of being generic. System fonts. Safe pairings. Nothing that communicated a real brand personality.

And perhaps most frustratingly, the code itself — while functional — wasn't always clean. Inline styles mixed with class-based styling. Redundant CSS. Structure that worked in a browser but would have made a real developer wince.

This was the baseline. And against that baseline, the progress made over the past twelve to eighteen months is dramatic.

The Upgrade Cycle That Got Us Here

The leap to where we are now didn't happen in a single release. It was a series of compounding improvements that each moved the needle — and eventually crossed a threshold where the output category changed entirely.

GPT-4.1 — April 2025

The first major signal came in April 2025 with the launch of GPT-4.1. This model was built with a specific focus on coding and instruction following, and the benchmark results reflected it clearly.

On SWE-bench Verified, a benchmark that measures the ability to solve real-world software engineering problems, GPT-4.1 completed 54.6% of tasks, compared to just 33.2% for GPT-4o. That's not a marginal improvement. That's a jump of more than 21 percentage points on a test designed to reflect actual developer work: fixing bugs in real codebases, implementing features from descriptions, navigating unfamiliar code. (Source: OpenAI)

OpenAI described GPT-4.1 as excelling at precise instruction following and web development tasks, and positioned it as a leading model for coding work. For the first time, the model was being explicitly marketed on the strength of its development capabilities, not just its general intelligence. (Source: OpenAI)

GPT-4.1 also introduced a dramatically expanded context window of up to one million tokens, a major jump from GPT-4o's 128k limit. For web development, this means the model can hold significantly more of your project in mind at once (your full codebase, your design system, your component library, your brand guidelines), all without losing track of what was established earlier in the conversation. (Source: OpenAI)

The instruction-following improvements were arguably just as important as the raw coding gains. Building anything through prompts depends on the model doing what you actually asked. GPT-4.1 scored 38.3% on the Scale MultiChallenge benchmark for instruction following, an increase of 10.5 percentage points over GPT-4o. That gap compounds across every iteration of a project. Fewer rounds of correction. Less time re-explaining. More time actually building. (Source: OpenAI)
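Benchmark gains like the ones quoted above can be read two ways: as a gap in percentage points, or as a relative improvement over the older score. Here is a minimal sketch of that arithmetic in Python. It assumes the 10.5 figure for MultiChallenge is an absolute gain in percentage points, which puts GPT-4o at roughly 27.8%; the helper names are made up for illustration.

```python
def point_gain(new_pct: float, old_pct: float) -> float:
    """Absolute gain, in percentage points."""
    return round(new_pct - old_pct, 1)


def relative_gain(new_pct: float, old_pct: float) -> float:
    """Gain relative to the old score, in percent."""
    return round((new_pct - old_pct) / old_pct * 100, 1)


# SWE-bench Verified: GPT-4.1 at 54.6% vs GPT-4o at 33.2% (figures from the text).
swe_points = point_gain(54.6, 33.2)
swe_relative = relative_gain(54.6, 33.2)

# MultiChallenge: GPT-4.1 at 38.3%. Assumption: the 10.5 gain is absolute,
# so the implied GPT-4o score is 38.3 - 10.5.
mc_old = round(38.3 - 10.5, 1)
mc_relative = relative_gain(38.3, mc_old)

print(f"SWE-bench: +{swe_points} points, {swe_relative}% relative")
print(f"MultiChallenge: GPT-4o at about {mc_old}%, {mc_relative}% relative")
```

Read this way, the SWE-bench jump is about 21 points but closer to a two-thirds relative improvement, which is why the point gap alone can undersell the change.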

GPT-5 — August 2025

Then in August 2025, GPT-5 launched — and the benchmarks moved again, more significantly.

GPT-5 became the new default in ChatGPT, replacing GPT-4o, o3, GPT-4.1, and GPT-4.5 for all signed-in users. This is important context that most people missed: the majority of ChatGPT users didn't make a conscious choice to upgrade. They just opened the app one day and were on a substantially more capable model. The interface looked the same. The experience quietly wasn't. (Source: OpenAI)

On SWE-bench Verified, GPT-5 scored 74.9%, compared to 69.1% for o3 and 30.8% for GPT-4o in external testing. In roughly twelve months, the default ChatGPT model had gone from solving a third of real-world coding problems to solving nearly three quarters of them. That's a different tool. (Source: Getpassionfruit)

GPT-5 was also reported to be approximately 45% less likely to contain factual errors than GPT-4o with web search enabled, and, when using its thinking mode, around 80% less likely to contain factual errors than o3. For web development specifically, this translates to fewer nonsensical CSS properties, fewer broken JavaScript patterns, and less code that looks plausible but doesn't actually work. (Source: OpenAI)

GPT-5.4 — March 2026

But the most targeted upgrade for web design specifically came with GPT-5.4, released in March 2026. This is where things get genuinely interesting for anyone thinking about websites.

OpenAI stated that GPT-5.4 is a better web developer than its predecessors, generating more visually appealing and ambitious frontends, and that the model was trained with a specific focus on improved UI capabilities and use of images. That framing matters. This wasn't a side effect of being smarter generally. This was an intentional training decision. OpenAI looked at frontend output quality and decided to fix it directly. (Source: OpenAI)

OpenAI's developer documentation for GPT-5.4 lists advances in coding, document understanding, tool use, instruction following, image perception, long-running task execution, and agentic web search as key improvements over the prior generation. (Source: Aicritique)

And then OpenAI did something they hadn't done for any previous model: they published a dedicated guide specifically on how to get the best frontend results out of GPT-5.4. The existence of that guide is itself a signal. It means the capability is real enough and specific enough to warrant documentation.

What GPT-5.4 Actually Does Differently for Frontend Design

Breaking down the specific improvements helps explain why the output feels so different from what came before.

It uses images as genuine design input. This is one of the most practically significant changes. GPT-5.4 was trained to use image search and image generation tools natively, allowing it to incorporate visual reasoning directly into its design process. You can hand it a screenshot of a site you like, a mood board, or even a rough sketch, and it will use those inputs to inform the actual output rather than acknowledging them and proceeding with generic choices. OpenAI recommends instructing the model to generate a mood board or several visual options before selecting final assets, and to guide it toward strong visual references by describing attributes like style, color palette, composition, and mood. (Source: OpenAI)

It thinks about content strategy, not just structure. One of the persistent failures of earlier AI-generated web design was that the copy was always obviously placeholder. It knew to put words in a hero section, but those words never sounded like a real brand. According to the GPT-5.4 developer documentation, providing the model with real copy, product context, or a clear project goal is one of the simplest ways to improve results. That context helps it choose the right site structure, shape clearer section-level narratives, and write more believable messaging instead of falling back to generic placeholder patterns. The model isn't just arranging HTML elements anymore. It's making decisions about what a section should say and why. (Source: OpenAI)

It produces functionally complete interfaces. Earlier models had a habit of generating designs that looked reasonable in a screenshot but broke the moment you actually clicked something. The developer guide notes that pairing GPT-5.4 with tools like Playwright, which lets the model inspect rendered pages, test multiple viewports, and navigate application flows, significantly improves the likelihood of producing polished, functionally complete interfaces. The model can now, in the right setup, check its own work visually and verify that what it built matches the intended design. (Source: OpenAI)
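To make the viewport check concrete, here is a minimal sketch in Python of the kind of script that setup implies: load a page at a few screen sizes and save a full-page screenshot of each. It assumes the Playwright Python package and a Chromium build are installed. The viewport sizes, the `capture_viewports` helper, and the file naming are illustrative choices, not anything taken from OpenAI's guide.

```python
from pathlib import Path

# Illustrative viewport sizes (width, height); adjust to the devices you care about.
VIEWPORTS = {
    "mobile": (390, 844),
    "tablet": (820, 1180),
    "desktop": (1440, 900),
}


def screenshot_name(label: str, width: int, height: int) -> str:
    """Build a predictable file name for one viewport's screenshot."""
    return f"{label}-{width}x{height}.png"


def capture_viewports(url: str, out_dir: str = ".") -> list[str]:
    """Open the page once per viewport and save a full-page screenshot."""
    # Imported here so the helpers above work even without Playwright installed.
    from playwright.sync_api import sync_playwright

    saved = []
    with sync_playwright() as p:
        browser = p.chromium.launch()
        for label, (width, height) in VIEWPORTS.items():
            page = browser.new_page(viewport={"width": width, "height": height})
            page.goto(url)
            path = str(Path(out_dir) / screenshot_name(label, width, height))
            page.screenshot(path=path, full_page=True)
            page.close()
            saved.append(path)
        browser.close()
    return saved
```

Calling `capture_viewports("http://localhost:3000")` against a local preview (the URL is a placeholder) would leave three images you can compare side by side, which is roughly the feedback loop the guide describes giving to the model.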

It understands design restraint. This is subtle but important. OpenAI's documentation describes GPT-5.4 as having learned a wide spectrum of design approaches, understanding many different ways a website can be built, and notes that great design balances restraint with invention, drawing from patterns that have stood the test of time while introducing something new. The earlier tendency to default to overrepresented, generic patterns was explicitly identified as a problem they trained to solve. The model now has a better internal model of what makes design feel intentional rather than assembled. (Source: OpenAI)

The Code Quality Shift

It's worth spending a moment on this because it's where the practical difference is most felt by anyone who has to actually work with the output.

OpenAI's release notes confirm that an updated version of GPT-4o, a precursor to the current generation, was specifically improved to generate cleaner, simpler frontend code, more accurately think through existing code to identify necessary changes, and consistently produce better results. That focus on code cleanliness carried forward and amplified through each subsequent release. (Source: OpenAI Help Center)

What this means in practice is that the HTML coming out of the current generation of ChatGPT is actually readable. Semantic structure. Consistent class naming conventions. CSS that is organized rather than scattered. JavaScript that does what it says without mystery side effects. It's the difference between getting a first draft you can iterate on and getting a mess you have to rewrite before you can even start.
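Two of those qualities are easy to spot-check yourself. The sketch below uses Python's standard-library HTML parser to count inline `style` attributes and to list which semantic landmark tags a page uses. The `MarkupAudit` class, the tag list, and the sample markup are all illustrative assumptions, and this is a rough smoke test rather than a real linter.

```python
from html.parser import HTMLParser

# Landmark elements we treat as a sign of semantic structure (an illustrative list).
SEMANTIC_TAGS = {"header", "nav", "main", "section", "article", "footer"}


class MarkupAudit(HTMLParser):
    """Counts inline style attributes and records semantic tags seen."""

    def __init__(self):
        super().__init__()
        self.inline_styles = 0
        self.semantic_tags = set()

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs for the opening tag.
        if any(name == "style" for name, _ in attrs):
            self.inline_styles += 1
        if tag in SEMANTIC_TAGS:
            self.semantic_tags.add(tag)


def audit(html: str) -> MarkupAudit:
    parser = MarkupAudit()
    parser.feed(html)
    parser.close()
    return parser


# Hypothetical sample: mostly class-based markup with one leftover inline style.
sample = """
<header><nav class="site-nav"></nav></header>
<main>
  <section class="hero"><h1 class="hero__title">Title</h1></section>
  <div style="color: red">legacy block</div>
</main>
"""

report = audit(sample)
print(report.inline_styles, sorted(report.semantic_tags))
```

A page that reports zero inline styles and a healthy set of landmark tags isn't automatically well built, but a page that fails both checks is usually the kind of output that needs cleanup before anyone builds on it.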

For teams that use AI-generated code as a starting point — which is rapidly becoming the standard workflow for smaller projects — this is enormously valuable. You're not spending the first two hours cleaning up before you can build anything. You're starting from something that a developer can actually touch without frustration.

What This Means for Business Owners

Here is where we get direct, because this is a marketing site and not a tech publication.

AI-generated websites have crossed a quality threshold that matters, and that's worth acknowledging honestly. If you run a small business and you need a clean, professional-looking landing page on a limited budget, the current generation of ChatGPT can get you meaningfully closer to that than it could eighteen months ago. We're not going to pretend otherwise.

But here is what AI still cannot do — and what the benchmark improvements don't measure.

It cannot make strategic decisions about your business. It doesn't know that your conversion rate has been dropping because your CTA is buried below the fold. It doesn't know that your competitors are ranking for a keyword you're not targeting. It doesn't know that your homepage headline is speaking to the wrong audience segment. It generates what you tell it to generate. The quality of what you tell it is entirely on you.

It cannot build SEO architecture. A site that looks great is not the same as a site that ranks. Technical SEO — proper heading hierarchy, schema markup, page speed, mobile performance, internal linking structure, crawlability — doesn't come out of a ChatGPT prompt automatically. Neither does the keyword and content strategy that determines what you're trying to rank for in the first place.
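Two items on that list, heading hierarchy and schema markup, can be sketched in a few lines of Python. This is a minimal illustration, not a full SEO audit. It assumes the common conventions of a single `h1` and no skipped heading levels, and the business name and URL in the JSON-LD are hypothetical placeholders.

```python
import json
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Records heading levels (1 to 6) in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_problems(html: str) -> list[str]:
    """Flag a missing or repeated h1 and any skipped heading level."""
    collector = HeadingCollector()
    collector.feed(html)
    collector.close()
    levels = collector.levels
    problems = []
    if levels.count(1) != 1:
        problems.append(f"expected exactly one h1, found {levels.count(1)}")
    for prev, cur in zip(levels, levels[1:]):
        if cur > prev + 1:
            problems.append(f"h{prev} followed by h{cur} skips a level")
    return problems


def json_ld_script(data: dict) -> str:
    """Wrap structured data in the script tag used for JSON-LD."""
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Hypothetical business details, using schema.org's LocalBusiness type.
business = {
    "@context": "https://schema.org",
    "@type": "LocalBusiness",
    "name": "Example Plumbing Co.",
    "url": "https://www.example.com",
}

print(heading_problems("<h1>Services</h1><h3>Drain repair</h3>"))
print(json_ld_script(business))
```

Checks like these catch structural slips, but they don't decide which keywords the headings should target or which schema types fit the business. That part is still strategy.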

It cannot optimize for AI search visibility. This is the newer frontier that most agencies aren't even thinking about yet. Appearing in ChatGPT's own responses, in Perplexity, in Google's AI Overviews — that requires a specific kind of content architecture and authority-building that goes well beyond generating clean code. The irony of using ChatGPT to build your site while ignoring whether ChatGPT would ever recommend your business is real.

It cannot convert. Design and conversion are related but not the same. A page that looks polished doesn't automatically move visitors to take action. Conversion rate optimization is a discipline built on testing, behavioral data, and an understanding of buyer psychology that no prompt has yet replaced.

And it cannot differentiate you. When everyone has access to the same AI tools, the design floor rises for everyone simultaneously. What was impressive six months ago is table stakes today. The businesses that stand out will be the ones that bring a genuine point of view, a real brand, and a strategic investment in being found and chosen — not just the ones who generated the most polished AI starting point.

The Honest Summary

ChatGPT's web design output in May 2026 is genuinely not the same product it was a year ago. The evidence is in OpenAI's own benchmarks, their release notes, and their decision to publish a dedicated frontend design guide for the first time. GPT-5.4 is the first model where the improvements to UI quality were trained in intentionally, not just emergent from general capability gains.

If you test it today and compare the output to what you would have gotten in June 2024, the difference is obvious. The floor has risen. The generic, placeholder-energy output of early AI web design is being replaced by something that, in the right hands with the right prompts, can genuinely serve as a production starting point.

But the ceiling hasn't moved. The businesses that will win online over the next several years aren't the ones who generate the best AI-assisted code. They're the ones who pair that efficiency with real strategy — who understand that being found in search, being cited in AI answers, and being chosen over competitors is a discipline that requires expertise, not just a better prompt.

That gap is where we work.

Ready to build something that actually performs?

Ritner Digital builds websites that don't just look the part — they rank in Google, get cited in ChatGPT and Perplexity, and convert visitors into customers. If you want a web presence that drives real business growth, let's talk about what that looks like for you.

Start a Project → ritnerdigital.com/#contact

FAQs

Is ChatGPT free to use for building websites?

Yes and no. The free tier gives you access to GPT-5 with usage limits — enough to experiment and prototype. But for serious, iterative web development work where you're going back and forth refining a design, a paid plan at $20/month removes those limits and gives you consistent access to the full model without hitting a wall mid-project. If you're using it for anything business-critical, the paid tier is worth it.

What model should I be using inside ChatGPT for web design?

As of May 2026, GPT-5 is the default model when you open ChatGPT — which means most users are already on it without realizing it. For frontend-specific work, GPT-5.4 is the most capable option available and the first model OpenAI explicitly trained for UI quality. If you're on a paid plan, make sure you're selecting it from the model picker rather than defaulting to whatever auto-selects.

Can I actually publish a website that ChatGPT builds?

Technically yes. ChatGPT will generate working HTML, CSS, and JavaScript you can host anywhere. Practically, you'll still need a hosting provider, a domain, and some comfort moving files around. What it won't handle automatically is CMS setup, domain configuration, page speed optimization, backend functionality, forms that actually send somewhere, or any of the infrastructure that makes a site work as a real business asset rather than a static file.

What's the difference between what ChatGPT builds and what a web design agency builds?

The gap is mostly invisible on the surface and enormous underneath. A well-prompted ChatGPT build can look genuinely good right now — that's the point of this post. What it won't have is intentional SEO architecture, schema markup, conversion-optimized page structure, accessibility compliance, mobile performance tuning, AI search visibility, or any of the strategic decisions that determine whether a site actually generates leads. It's the difference between a car that looks like a racecar and one engineered to perform like one.

Do I need to know how to code to use ChatGPT for web design?

No — and that's one of the genuinely significant shifts with the current generation. You can describe what you want in plain English and get working code back. You don't need to understand the output to use it. That said, knowing even a little HTML goes a long way when you want to customize something specific or explain a problem to a developer you bring in to refine the work. It's not a requirement, but it removes friction.

Will Google penalize a website built with AI?

Google has been clear that it doesn't penalize content or code based on how it was produced — it penalizes low-quality, unhelpful, or spammy output regardless of source. An AI-generated site with solid structure, real content, fast load times, and genuine value for visitors is treated the same as a hand-coded one. The risk isn't that it's AI-generated. The risk is that people publish thin, generic sites and skip the work that makes a site actually useful — to visitors and to search engines.

If AI can build websites now, do I still need a web design agency?

For a proof-of-concept or a simple brochure site on a tight budget, possibly not. For a site that needs to rank in search, appear in AI-generated answers, convert visitors into customers, load fast on mobile, and grow with your business over time — yes. AI is exceptional at generating a polished starting point quickly. Agencies earn their place in the strategy, the optimization, the technical infrastructure, and the ongoing work of making sure what you built actually performs. The tools got better. The expertise required to use them well didn't disappear.

What does it mean for my site to appear in ChatGPT or Perplexity answers?

When someone asks ChatGPT or Perplexity a question like "what's the best [your service] in [your city]," those platforms pull from indexed web content to generate their answers — and they cite sources. If your site has the right content structure, authority signals, and topical coverage, it can be one of those sources. That's called Generative Engine Optimization, or GEO, and it's a separate discipline from traditional SEO. Having a good-looking website doesn't get you there. Having a strategically built one can.