How Google Calculates Crawl Budget — And What Actually Gets You More of It

Most SEO conversations focus on what Google thinks of your content after it's been crawled. Rankings, authority, relevance — these are downstream outcomes. But there's a step that happens before any of that, one that most businesses and a surprising number of marketers barely think about, and it has a direct effect on how quickly your site responds to the work you're doing.

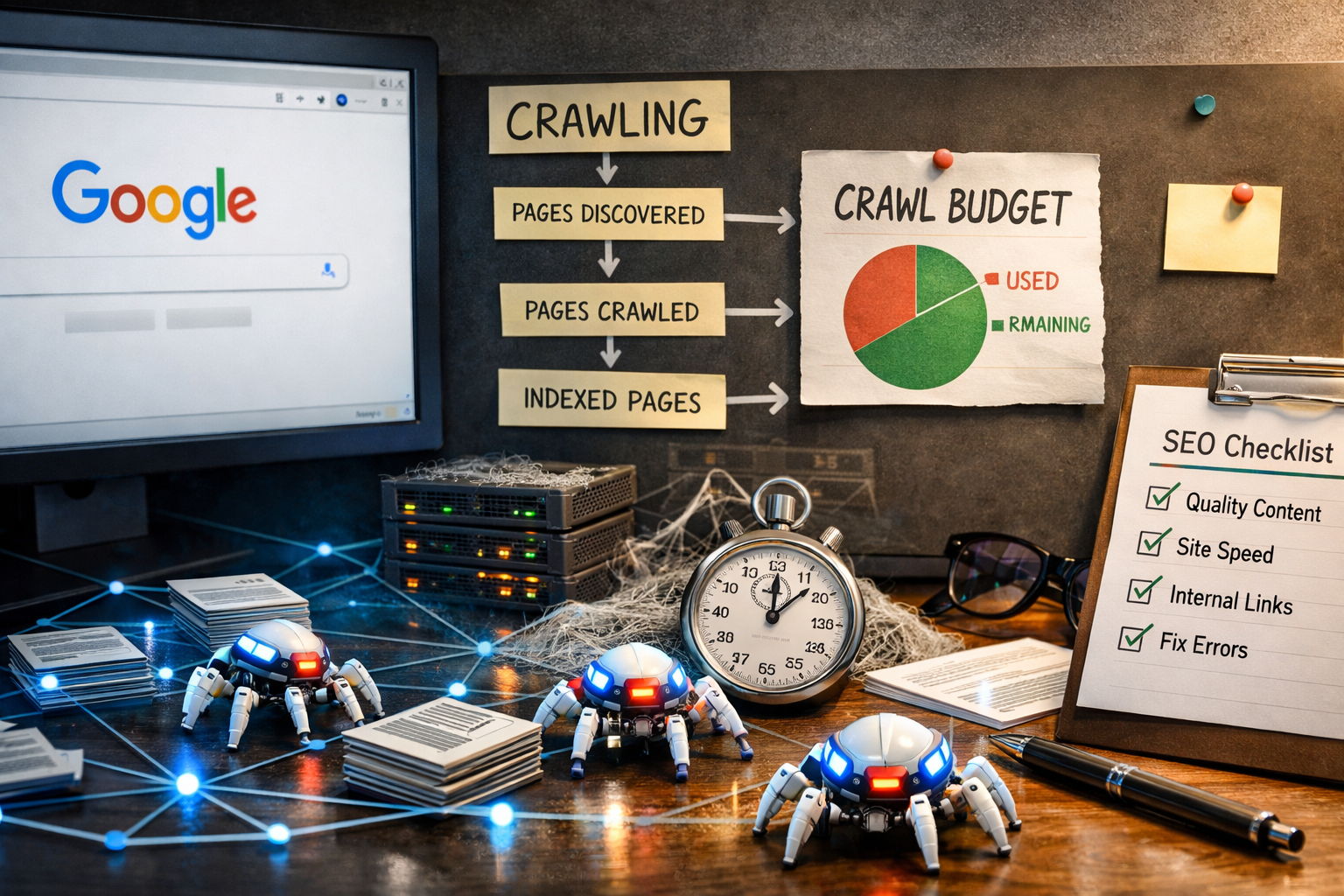

That step is crawling. And the resource Google allocates to it — crawl budget — is something you can influence, deplete, waste, and earn more of. Understanding how it works is especially important for growing sites, large sites, sites that publish frequently, and any site that has recently gone through a redesign or migration.

Here's how Google actually calculates crawl budget, what causes it to get wasted, and what reliably earns you more of it.

What Crawl Budget Actually Is

Crawl budget is the number of URLs Googlebot will crawl on your site within a given timeframe. It is not a fixed number assigned to your domain permanently. It fluctuates based on signals your site sends over time, and it is divided between two core factors that Google has been explicit about: crawl rate limit and crawl demand.

Crawl rate limit is how fast Googlebot is willing to crawl your site without causing server problems. Googlebot is designed not to overwhelm servers. If your site responds slowly, times out frequently, or returns errors under load, Googlebot will back off — crawling less frequently and less deeply to avoid destabilizing your server. Conversely, if your server responds quickly and reliably, Googlebot becomes more willing to crawl aggressively.

Crawl demand is how much Google actually wants to crawl your site based on perceived value. If your pages are popular — earning links, generating search traffic, being referenced across the web — Google will want to recrawl them more often to make sure its index stays current. If your pages are rarely linked to and generate almost no engagement, Googlebot has less reason to prioritize them.

Crawl budget is essentially the product of these two factors operating in tandem. A fast, reliable server increases your crawl rate limit. High-value, well-linked content increases crawl demand. The combination of both is what earns you a healthy crawl budget. Either one lagging will constrain it.

How Google Decides What to Crawl First

Even within your allocated crawl budget, Googlebot doesn't crawl everything with equal priority. It uses a prioritization system based on several factors.

PageRank and internal link signals. Pages with more internal links pointing to them, particularly from authoritative pages on your own site, get crawled more frequently and more reliably than orphaned pages or pages buried deep in your architecture. This is one of the underappreciated reasons internal linking structure matters — not just for user experience or passing equity, but for crawl prioritization.

Freshness signals. Pages that change frequently — news articles, product pages with updating inventory, blog posts that get updated — signal to Googlebot that regular recrawling is worthwhile. Static pages that haven't changed in two years get recrawled less often. This isn't necessarily a problem unless the static page needs to be discovered or re-evaluated for ranking.

Historical crawl data. Google maintains a record of how useful crawling a particular URL has been historically. If a URL has consistently returned 200 status codes, contained indexable content, and been found worth including in the index, it gets treated as a reliable crawl target. If a URL has historically returned errors, redirected repeatedly, or been excluded from the index, it gets deprioritized.

Sitemaps. Submitting an accurate, updated XML sitemap signals to Google which URLs you consider important and want crawled. Sitemaps don't force crawling and they don't guarantee indexing, but they do give Googlebot a prioritized starting point — particularly useful for large sites or new pages that haven't yet accumulated enough internal links to be reliably discovered through crawling alone.

What Wastes Your Crawl Budget

This is where most sites leak. Crawl budget is finite, and anything that directs Googlebot toward URLs that provide no indexing value is a waste of that budget at the expense of pages that actually matter.

Duplicate content and URL parameters. Faceted navigation, session IDs, tracking parameters, sorting options, and filter combinations can generate enormous numbers of unique URLs that all serve essentially the same content. A single product category page might spawn hundreds of parameter-based variants — /products?sort=price, /products?sort=new, /products?color=blue&sort=price — each one a separate crawl target. Googlebot will crawl them. It will find near-duplicate content. It will waste budget it could have spent on your actual content, and it may struggle to identify which version you want indexed.

Soft 404 pages. A soft 404 is a page that returns a 200 OK status code but displays a "no results found" or "page not available" message. From a server standpoint it looks fine. From a crawl standpoint it's a dead end — Googlebot crawled a URL, got a successful response, found nothing of value, and wasted the crawl on it. Large e-commerce sites with retired product pages, expired promotions, or empty search result pages are particularly prone to this.

Redirect chains and loops. A single redirect is fine. A URL that redirects to another URL that redirects to another URL before reaching the destination forces Googlebot to follow multiple hops for a single crawl credit. Redirect chains of three or more are a crawl efficiency problem. Redirect loops — where a URL eventually redirects back to itself — stop Googlebot entirely and waste the credit with nothing to show for it.

Broken internal links returning 404s. Every internal link to a URL that returns a 404 is a wasted crawl. Googlebot follows it, gets an error, gains nothing. At scale — on a large site with years of content and link decay — broken internal links can consume a meaningful share of crawl budget without contributing anything to your index.

Low-value pages that are technically crawlable. Tag archives, author pages on single-author blogs, thin category pages, internal search result pages, paginated archives beyond page two or three — these are pages that exist for structural reasons but have little or no independent search value. If they're crawlable and Googlebot is spending budget on them, that budget is not being spent on your best content.

Blocked resources that create uncertainty. If your CSS, JavaScript, or image files are blocked in robots.txt, Googlebot can't fully render your pages. It may crawl a URL but be unable to understand the page properly, which limits its ability to index it accurately. The crawl credit is spent but the outcome is diminished.

What Actually Earns You More Crawl Budget

This is the part that matters for growing sites. Crawl budget is not static. You can actively earn more of it by consistently signaling to Google that your site is fast, reliable, and valuable.

Server speed and reliability above everything else. This is the most direct lever you have on crawl rate limit. If your server responds to Googlebot's requests in under 200 milliseconds consistently and never times out, Googlebot will be willing to crawl more pages more frequently. If your server is slow, overloaded during crawl sessions, or returns a meaningful rate of server errors, Googlebot interprets that as a signal to back off. Investing in hosting infrastructure, CDN configuration, and server response time optimization is an investment in crawl budget directly.

Earning high-quality backlinks. Crawl demand is tied to perceived importance. Pages that earn links from authoritative external sources signal to Google that they matter — that recrawling them to keep the index current is worthwhile. A page with no external links pointing to it and no internal links of consequence can sit unrecrawled for months. A page that gets linked to from a high-authority domain may be recrawled within days. Link acquisition is as much a crawl strategy as it is a ranking strategy.

Publishing high-quality content consistently. Sites that publish valuable content on a regular basis train Googlebot to return more frequently. The logic is straightforward: if every time Googlebot visits, it finds something new worth indexing, it will start coming back more often. Sites that publish sporadically or rarely update existing content give Googlebot less reason to allocate crawl budget to them.

Cleaning up crawl waste systematically. Every URL you eliminate from Googlebot's crawl queue that wasn't worth crawling in the first place is crawl budget redirected toward URLs that are. Canonicalizing duplicate content, blocking parameter-based URL variants in robots.txt or via canonical tags, noindexing thin or low-value pages, fixing redirect chains, and resolving broken internal links — all of these reduce waste and concentrate your crawl budget on the pages that actually contribute to your rankings.

Strong internal linking architecture. Structuring your site so that your most important pages receive the most internal links — from your homepage, from related content, from navigation elements — signals their priority to Googlebot. Pages that are hard to reach from your homepage (requiring more than three or four clicks) are pages that will be crawled infrequently, if at all. A flat, logical site architecture where important pages are never too many clicks from the root domain is one of the most durable crawl budget investments you can make.

Keeping your sitemap accurate and current. An XML sitemap that includes only indexable, canonical, non-redirecting URLs — and that is updated whenever new content is published — gives Googlebot a reliable signal of what you want crawled and indexed. A sitemap that includes redirected URLs, noindexed pages, or URLs returning errors actively confuses the crawl prioritization process. Audit your sitemap regularly and treat it as a living document, not a set-and-forget technical task.

Reducing crawl errors over time. Google Search Console's Coverage report shows you crawl errors Google has encountered on your site. A sustained pattern of 404 errors, server errors, and crawl anomalies signals instability and depresses crawl rate limit over time. Methodically working through that error list — fixing what can be fixed, redirecting what should be redirected, properly retiring what no longer exists — improves the signal your site sends with every Googlebot visit.

Does Crawl Budget Matter for Every Site?

For small sites — under a few hundred pages, relatively static, no technical debt — crawl budget is rarely a practical constraint. Google will crawl small sites thoroughly enough that budget limitations don't affect how quickly new content gets indexed or how reliably your pages appear in search results.

Crawl budget becomes a meaningful concern when your site is large, when you publish frequently and need new content indexed quickly, when you've recently launched a significant number of new pages, when you've done a migration or redesign, or when you've accumulated meaningful technical debt over years of growth. In any of these situations, understanding and actively managing your crawl budget is the difference between Google's index of your site being current and accurate versus stale and incomplete.

It also matters if you've been doing SEO work — publishing new content, building links, updating pages — and not seeing results as quickly as you'd expect. Before assuming the work isn't effective, check whether the work is actually being crawled.

The Bottom Line

Crawl budget isn't the most glamorous topic in SEO. It doesn't have the visibility of rankings or the immediate feedback loop of paid campaigns. But it is foundational — it is the mechanism by which all of your other SEO work gets seen by Google in the first place.

A site that wastes crawl budget on duplicate URLs, broken links, and thin pages while burying its best content in a deep, poorly-linked architecture is a site that is working against itself. A site that earns crawl budget through speed, quality, and clean architecture — and then directs that budget efficiently toward its most valuable content — is a site that compounds over time.

Fix the foundation. Everything else builds on it.

Thinking About Your Site's SEO Infrastructure?

If you've never done a technical SEO audit, there's a reasonable chance your site has crawl issues you don't know about. Wasted budget, orphaned pages, redirect chains, soft 404s — these things accumulate quietly and suppress results without ever throwing an obvious error.

At Ritner Digital, technical SEO is part of everything we do. We don't just publish content and hope — we build the infrastructure that makes content perform. If you want to know where your site actually stands, start with a conversation.

(703) 420-9757 | hello@ritnerdigital.com | ritnerdigital.com

Ritner Digital is a full-service digital marketing agency serving clients nationwide.

Frequently Asked Questions

How do I actually check my crawl budget in Google Search Console?

There's no single "crawl budget" report in Search Console, but you can piece together a clear picture from a few places. The Coverage report shows you which URLs Google has crawled, indexed, excluded, and errored on. The Crawl Stats report — found under Settings — shows you Googlebot's crawl activity over the last 90 days, including total crawl requests, average response time, and the breakdown of responses by status code. If your average response time is high or your error rate is elevated, those are your first problems to address. The URL Inspection tool lets you check individual URLs to see when they were last crawled and what Googlebot saw when it got there.

My site only has 30 pages. Should I even be thinking about this?

Probably not as a priority. Crawl budget constraints are rarely a practical issue for small sites. Google is perfectly capable of crawling a 30-page site thoroughly and keeping it current without any intervention from you. Where crawl budget starts to matter is when you're publishing new content regularly and noticing delays in indexing, when your site has grown significantly over time and accumulated technical debt, or when you've recently done a migration or restructure. If you're a small site with clean architecture and no significant technical issues, your crawl budget is almost certainly fine. Spend your time on content and links instead.

What's the fastest way to get a new page indexed after publishing?

The most reliable method is submitting the URL directly through the URL Inspection tool in Google Search Console and clicking Request Indexing. This doesn't guarantee immediate indexing but it puts the URL in the priority crawl queue. Beyond that, linking to the new page from an existing page that gets crawled frequently — your homepage, a high-traffic blog post, a main navigation page — gives Googlebot a path to the new URL through its normal crawl cycle. Making sure your XML sitemap is updated and resubmitting it in Search Console also helps. What doesn't work reliably: waiting and hoping. If a page isn't getting internal links and isn't in your sitemap, it can sit unindexed for weeks.

We have a large e-commerce site with faceted navigation. How bad is the crawl budget problem?

It can be significant. Faceted navigation — filters for size, color, price, brand, availability, and combinations thereof — is one of the most common sources of crawl budget waste on e-commerce sites. A category with 200 products and a modest set of filter options can generate tens of thousands of unique URL combinations, nearly all of which serve near-duplicate content with no independent search value. Googlebot will crawl them if they're accessible, and it will do so at the expense of your actual product and category pages. The standard solutions are using canonical tags to point parameter-based URLs back to the clean canonical, blocking crawling of parameter combinations via the URL Parameters tool in Search Console or robots.txt, and ensuring that any faceted URLs you do want indexed have genuinely distinct and valuable content. If you haven't audited your parameter situation, there's a reasonable chance a significant share of your crawl budget is being spent on pages that should never have been crawled in the first place.

How do redirect chains affect crawl budget specifically?

Each hop in a redirect chain costs Googlebot a separate crawl request. A URL that redirects to a second URL that redirects to a third URL before reaching the final destination is three crawl requests to index one page. At scale — and on sites with years of history, multiple migrations, and accumulated redirects — this compounds quickly. Google recommends updating internal links and external links where possible to point directly to the final destination URL rather than relying on redirect chains to get there. Chains of three or more hops are where the crawl cost becomes meaningful. Chains that loop back on themselves stop Googlebot entirely and produce a wasted crawl with no result. Auditing your redirect structure periodically and collapsing chains down to single hops where possible is basic crawl hygiene that most sites neglect.

Can robots.txt actually hurt my crawl budget if set up wrong?

Yes, in two ways. First, if you're blocking URLs that Googlebot needs to crawl in order to render your pages correctly — CSS files, JavaScript files, image directories — Googlebot may crawl the page but be unable to understand it properly. The crawl credit is spent but the indexing outcome is degraded. Second, if your robots.txt is blocking pages that other pages link to internally, Googlebot will still see those links and still attempt to follow them — it just won't crawl the destination. The crawl budget implication is that you've created link paths that go nowhere useful. Robots.txt should be used deliberately, with a clear understanding of what you're blocking and why. Blocking crawl waste like parameter URLs and internal search results is good practice. Blocking resources Googlebot needs to render pages is a common and costly mistake.

We did a site migration six months ago and traffic still hasn't recovered. Could crawl budget be the issue?

It's one of several things worth checking. Migrations are one of the highest-risk events for crawl budget because they often introduce large numbers of redirects, change URL structures that Googlebot has built up a crawl history on, and occasionally leave orphaned old URLs discoverable through backlinks or old sitemaps. If your migration left redirect chains rather than direct 301s, if your new sitemap still contains old URLs, if canonical tags weren't updated to reflect the new URL structure, or if internal links weren't updated and are still pointing to pre-migration URLs — any of these can create crawl inefficiency that slows down the re-indexing of your new site. A post-migration technical audit specifically looking at crawl behavior is usually the right starting point when recovery is slower than expected.

Questions about your site's technical SEO? Talk to us directly — we're happy to take a look.