How AI Marketing Agencies Create 10x More Content Without Sacrificing Quality

Two years ago, a marketing team producing ten pieces of quality content per month was doing well. Today, their AI-assisted competitors are publishing forty, fifty, or more — at comparable or better quality — and compounding the gap every single month.

This isn't hype. It's showing up in the data, in competitive search landscapes, and in the gap between agencies that have built AI-integrated content workflows and those still operating on legacy production models.

93% of marketers use AI to generate content faster, and companies using AI publish 42% more content each month (Daily AI Mail). But the headline number understates what's actually happening at the leading edge. Teams operating at full AI content workflow maturity produce five to ten times more content at 75 to 85% lower cost per article, with compound organic growth that non-AI teams mathematically cannot replicate (Averi).

The question isn't whether AI can scale content production. That's settled. The question is how to scale it without producing the kind of thin, generic, undifferentiated content that damages your brand, fails to rank, and gets ignored by both readers and AI citation systems. This post answers that question directly.

The Scale Problem — and Why It's Now a Competitive Disadvantage

For most of marketing's modern history, content quality and content quantity existed in tension. You could have one or the other at scale, but not both. The best agencies produced carefully crafted, deeply researched content — and published less of it because each piece required significant time and expertise.

That tension has been largely resolved by AI-assisted workflows. But most businesses haven't restructured their processes to take advantage of it, which means they're watching a widening gap open between themselves and competitors who have.

Non-AI blog creation has dropped from 65% of all blog content to just 5% in two years (Shno). The overwhelming majority of content being published today involves AI at some stage of production. The teams not using AI aren't maintaining quality; they're simply producing less content while their competitors flood topics with well-structured, human-reviewed, AI-assisted pieces that rank and get cited.

The median payback on AI tooling investments is now 4.2 months, down from 7.8 months in 2024. For content-heavy teams, payback arrives in under three months (Digital Applied). This is no longer a speculative investment with uncertain returns: it's a demonstrably fast ROI with compounding benefits over time.

What "10x More Content" Actually Means

The 10x figure isn't a marketing exaggeration. It's a description of what happens when AI handles the tasks it's genuinely good at — and humans focus on the tasks only they can do.

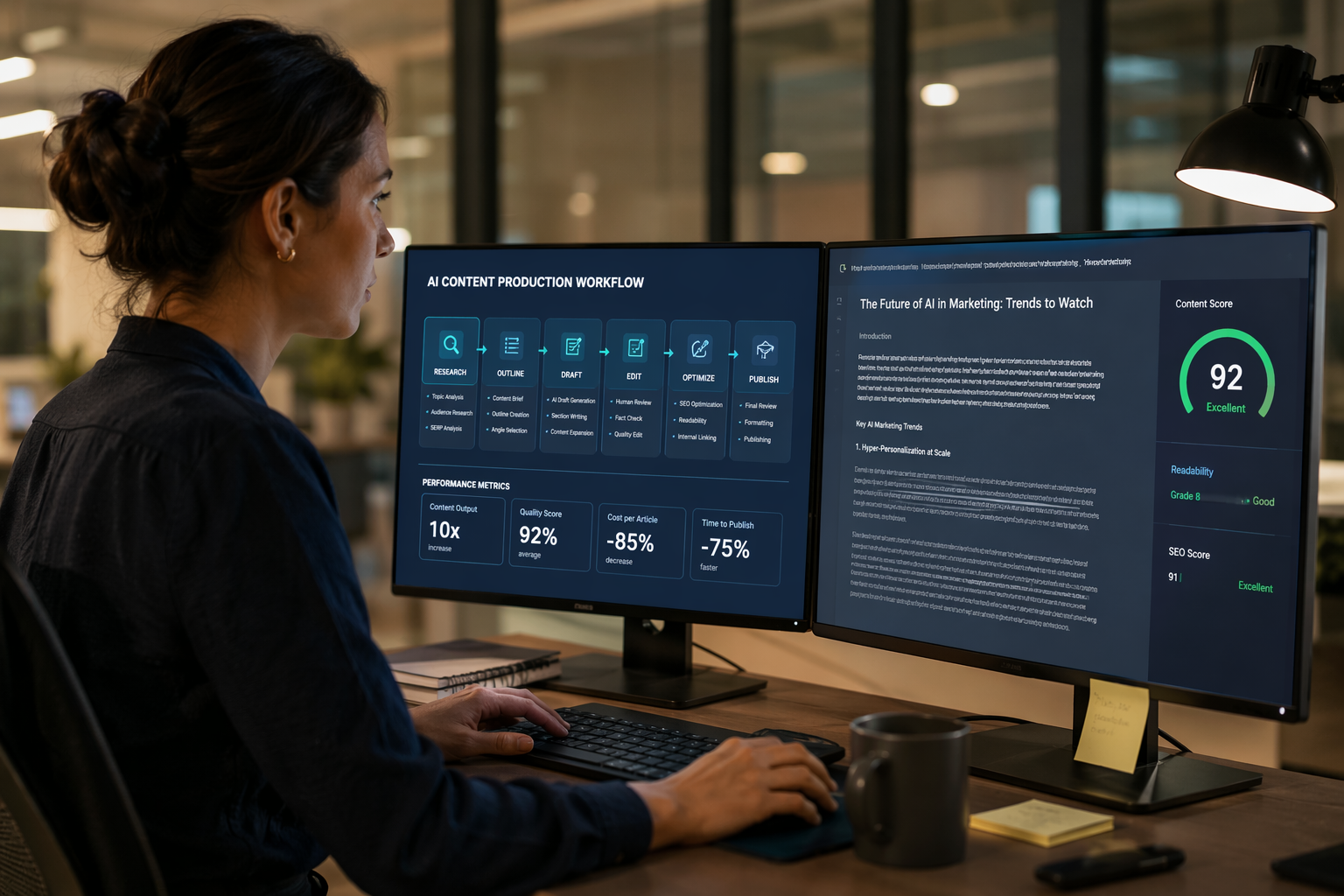

Here's the distribution of labor in a mature AI content workflow:

What AI handles well: Research aggregation across multiple sources, first-draft generation from structured briefs, metadata writing including title tags and meta descriptions, social copy creation for multiple platforms, content reformatting across formats, basic fact compilation, outline generation, and FAQ drafting.

What humans must retain: Strategic angle development, brand voice editing and consistency, original insight and firsthand expertise, fact-checking and source verification, client-specific context, ethical judgment calls, final quality approval, and relationship-driven storytelling.

The efficiency gains come from AI handling research aggregation, first-draft generation, metadata writing, and social copy creation, while humans handle fact-checking, brand voice editing, strategic angle development, and final approval (Averi).

When this division of labor is implemented correctly, a writer who previously produced two or three fully researched, well-structured posts per week can produce eight to twelve — because the mechanical work that consumed 60 to 70% of their time has been delegated to AI, leaving them to focus on the 30 to 40% that actually requires human judgment and expertise.

Why Most AI Content Fails — and How Leading Agencies Avoid It

The agencies producing volume without quality are making a predictable set of mistakes. Understanding them is what separates a 10x content strategy from a ten-times-more-garbage strategy.

Mistake 1: Skipping human editorial oversight. Teams trying to skip human review stages to further reduce costs typically see quality degradation that erodes performance metrics within three to six months (Averi). The efficiency gains are real. The human oversight is non-negotiable. These are not in contradiction: AI handles the production work, humans maintain quality control.

Mistake 2: Publishing generic content that adds nothing new. The greatest risk of AI-assisted content at scale is producing well-structured, technically correct content that says exactly what every other piece on the topic says. 89% of top-ranking AI-assisted content includes human editorial signatures: named authors, first-person perspective, and original data (Averi). AI can synthesize existing information. Only humans can add the firsthand experience, proprietary insight, and original perspective that makes content worth reading and worth citing.

Mistake 3: Losing brand voice. Content that sounds like every other AI-generated piece in your category is a brand liability. The solution is brand context training: feeding AI tools your brand's voice guidelines, positioning, ideal customer profiles, and competitive differentiation before they generate a single word. 73% of the strongest-performing approaches combine AI with human writing (Averi). The AI learns your voice framework; humans enforce it in editing.

Mistake 4: Ignoring the quality signals that AI search now requires. Scaling content that isn't structured for AI citation is scaling in the wrong direction. 78% of top-ranking AI-assisted content uses question-based H2 headings, 83% includes 40–60 word direct answer blocks after each heading, 91% contains five or more hyperlinked statistics from external sources, and 67% includes dedicated FAQ sections (Averi). These aren't nice-to-haves. They're the structural characteristics of content that earns citations across ChatGPT, Perplexity, and Google AI Overviews.
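The four structural signals above are concrete enough to check automatically before a draft goes to an editor. The following is a minimal Python sketch, not a tool any of the cited sources describes. It assumes drafts are written in Markdown, that H2 headings start with "## ", and that each paragraph sits on a single line. The function name audit_structure and the "link text contains a digit" test for a hyperlinked statistic are illustrative assumptions.

```python
import re

# Assumed conventions (not from the article): drafts are Markdown, H2s start
# with "## ", and each paragraph sits on a single line.
LINKED_STAT = re.compile(r"\[[^\]]*\d[^\]]*\]\(https?://[^)\s]+\)")
FAQ_HEADING = re.compile(r"faq|frequently asked questions", re.IGNORECASE)


def audit_structure(markdown_text: str) -> dict:
    """Count the structural signals in a Markdown draft."""
    lines = markdown_text.splitlines()
    h2_positions = [i for i, line in enumerate(lines) if line.startswith("## ")]
    headings = [lines[i][3:].strip() for i in h2_positions]

    # Question-based H2: the heading text ends with a question mark.
    question_h2_count = sum(1 for h in headings if h.endswith("?"))

    # Direct answer block: the first non-empty line after the H2 is a
    # paragraph (not another heading) of 40 to 60 words.
    answer_block_count = 0
    for i in h2_positions:
        first = next((l for l in lines[i + 1:] if l.strip()), "")
        if not first.startswith("#") and 40 <= len(first.split()) <= 60:
            answer_block_count += 1

    return {
        "h2_count": len(headings),
        "question_h2_count": question_h2_count,
        "answer_block_count": answer_block_count,
        # Proxy for a hyperlinked statistic: an external link whose anchor
        # text contains at least one digit.
        "linked_stat_count": len(LINKED_STAT.findall(markdown_text)),
        "has_faq_section": any(FAQ_HEADING.search(h) for h in headings),
    }
```

A check like this only reports counts. Whether a heading is the question the audience is actually asking, or whether a statistic is accurate, still needs a human editor.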

The Workflow Architecture That Makes It Work

The agencies producing genuinely high-quality content at scale aren't just using AI tools ad hoc — they've built structured workflows where every stage has a defined role for AI and a defined role for humans.

Stage 1: Strategic brief development. This is human work. A strategist identifies the target query, defines the audience segment, determines the unique angle, maps the content to the buyer journey stage, and establishes what original insight or data the piece will contain. AI can suggest topics, but the strategic framing requires human judgment about what will differentiate the piece.

Stage 2: Research and source compilation. AI handles the aggregation — pulling relevant studies, statistics, and third-party sources across the web. Humans select which sources to use, verify their credibility, and identify gaps the AI missed. This stage alone typically takes an hour of human time in a traditional workflow and ten minutes in an AI-assisted one.

Stage 3: Outline and structure. AI generates a structural outline based on the brief. Humans review and revise it — checking that the angle is unique, that the structure matches the intended reader journey, and that the headings are phrased as the questions the target audience is actually asking.

Stage 4: First draft generation. AI generates the draft. This is where most agencies think the work ends. It's where the work actually begins.

Stage 5: Human editorial layer. This is the stage that separates quality AI content from generic AI content. A human editor rewrites the introduction to add genuine voice, injects firsthand experience and original insight, verifies all statistics and adds proper attribution, adjusts the tone to match the brand, adds the answer capsules and FAQ sections that AI search requires, and applies final quality judgment. 86% of marketers say AI saves them more than an hour daily on creative tasks (Adobe). That saved time is reinvested into this editorial layer, not eliminated.

Stage 6: SEO and AI search optimization layer. A separate check ensures the content meets the structural requirements for both traditional rankings and AI citation: answer capsules beneath headings, question-phrased H2s, FAQ schema, internal linking, proper schema markup, and a visible author attribution with credentials.
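For the FAQ schema item in this stage, one common approach is to emit schema.org FAQPage markup as JSON-LD alongside the visible FAQ. The sketch below shows the shape of that markup in Python. The function name faq_jsonld is a hypothetical helper and the question and answer in the usage line are placeholders. How any particular search engine or AI system treats the markup is outside what this sketch shows.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Render (question, answer) pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


if __name__ == "__main__":
    # Placeholder content; real pairs would come from the published FAQ.
    print(faq_jsonld([("Placeholder question?", "Placeholder answer text.")]))
```

The output is typically embedded in the page inside a script tag of type application/ld+json, and the text should match the FAQ that readers see on the page.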

Stage 7: Metadata and distribution assets. AI generates the title tag, meta description, social copy for each platform, and email subject line variations. A human reviews and refines them for brand voice and click intent. This stage, which would take 30 minutes manually, takes five.

The Quality Signals That Separate Elite AI Content from Average AI Content

Volume means nothing if the content isn't performing. The agencies genuinely achieving 10x production with quality outcomes share a set of quality signals that their less sophisticated competitors miss.

Original data. LLMs disproportionately cite content that contains information unavailable elsewhere: original research, proprietary data, firsthand case studies, and expert interviews give models a reason to reference your content specifically (Hubstic). Agencies building AI-scaled content programs that don't include original research are building on sand. Even a small internal survey, an analysis of client results, or a proprietary benchmark can transform a well-written but generic post into a citation anchor that earns links and AI references for years.

Named expertise. 89% of top-ranking AI-assisted content includes human editorial signatures: named authors, first-person perspective, and original insight (Averi). Anonymous content, regardless of how well it's structured, underperforms named expert content across both traditional and AI search. Every piece needs an author, every author needs a bio, every bio needs credentials.

Semantic depth, not just topical breadth. It is no longer enough to target individual keywords. The entire topic must be covered with expert-level nuance and clear logical connections to ensure AI recognizes your site as the primary authoritative source (Shiwaforce). Ten shallow pieces on a topic are worth less than two deep ones that cover every angle a reader could need.

Freshness infrastructure. Content updated within 30 days receives 3.2 times more citations than older material (Position Digital). A 10x content program that doesn't include a quarterly refresh cycle for existing content will see its returns decay over time. AI-assisted content refresh (updating statistics, adding new context, revising examples) is one of the highest-ROI uses of AI in a mature content program.
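A refresh cycle needs a way to find what is overdue. Here is a minimal Python sketch that flags pieces whose last update is older than a chosen window. The 90-day default stands in for "quarterly" and is an assumption, as are the function name due_for_refresh and the idea of keying pieces by slug. Real programs would pull these dates from a CMS.

```python
from datetime import date, timedelta


def due_for_refresh(
    last_updated: dict[str, date],
    today: date,
    max_age_days: int = 90,  # assumed stand-in for a quarterly cycle
) -> list[str]:
    """Return slugs last updated before the cutoff, oldest first."""
    cutoff = today - timedelta(days=max_age_days)
    stale = [slug for slug, updated in last_updated.items() if updated < cutoff]
    return sorted(stale, key=lambda slug: last_updated[slug])
```

Sorting oldest first gives editors a simple queue. Setting max_age_days to 30 would instead target the 30-day window mentioned in the citation statistic.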

The ROI Picture

68% of businesses report increased content marketing ROI from AI implementation (Averi). 71% of marketing leaders who adopted AI tools in 2024–2025 report positive ROI within six months (Digital Applied).

But the ROI calculation for AI-assisted content extends beyond direct content metrics. AI saves marketers 11 to 13 hours per week, depending on the study (Daily AI Mail), time that can be reinvested into strategy, client relationships, and the creative work that drives differentiated results. Marketers are 44% more productive, saving an average of 11 hours per week thanks to AI (Adobe).

For agencies specifically, the model shift is significant. 38% of US digital agencies have moved at least one service line from hourly billing to retainer-plus-performance or outcome-based pricing in 2026 (Digital Applied). When AI reduces production time by 60 to 70%, the agencies that restructure their pricing around value delivered, not hours logged, capture the margin expansion that AI makes possible.

The businesses failing to capture these gains are typically the ones still treating AI as a tool for individual writers to use occasionally, rather than a workflow infrastructure that transforms the entire production model.

What This Means If You're Not an Agency

The principles that allow marketing agencies to produce 10x more content apply equally to in-house marketing teams. The same workflow architecture — strategic brief, AI-assisted research and drafting, human editorial layer, AI-assisted distribution — scales down to a team of two as effectively as it scales up to a team of twenty.

67% of small and medium-sized businesses use AI in marketing, showing that AI is no longer limited to large enterprise teams (Shno). The competitive pressure to adopt AI-assisted content workflows isn't coming only from large agency competitors. It's coming from equally small businesses in your niche that have figured out how to produce more, publish more frequently, and build topical authority faster than a traditionally operated team can match.

Only 5% of teams rely mostly on AI without human oversight (Averi), which confirms that the businesses winning with AI aren't replacing human judgment. They're amplifying it.

The Compounding Advantage

The most important thing to understand about AI-assisted content at scale is that the benefits compound. More content means more keyword coverage, more internal linking opportunities, more topical authority signals, and more chances to be cited in AI search. Each piece of content makes every other piece marginally more valuable through internal link density, topical cluster completion, and entity authority signals.

Teams operating at full (Level 3) AI content workflow maturity produce five to ten times more content at 75 to 85% lower cost per article, with compound organic growth that non-AI (Level 1) teams mathematically cannot replicate (Averi).

That "mathematically cannot replicate" framing is not an exaggeration. When a competitor is publishing at ten times your velocity, building topical coverage at ten times your rate, and compounding domain authority at ten times your speed — there is no traditional content strategy that closes the gap. The only response is to build an equivalent production infrastructure.

The businesses building that infrastructure now are establishing advantages that will be very hard to displace two or three years from now.

Ready to Build a Content Program That Scales Without Sacrificing Quality?

At Ritner Digital, we build AI-assisted content programs that combine the production velocity of AI workflows with the strategic depth, brand voice, and original insight that makes content actually perform — in traditional search, in AI citations, and with real human readers.

If your content program is producing less than it should — or producing more than it should with less quality than it needs — we can help.

Contact Ritner Digital today to schedule a free content strategy session and find out what a properly structured AI-assisted content program looks like for your business.

Sources: Averi, Digital Applied, Adobe, Daily AI Mail, Typeface, All About AI, HubSpot State of Marketing 2026, McKinsey

Frequently Asked Questions

Can AI really produce content at 10x the volume without the quality dropping?

Yes, with the right workflow architecture. The key distinction is between teams using AI as a shortcut to skip quality steps versus teams using AI to eliminate the mechanical work while reinvesting that saved time into the editorial steps that actually determine quality. Teams that skip human review to maximize volume typically see performance metrics erode within three to six months. Teams that use AI for research aggregation, first-draft generation, and metadata creation, then apply a rigorous human editorial layer, consistently produce more content at comparable or higher quality than fully manual production. The 10x output figure reflects the latter approach done correctly, not AI publishing without oversight.

What does the human editorial layer actually involve in an AI-assisted content workflow?

It's the stage where a human writer or editor takes the AI-generated draft and transforms it into something genuinely worth reading. In practice this means: rewriting the introduction to add real voice and a compelling angle, injecting firsthand experience and original insight the AI cannot have, verifying every statistic and adding proper source attribution, adjusting tone to match the brand's specific voice and audience, adding the answer capsules and FAQ sections that AI search requires, and making the final quality judgment on whether the piece is ready to publish. This stage typically takes 30 to 60 minutes per piece in a mature workflow — compared to the three to five hours a fully manual piece would require. The time savings come from not starting from a blank page, not from removing editorial judgment.

How do you prevent AI content from sounding generic and indistinguishable from competitors?

Three practices make the biggest difference. First, brand context training — feeding your AI tools a comprehensive brand voice document that covers your tone, positioning, ideal customer profile, key differentiators, and language preferences before generating any content. Second, original data and firsthand insight — every piece should contain something AI cannot synthesize from existing sources, whether that's a client case study, an internal benchmark, a proprietary framework, or a named expert perspective. Third, human editorial rewriting — the sections that most define voice and differentiation are the introduction, the key insight moments, and the conclusion, and these should always be substantially rewritten by a human rather than lightly edited.

Does AI-generated content actually rank on Google and get cited by AI search platforms?

Yes, when it meets the same quality standards as any other content. Google has consistently stated that its focus is on content quality rather than how content is produced. The structural characteristics that predict strong performance — question-based headings, direct answer blocks, external source citations, named author attribution, original data, comprehensive topic coverage — apply equally to AI-assisted and human-written content. In fact, 65% of companies report that AI-generated content improved their SEO performance. The content that fails to rank is thin, generic, unsourced AI output published without human review — not AI-assisted content that meets professional editorial standards and includes the structural elements that both traditional and AI search reward.

What tasks should always remain with humans in an AI content workflow?

Strategic angle development — deciding what makes a piece worth writing and what unique perspective it will take — is fundamentally human work because it requires judgment about differentiation and audience psychology that AI cannot replicate reliably. Original insight and firsthand experience cannot come from AI because AI has no experience. Fact-checking and source verification must be human because AI systems hallucinate and misattribute with enough frequency to make unsupervised publishing a liability. Brand voice calibration requires human judgment because brand voice is ultimately about relationships and trust, not pattern matching. And final quality approval needs a human who can assess whether a piece actually serves the reader's needs — not just whether it passes a structural checklist.

How much time does AI actually save in a content production workflow?

Studies consistently report that AI saves marketers 11 to 13 hours per week across all tasks, with content production representing the largest share of those savings. At the piece level, a fully researched 2,000-word blog post that previously took five to seven hours of writer time typically takes one to two hours in a mature AI-assisted workflow — a 70 to 80% time reduction on the production stages, with the human editorial layer adding back 30 to 60 minutes. The time savings are largest at the research aggregation and first-draft stages, which are the most mechanical parts of content production. The stages that require strategic judgment, original insight, and brand voice calibration see smaller time savings but remain essential.
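The per-piece figures above can be checked with simple arithmetic. This short Python sketch computes the time reduction and the implied output multiplier for a given before and after. The function names are illustrative, and it assumes a writer's total hours stay constant. Pairing the low ends of the two ranges (five hours down to one) and the high ends (seven hours down to two) gives reductions of 80% and roughly 71%.

```python
def time_reduction(before_hours: float, after_hours: float) -> float:
    """Fraction of per-piece time saved, e.g. 0.8 means 80% less time."""
    return 1 - after_hours / before_hours


def output_multiplier(before_hours: float, after_hours: float) -> float:
    """How many pieces fit in the time one piece used to take."""
    return before_hours / after_hours


if __name__ == "__main__":
    for before, after in [(5, 1), (7, 2)]:
        print(
            f"{before}h -> {after}h: "
            f"{time_reduction(before, after):.0%} less time, "
            f"{output_multiplier(before, after):.1f}x output"
        )
```

Under those pairings, the same writer hours cover between 3.5 and 5 times as many pieces. Other pairings within the stated ranges give different results.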

Should we be worried about Google penalizing AI-generated content?

No — Google's official position has been consistent and clear: content quality is what matters, not how content was produced. What Google penalizes is low-quality content that doesn't serve readers, regardless of whether it was written by a human or generated by AI. The real risk with AI content isn't a Google penalty — it's publishing content that is technically correct but adds no genuine value, which will underperform not because of how it was made but because readers don't find it useful and AI citation systems don't find it citable. The solution is the same whether you're worried about Google or AI search: editorial standards, original insight, verified facts, named authorship, and a genuine commitment to serving the reader's actual needs.

How do we measure whether our AI content program is actually delivering ROI?

Track four metrics that traditional content measurement often misses. Content velocity — pieces published per team member per month — establishes your production baseline and lets you measure the efficiency gain directly. Cost per content unit — total content production cost divided by pieces published — captures the economic impact. Topical coverage rate — the percentage of your target query set with published content — measures whether you're actually closing the coverage gaps that compound into authority over time. And AI citation share — how often your brand appears in ChatGPT, Perplexity, and Google AI Overview responses for your target queries — is the emerging metric that connects content volume and quality to the AI search visibility that increasingly determines how your customers find you. Only 19% of content teams currently track AI-specific KPIs, which means the 81% that don't are making optimization decisions without the data they need.
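The four metrics above reduce to simple ratios once the inputs are collected. The Python sketch below shows one way to compute them for a reporting period. The ContentPeriod class and its field names are hypothetical, and it assumes AI citation share is measured by sampling AI answers for target queries and counting those that mention the brand, which is one possible method rather than a standard.

```python
from dataclasses import dataclass


@dataclass
class ContentPeriod:
    """Inputs for one reporting period (all field names are illustrative)."""
    pieces_published: int
    team_members: int
    total_cost: float
    target_queries: int
    queries_with_content: int
    ai_answers_sampled: int
    ai_answers_citing_brand: int


def content_velocity(p: ContentPeriod) -> float:
    """Pieces published per team member in the period."""
    return p.pieces_published / p.team_members


def cost_per_unit(p: ContentPeriod) -> float:
    """Total production cost divided by pieces published."""
    return p.total_cost / p.pieces_published


def topical_coverage_rate(p: ContentPeriod) -> float:
    """Share of the target query set that has published content."""
    return p.queries_with_content / p.target_queries


def ai_citation_share(p: ContentPeriod) -> float:
    """Share of sampled AI answers that cite the brand."""
    return p.ai_answers_citing_brand / p.ai_answers_sampled
```

Tracked month over month, the first two ratios show the efficiency change and the last two show whether the added volume is turning into coverage and visibility.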