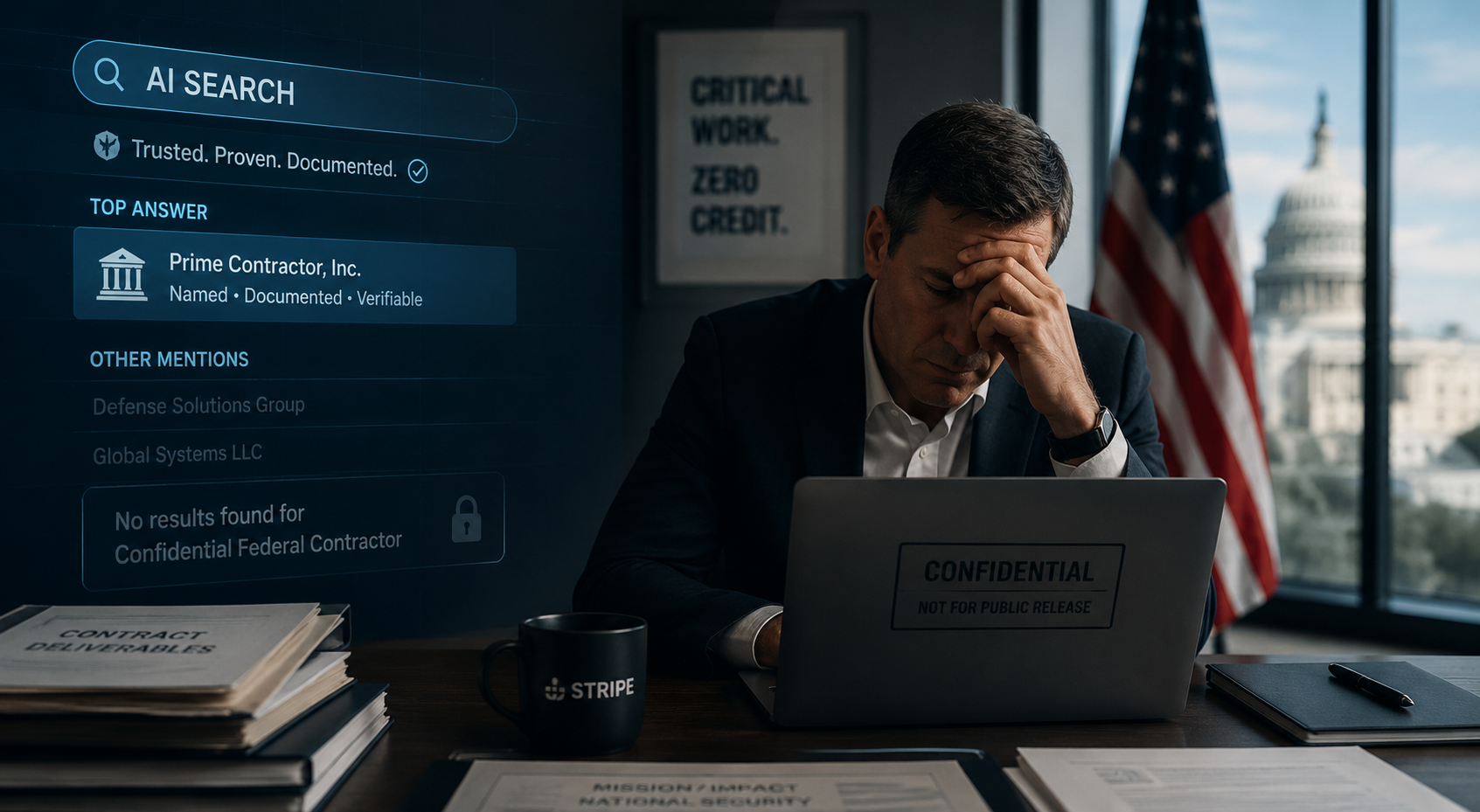

The AI Search Penalty That's Quietly Strangling Federal Contractors

There is a rule in AI search that nobody printed in a manual but everyone who studies it has figured out: specificity wins.

Not vague expertise claims. Not category authority dressed up in confident language. Specific names. Specific outcomes. Specific numbers attached to a specific client.

ChatGPT, Perplexity, Google AI Overviews — the systems powering the next generation of search are trained to surface content that makes confident, verifiable claims. A law firm that says it "helps clients navigate complex litigation" loses to a law firm that says it "reduced client liability exposure by 42% across 14 corporate defense cases in 2024." The detail is the signal. The name is the proof.

Federal contractors are among the most accomplished operators in the American economy. They build intelligence systems, manage critical infrastructure, run IT modernization programs for agencies that touch every American life. Many of them have results that would make a commercial company's marketing department weep with envy.

And almost none of them can talk about it publicly.

That structural silence is costing them in ways that are only beginning to come into focus — because the dominant search channel of 2026 is not the one that rewards quiet excellence. It's the one that rewards documented proof.

How AI Search Actually Decides Who Gets Cited

To understand why this matters, you have to understand what AI search engines are actually doing when they generate a response to a query like "best cybersecurity firms for federal agencies" or "top IT modernization contractors."

These systems are not running a traditional keyword match. They are synthesizing answers from sources they have determined to be authoritative — and the single strongest signal of authority, across every major AI platform, is the presence of specific, grounded, verifiable claims backed by data.

Research into AI citation behavior makes this unmistakably clear. Case studies, unique datasets, and first-hand findings give content an edge because competitors cannot duplicate them; even modest original research noticeably boosts citation potential (SEOPress). That finding recurs across the generative engine optimization (GEO) studies published in the last eighteen months.

The numbers are stark. Comparative listicles ("Best X for Y" formats) account for 32.5% of all AI citations, making them the highest-performing content format. Content of 2,900 or more words averages 60% more citations than content under 800 words (Virayo). AI systems favor depth, specificity, and social proof.

Named authors, real bios, relevant credentials, publication dates, references to firsthand experience, case studies, and testimonials all function as trust signals, and when the system needs to choose between two pages that say similar things, visible trust signals are often decisive (ALM Corp).

Here is where the government contracting community runs directly into a wall.

The GovCon Credibility Paradox

Federal contractors are, by almost any objective measure, operating at some of the highest levels of complexity and consequence in the private sector. The work is sophisticated, the stakes are real, and the track records are — in many cases — extraordinary.

But the nature of that work creates a credibility paradox that has no clean commercial analogue.

Marketing for government contractors is far from straightforward. Unlike commercial markets, government contracting demands a specialized approach that accounts for strict regulations, complex procurement systems, and a highly competitive environment. Each government buyer has unique needs, operates under tight restrictions, and requires absolute trust in your capabilities (Cross & Crown).

That trust requirement cuts both ways. The same security framework that makes a contractor trustworthy to a federal agency makes it nearly impossible for that contractor to demonstrate trustworthiness to the outside world.

The problem operates on several layers simultaneously.

Layer one: classified and sensitive program work. The National Industrial Security Program Operating Manual (NISPOM) establishes requirements for the protection of classified information disclosed to or developed by contractors to prevent unauthorized disclosure (Federal Register). For contractors working on classified programs, the work itself (the agency name, the contract scope, the outcomes achieved) cannot be publicly discussed. The results don't exist in any form accessible to an AI search engine.

Layer two: controlled unclassified information. Even for unclassified work, operations security (OPSEC) requirements create real constraints. Typical contract language states that contractor personnel shall not disclose to unauthorized third parties, or post to unofficial sites (including social networking sites), any images, data, or information related to covered government information, and that non-disclosure requirements remain in effect for the duration of the awarded contract (NAVFAC Northwest). That covers a significant portion of what a contractor might otherwise want to publish as a case study.

Layer three: contractual NDAs and Federal Acquisition Regulation (FAR) compliance. When entering into contracts with federal agencies, businesses must understand that a government NDA is not the same as a typical private-sector non-disclosure agreement. The restrictions on what can be disclosed, to whom, and under what circumstances are more extensive than anything a commercial client NDA would typically require, and they often outlast the contract itself (UpCounsel).

The result is that a contractor who spent three years modernizing the IT infrastructure of a major federal agency — delivering measurable efficiency gains, reducing system downtime, and saving taxpayers millions of dollars — may be completely unable to reference any of that publicly. No client name. No specific outcomes. No dollar figures. No timeline. A ghost case study, or more often, no case study at all.

What AI Search Engines See When They Look at a Federal Contractor's Website

Put yourself inside the retrieval logic for a moment.

An AI system crawling a defense or IT services contractor's website encounters a capabilities page that lists service areas in careful, generic language. A past performance section that references "federal agency clients" and "classified programs." A leadership team with impressive credentials but no named client wins attached to them. Maybe a blog with thought leadership content — industry perspectives, regulatory updates, capability overviews — but nothing with a number in it that traces to a specific outcome for a named organization.

Now that same AI looks at a commercial IT firm's website. There is a case study page with twelve entries. Each one has a client name, a challenge statement, a specific solution, and a results section with hard metrics: 34% reduction in infrastructure costs, 99.97% uptime achieved, implementation completed 18 days ahead of schedule. The blog has posts citing proprietary research. The team page links to named authors with verified credentials.

A 2026 AI SEO report analyzing 1,000 enterprise brands found that 62% of those brands were invisible to generative AI models, even though 94% of those same companies invest heavily in traditional SEO (ALM Corp). For federal contractors, the invisibility rate is likely higher, and the cause is structural, not strategic.

Brands referenced positively across four or more independent sources are 2.8x more likely to appear in ChatGPT responses than brands mentioned only on their own website (Issuewire). Federal contractors often cannot generate those independent citations. Their clients cannot publicly confirm the engagement. Their agency partners cannot post testimonials. Third-party validation, the lifeblood of AI citation, is often legally off the table.

The Scale of the Problem

To appreciate how disproportionate this disadvantage is, consider the scale of the government contracting market.

The government contracting market, with federal spending on contracts projected to grow toward $100 billion in the near term, is a massive but highly competitive environment. There are approximately 12,500 cleared contractor entities under active oversight by the Defense Counterintelligence and Security Agency alone. Across all of SAM.gov, hundreds of thousands of businesses are registered to compete for federal work (Cross & Crown).

These are not small operators. Many of them are sophisticated, well-resourced firms that have been winning competitive procurements for decades. They have demonstrated past performance records on file with federal agencies — detailed, scored assessments of exactly how well they did their jobs. Those records exist in CPARS, the federal contractor performance database, and they are not publicly searchable in a form that AI engines can index.

The credentials are real. The track record is real. The proof is buried in access-restricted systems that no web crawler has ever seen.

Meanwhile, brands in the top 25% for web mentions get 10x more AI visibility than others, and the top 50 brands receive about 28.9% of all mentions in AI Overviews (Superlines). The companies accumulating those web mentions are largely commercial enterprises with the freedom to publish, promote, and publicize their client work.

Why This Matters Now More Than Ever

The federal contractor marketing disadvantage is not new. The inability to publicly discuss classified client work has always created challenges for business development and reputation building. What has changed is the search channel where that disadvantage now hits hardest.

Traditional SEO, for all its complexity, at least rewarded technical optimization, domain authority, and backlink profiles — things a contractor could build without publishing classified outcomes. You could rank for "federal IT services" by building a technically sound website and earning links from industry publications. The visibility was imperfect, but it was achievable.

AI search is structurally different. It has a strong recency bias: available data suggest that once content is more than three months old, AI citations to that page drop off sharply (LLMrefs). AI systems also learn about your brand from across the entire web, not just your own site, and they continuously re-evaluate who is worth citing based on a web of mentions, citations, and documented claims. If that web doesn't exist for your firm, recency doesn't help you; you're invisible regardless of how fresh your content is.

Organic click-through rate (CTR) has dropped 61% for queries where a Google AI Overview appears. But when your brand is cited inside that AI Overview, CTR is 35% higher than for traditional organic results (Frase). The stakes of being cited have never been higher, and the mechanism for earning those citations has never been more dependent on exactly the kind of public documentation that federal contractors cannot produce.

AI search traffic grew dramatically through 2025 into 2026, and Google's AI Overviews now appear on 48% of all search results pages, reaching more than 2 billion monthly users (Position Digital). This is not a future problem. It is a present one.

The Ghost Case Study Problem

There is a term used informally in the federal contracting marketing community: the ghost case study. It refers to the work that was genuinely excellent, genuinely impactful, and genuinely unpublishable. The contractor knows it happened. The agency knows it happened. The CPARS record reflects it. And no one outside that closed loop will ever be able to verify it.

Ghost case studies are not a failure of the contractor. They are the expected output of a system designed to protect national security and sensitive government operations. The security requirements that generate them are legitimate and necessary.

But in the current AI search environment, ghost case studies are the equivalent of a star athlete whose best performances were played in a stadium with no cameras, no press, no scoreboard, and no surviving audience. The performance happened. The result was real. The record doesn't exist in any form the outside world can see.

Ungrounded claims reduce citation likelihood and increase the chance an answer engine paraphrases you without attribution. Content should include primary sources, quote exact metrics, and state limitations (Geol). Federal contractors are often unable to ground their claims in any publicly verifiable way, not because the claims aren't true, but because the verification material is classified or contractually protected.

The practical consequence: when a procurement officer, a prime contractor looking for a teaming partner, or an agency official's staff researcher asks an AI search engine who the leading contractors are in a given capability area, the answer is disproportionately shaped by whoever published the most detailed, named, numbered case studies. Those are almost exclusively commercial or quasi-commercial firms — not the cleared contractors who may have the strongest actual track records.

What Actually Can Be Done

The problem is real, but it is not without solutions. Working within the constraints of federal security requirements, there are legitimate and effective approaches to building AI search visibility without compromising classified or sensitive information.

1. Publish what you can, with everything you have.

Not all government contract work is classified. Not all clients are restricted. Many contractors have commercial clients, state and local government clients, or federal work in non-sensitive areas where full case studies are permissible. Those case studies should be published with maximum specificity — real names, real numbers, real timelines. One well-documented public case study does more for AI visibility than twenty pages of capability descriptions.

2. Build authority through process documentation, not just outcome documentation.

If you cannot run large-scale studies, run smaller but honest experiments. Publish before-and-after implementation notes. Document what changed, what did not, and what you learned. Originality does not need to mean huge volume; it needs to mean non-commodity information (ALM Corp). A federal contractor can publish detailed methodology content (how they approach a particular type of problem, what frameworks they use, how they structure program management) without revealing anything about specific clients or programs.

3. Use declassified and publicly available outcome signals where they exist.

Contract award data is public on USAspending.gov and SAM.gov. A contractor can reference award values, contract vehicles, and agency relationships that are already in the public record. A statement such as "We hold a $47M IDIQ contract vehicle with the Department of Homeland Security for cybersecurity services" is publicly verifiable and specific. That kind of claim signals credibility to AI systems without disclosing anything that isn't already public.
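One way to keep such claims grounded is to generate them only from records that already carry a public source. The following is a minimal Python sketch under stated assumptions: the `PublicAward` fields, the sample values, and the example.com URL are hypothetical placeholders, not real award data and not the schema of USAspending.gov or SAM.gov.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PublicAward:
    """A contract award as it appears in the public record (hypothetical fields)."""
    agency: str
    vehicle_type: str          # e.g. "IDIQ"
    ceiling_usd: int           # award ceiling in whole dollars
    scope: str                 # short public description of the work
    source_url: Optional[str]  # where a reader can verify the award


def format_usd(amount: int) -> str:
    """Render a dollar amount in the short form marketing copy usually uses."""
    if amount >= 1_000_000_000:
        return f"${amount / 1_000_000_000:.1f}B"
    if amount >= 1_000_000:
        return f"${amount / 1_000_000:.0f}M"
    return f"${amount:,}"


def grounded_claim(award: PublicAward) -> str:
    """Build a claim sentence, refusing if there is no public source to cite."""
    if not award.source_url:
        raise ValueError("No public source: do not publish this claim.")
    return (
        f"We hold a {format_usd(award.ceiling_usd)} {award.vehicle_type} contract "
        f"vehicle with the {award.agency} for {award.scope} "
        f"(source: {award.source_url})."
    )


# Placeholder record mirroring the example sentence above; not a real award.
example = PublicAward(
    agency="Department of Homeland Security",
    vehicle_type="IDIQ",
    ceiling_usd=47_000_000,
    scope="cybersecurity services",
    source_url="https://example.com/public-award-record",
)
print(grounded_claim(example))
```

The design choice worth noting is the refusal path: a record without a public source raises an error instead of producing copy, which mirrors the rule that only information already in the public record should be referenced.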

4. Invest in third-party validation that doesn't require client disclosure.

Industry certifications, awards, rankings, and analyst citations are legitimate external validators. A contractor recognized by a credible industry publication for technical excellence in a specific capability area has third-party documentation that AI engines can find and cite. One analysis found that 86% of AI citations came from brand-controlled content and listings rather than forums, but the remaining 14% (earned media, industry mentions, editorial coverage) carries disproportionate weight as a trust signal (Superlines).

5. Build entity clarity across the entire web.

AI systems build confidence about a brand based on how consistently and clearly the brand is described across all sources they can access. A contractor whose website, LinkedIn profile, GSA schedule listing, industry directory entries, and media mentions all consistently describe the same capabilities, the same differentiators, and the same named leadership team is far more likely to be cited than one whose public presence is fragmented or inconsistent. Citation suppressors include unclear authorship, inconsistent terminology, and technical barriers; AI systems can still use these pages sometimes, but they are less likely to cite them when cleaner, more attributable alternatives exist (Geol).
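A consistency audit of this kind can be sketched in a few lines of Python. This is an illustrative sketch only: the firm name, the profile sources, and the field names are made up, and the normalization rule (lowercasing, dropping punctuation and a few legal suffixes) is an assumption about what counts as a trivial variant.

```python
from collections import defaultdict

# Hypothetical snapshot of how one firm is described on each public profile.
profiles = {
    "website": {"name": "Acme Federal Solutions, LLC", "capability": "Zero Trust Architecture"},
    "linkedin": {"name": "Acme Federal Solutions", "capability": "zero trust architecture"},
    "directory": {"name": "Acme Fed", "capability": "Cybersecurity consulting"},
}


def normalize(value: str) -> str:
    """Lowercase, drop punctuation and common legal suffixes so trivial variants match."""
    cleaned = "".join(ch for ch in value.lower() if ch.isalnum() or ch.isspace())
    words = [w for w in cleaned.split() if w not in {"llc", "inc", "corp"}]
    return " ".join(words)


def find_inconsistencies(profiles: dict) -> dict:
    """Return each field described more than one way, with the sources per variant."""
    variants = defaultdict(lambda: defaultdict(list))
    for source, fields in profiles.items():
        for field, value in fields.items():
            variants[field][normalize(value)].append(source)
    return {
        field: dict(by_value)
        for field, by_value in variants.items()
        if len(by_value) > 1
    }


for field, by_value in find_inconsistencies(profiles).items():
    print(f"{field} is described {len(by_value)} different ways:")
    for value, sources in by_value.items():
        print(f"  '{value}' on {', '.join(sources)}")
```

In this made-up snapshot, the website and LinkedIn entries agree once the legal suffix is ignored, while the directory listing uses a different name and a different capability label, which is the kind of fragmentation described above.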

6. Develop a deliberate thought leadership content program.

Brand mentions across authoritative sources reportedly correlate with LLM citations about three times as strongly as backlinks do (Virayo). A principal or practice lead who consistently publishes substantive, named, credentialed content on platforms like LinkedIn and industry publications, and who gets cited, quoted, or referenced by other credible sources, generates the kind of brand mention signals that AI systems use to build authority profiles. The expertise is real. The challenge is making it visible.

The Deeper Irony

There is something genuinely unfair about the structural position federal contractors are in right now, and it's worth naming directly.

The government contracting community represents, in aggregate, some of the most sophisticated technical and operational expertise in the American private sector. The barriers to entry are high — security clearances, complex compliance requirements, rigorous past performance evaluation. The work is consequential. The operators who survive and thrive in this environment have typically earned their position through demonstrated performance, not marketing.

AI search, as currently structured, inverts that meritocracy. It rewards the loudest documented track record, not the strongest actual one. It favors transparency over excellence. It cites the firm that can publish, not necessarily the firm that performed.

Federal contractors built their reputations through procurement processes that were specifically designed to evaluate substance behind the silence — CPARS records, technical proposals, oral presentations, reference checks conducted through official channels. That system was designed for an environment where the buyers had access to information that the public did not.

That system still exists. But the channel that shapes perception before procurement even begins — the AI search response that tells a researcher which firms are worth calling — operates on entirely different rules. And those rules, right now, systematically disadvantage the contractors who followed the security protocols correctly.

This Is a Marketing Problem With a Marketing Solution

The good news is that the disadvantage is real but not insurmountable. It requires a different approach than traditional government contracting business development — one that takes AI search visibility seriously as a strategic priority, not an afterthought.

The firms that figure this out first will have a compounding advantage. AI citation visibility, once established, tends to reinforce itself. A firm cited regularly in AI responses for a particular capability area builds brand recognition among the researchers and staff who use those tools — and that recognition shapes who gets included in competitive procurement conversations before the formal process begins.

The window to build that visibility before the market consolidates around a handful of well-cited names is not infinite. The firms investing in this now — building deliberate, specific, public documentation of what they can discuss, developing thought leadership programs that generate external mentions, cleaning up their entity presence across the web — are building a durable asset.

The ones waiting until AI search is "more established" are already behind.

Sources cited in this piece:

Frase.io — Mastering AI Citations: The Complete GEO Playbook (March 2026)

LLMrefs.com — Generative Engine Optimization: The 2026 Guide to AI Search Visibility

Virayo — LLM SEO: The B2B Guide to Getting Cited in AI Search (April 2026)

GEOL.AI — Generative Engine Optimization: The Comprehensive Pillar Guide

ALM Corp — GEO Complete Guide & AI Search Trust Signals (2026)

SEOPress — How to Optimize Content for AI Overviews and Generative Search (February 2026)

Superlines — AI Search Statistics 2026: 60+ Data Points on Visibility and Citations

Position.Digital — 150+ AI SEO Statistics for 2026

Leapd.ai — How ChatGPT, Google AI Overviews, and Perplexity Source Information in 2026

Cross & Crown / CACPro — The Hidden Challenges of Marketing for Government Contractors (April 2025)

UpCounsel — Government NDA Basics: Protecting Federal Contract Secrets (October 2025)

Federal Register — National Industrial Security Program Operating Manual (NISPOM), December 2020

U.S. Army / NAVFAC — Operations Security (OPSEC) Guides for Defense Contractors

SAME / Deltek GovWin — Federal and SLED Contracting Trends 2025–2026

Ready to build AI search visibility that works within your compliance constraints?

We work with contractors, regulated industries, and businesses navigating exactly this kind of challenge. No pitch decks. No generic playbooks. Just strategy that accounts for how your business actually operates.

Talk to Ritner Digital →

Ritner Digital is a Philadelphia-based AI search and demand generation agency. We build the kind of presence that gets found — in Google, in ChatGPT, in Perplexity, and everywhere your buyers are looking.

Frequently Asked Questions

Can federal contractors appear in AI search results at all?

Yes, but it requires a deliberate strategy that works around security constraints rather than pretending they don't exist. AI search visibility is not exclusively dependent on published case studies. Entity clarity, consistent public documentation, methodology content, publicly available contract data from SAM.gov and USAspending.gov, thought leadership from named subject matter experts, and third-party citations from industry publications all contribute to how AI systems build confidence in a brand. The contractors who appear in AI-generated responses are not necessarily the ones with the most impressive classified track records; they're the ones who built the most coherent, specific, verifiable public presence with whatever they were allowed to publish.

What is the biggest mistake federal contractors make with their public-facing content?

Writing everything in generic capability language and calling it marketing. Phrases like "full-spectrum solutions," "mission-critical support," and "trusted federal partner" tell an AI search engine nothing it can use to cite you confidently. They also tell a procurement officer nothing they can act on. The instinct to stay vague — born, understandably, from years of operating under security constraints — ends up producing content that satisfies nobody. The goal is not to disclose sensitive information. The goal is to be as specific as possible about everything you are permitted to discuss, and to make that specificity visible, structured, and findable.
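A rough self-check for this problem can be automated. The sketch below is a minimal illustration, assuming that a paragraph with known generic phrases and no digits is a candidate for rewriting; the phrase list comes from the answer above, while the function name, the digit heuristic, and both sample sentences are made up for the example.

```python
import re

# The three phrases come from the answer above; extend the list for your own copy.
GENERIC_PHRASES = [
    "full-spectrum solutions",
    "mission-critical support",
    "trusted federal partner",
]

# Matches any digit, so "$47M", "99.97%" and "2024" all count as concrete detail.
NUMBER_PATTERN = re.compile(r"\d")


def review_paragraph(text: str) -> dict:
    """Flag generic phrases and note whether the paragraph contains any number."""
    lowered = text.lower()
    found = [phrase for phrase in GENERIC_PHRASES if phrase in lowered]
    return {
        "generic_phrases": found,
        "has_number": bool(NUMBER_PATTERN.search(text)),
    }


# Made-up sample sentences for illustration only.
vague = "As a trusted federal partner, we deliver full-spectrum solutions."
specific = "We hold a GSA Schedule and have staffed 14 unclassified task orders since 2021."

for sample in (vague, specific):
    print(review_paragraph(sample))
```

A check like this cannot judge whether a specific claim is permitted for release; it only surfaces paragraphs that say nothing concrete, so the disclosure review still has to happen separately.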

Does traditional SEO still matter for government contractors, or is GEO the only thing worth investing in?

Both matter, and they are more interdependent than most people realize. Traditional SEO — technical site health, domain authority, backlink profiles, on-page optimization — still influences whether AI systems can find and trust your content. Several studies have found that pages ranking well in organic search have a meaningful correlation with AI citation rates, particularly for Google AI Overviews. That said, the relationship is loosening. Roughly 80% of URLs cited by AI platforms do not rank in Google's top 100 for the original query. GEO-specific signals — entity clarity, named authorship, grounded claims, structured content — now operate somewhat independently of traditional ranking position. The right answer for most federal contractors is a program that handles both, with a content strategy built specifically around what AI systems reward.

How do publicly available federal contract databases factor into AI search visibility?

More than most contractors realize. USAspending.gov, SAM.gov contract award data, GSA Schedule listings, and published contract opportunity notices are all publicly accessible and, in principle, indexable by AI crawlers. A contractor who has been awarded a significant IDIQ vehicle, holds a GSA Schedule, or appears in published award data has publicly verifiable proof of federal relationships that does not require disclosing anything about program content. Referencing these on your own site (with specificity, not just as a logo farm) creates grounded, verifiable claims that AI systems can cross-reference. "We hold a $47M indefinite-delivery contract vehicle with [Agency] for IT modernization services" is a citable fact, not a disclosure risk.

What content types work best for federal contractors trying to build AI search visibility?

The formats that perform best given the constraints are methodology and process content, regulatory and compliance analysis, original frameworks and assessment tools, technical explainers tied to specific capability areas, and named executive thought leadership. These formats allow a contractor to demonstrate genuine expertise and specificity without requiring client disclosure. A detailed post on how your team approaches a particular type of assessment — the questions you ask, the frameworks you apply, the failure modes you watch for — is far more citable than a general overview of your service line. Add named authors with visible credentials and you've built a trust signal that AI systems can actually use.

How does security clearance work affect a firm's ability to build AI search visibility?

The clearance itself is not the limiting factor — the contractual and regulatory constraints attached to classified work are. Many cleared contractors have significant commercial work, state and local government work, and unclassified federal work that is fully publishable. The problem is that most of them apply the same vague, restricted communication style to everything, even content that has no disclosure risk. The strategic move is to segment your content program deliberately: identify everything you are legally permitted to discuss in full detail, and invest disproportionately in making that content as specific, structured, and externally visible as possible. The classified work builds the CPARS record. The unclassified content builds the AI search presence.

How long does it take for a federal contractor to see meaningful AI search visibility?

Longer than a commercial firm in an unrestricted industry, and for reasons that compound. Building AI visibility requires accumulating external citations, consistent brand mentions across authoritative sources, and a content footprint that AI systems can index, trust, and cite — none of which happen overnight. For most organizations, meaningful citation frequency in AI-generated responses takes six to twelve months of consistent effort. For federal contractors starting from a thin public presence, the realistic timeline is toward the longer end of that range. The firms that start now will reach critical visibility mass while their competitors are still debating whether this matters. The firms that wait will face a steeper climb into an environment where early-mover citation patterns are already entrenched.

Is this a problem unique to defense contractors, or does it affect all government contractors?

It affects the broader government contracting community, but the severity scales with the sensitivity of the work. A contractor doing facilities management or document services for civilian agencies faces fewer disclosure constraints than one supporting intelligence community programs — and can build a more robust public case study portfolio as a result. The structural disadvantage is most acute for cleared defense contractors, IC-adjacent firms, and any contractor working under ITAR, CUI, or Special Access Program restrictions. But even contractors in lower-sensitivity categories often under-invest in public documentation simply because the culture of the industry defaults to discretion. The mindset shift required is recognizing that discretion about classified content and specificity about everything else are not in conflict — they are compatible, and the combination is exactly what a smart GEO strategy looks like.