Why New Domains Die in the Index Queue — and Why Checking Daily Is the Difference Between Weeks and Months of Lost Visibility

The Invisible Wall Every New Domain Hits

You've done everything right. You hired a writer, published your first five blog posts, submitted your sitemap, and requested indexing through Google Search Console. You refresh your analytics dashboard expecting to see traffic materialize.

Nothing.

A week passes. Still nothing. Two weeks. A month.

The content is live. The site is technically sound. You can visit every page in your browser without issue. But to Google, your domain barely exists. And more importantly — to the buyers searching for what you sell — you don't exist at all.

This is not a content quality problem. It is not a keyword problem. It is an indexing problem: specifically, the indexing constraint that every new domain faces and that almost no one outside technical SEO circles fully understands. Without active daily management, it can set your go-to-market timeline back by weeks or months with no obvious indication that anything is wrong.

This post explains exactly what is happening, why it happens, what the quota limits actually are, and why the difference between an agency that checks for indexing windows daily and one that doesn't is often the difference between a company that gains search visibility in month two and one that is still waiting in month six.

Part One: The Crawl Budget Problem — Why Google Treats New Domains Like Suspicious Strangers

To understand the indexing constraint, you first need to understand what crawl budget is and why new domains have almost none of it.

The amount of time and resources that Google devotes to crawling a site is called the site's crawl budget. Crawl budget is determined by two main elements: crawl capacity limit and crawl demand. Googlebot calculates a crawl capacity limit (the maximum number of simultaneous parallel connections it can use to crawl a site, and the time delay between fetches) to provide coverage of all important content without overloading servers. The crawl capacity limit can go up or down based on a few factors, starting with crawl health: if the site responds quickly for a while, the limit goes up. (Google)

The key phrase is "based on a few factors": for new domains, every single one of those factors starts at zero.

New sites with zero authority get microscopic crawl budgets. Domain age matters: brand-new domains are treated like suspicious strangers. Google doesn't trust new domains yet. Established domains that have been around for years get the red-carpet treatment. (CrawlWP)

The factors that play a significant role in determining crawl demand are perceived inventory and popularity: URLs that are more popular on the internet tend to be crawled more often to keep them fresher in the index. (Google)

A new domain has no popularity signals. It has no backlinks pointing to it. It has no historical engagement data. It has no track record of publishing content that Google's users have found valuable. As a result, Google allocates it the crawling equivalent of a slow drip rather than a steady stream — and that drip is what determines how quickly your content can move from "published" to "visible in search results."

The practical timeline this creates is well documented in the SEO community, even if Google won't put official numbers on it. Brand-new domains face a predictable progression. In the first two to four weeks, Google barely crawls the new domain; a few pages may get indexed, but slowly. The crawl budget is tiny while Google decides whether the domain is legitimate or spam. In weeks four to eight, if quality content has been published consistently and a few backlinks have been earned, crawling increases and more pages begin to get indexed. In months three to six, things start to click: if done correctly, Google starts treating the site like a real website, crawl budget increases, rankings appear, and traffic grows. (CrawlWP)

That three-to-six-month window assumes everything is being done correctly. Most new domains are not doing everything correctly, because most new domains do not have someone monitoring their indexing status with the rigor the early days require.

Part Two: The Quota Wall — What Founders Don't Know Is Costing Them

Here is the specific technical constraint that most founders learn about only after they have already been burned by it.

When you publish a new page and want Google to know about it quickly, you have two primary mechanisms. The first is submitting your sitemap and waiting for Googlebot to crawl it on its own schedule — which, for a new domain with minimal crawl budget, could be days, weeks, or longer. The second is manually requesting indexing through the URL Inspection tool in Google Search Console — essentially raising your hand and saying "this page exists, please check it."

The URL Inspection tool is the faster path. But it has a limit.

Google doesn't spell out the exact number, but most practitioners who use the tool regularly report being able to manually request indexing for about 10 to 20 URLs per day, counted per account rather than per website. Once you hit the daily limit, you see the "Quota Exceeded" message and cannot submit more until the window resets. (UltimateWB)

Many SEO users report being allowed to submit only approximately 10 to 12 requests per 24 hours before hitting the quota limit. After exceeding the limit, the typical wait before Google resets the quota is approximately 24 hours. Google's John Mueller has said that the quota for the "Request Indexing" function is unlikely to be dramatically raised because of misuse and spam concerns. (GabiDobi)

Let that number sit for a moment. Ten to twelve URLs per day.

If you publish a content-forward go-to-market strategy (say, 20 blog posts in your first month, plus a services page, an about page, case study pages, and a resource library), you easily have 40 or 50 URLs competing for roughly 10 daily manual indexing slots. At that rate, clearing the backlog takes four to five days of fully used quota, and every day on which nobody submits anything pushes the finish line back by a day. That also assumes your quota resets cleanly every 24 hours, which it sometimes does not.
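The arithmetic can be sketched in a few lines of Python. This is only an illustration: the function names are hypothetical, the quota of 10 is the practitioner-reported figure rather than an official Google number, and the "check every n days" model is a simplifying assumption about how a team that does not monitor daily actually behaves.

```python
import math

def submission_days(backlog_urls: int, daily_quota: int) -> int:
    """Number of quota windows needed to submit every URL in the backlog."""
    return math.ceil(backlog_urls / daily_quota)

def calendar_days(backlog_urls: int, daily_quota: int, check_every_n_days: int = 1) -> int:
    """Calendar days until the last URL is submitted when quota is used only every n-th day."""
    windows = submission_days(backlog_urls, daily_quota)
    if windows == 0:
        return 0
    # The first window is used on day 1; each later window waits n days.
    return (windows - 1) * check_every_n_days + 1

print(submission_days(50, 10))   # 5 windows of fully used quota
print(calendar_days(50, 10, 1))  # 5 calendar days when someone submits daily
print(calendar_days(50, 10, 7))  # 29 calendar days when someone submits weekly
```

The same 50-URL backlog that a daily routine clears in about a week takes roughly a month when the quota is only touched once a week, which is the gap the rest of this post is about.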

The perception that quota is "already consumed" often stems from simultaneous actions by multiple users, automated scripts, or repeated inspection attempts. Some limits operate on rolling minute-based windows while others reset daily, and these resets may not align with the change of date in your local timezone. (Incremys)

This creates a situation where the founder or marketing manager who checks indexing status once a week is systematically falling behind. Every day the quota window resets and no one submits anything is a day of indexing capacity that is gone permanently. You cannot carry unused quota forward. You cannot bank it. If you miss Monday's window, Monday's quota does not roll into Tuesday. It simply disappears.

Part Three: The Index Window — What "Checking Daily" Actually Means

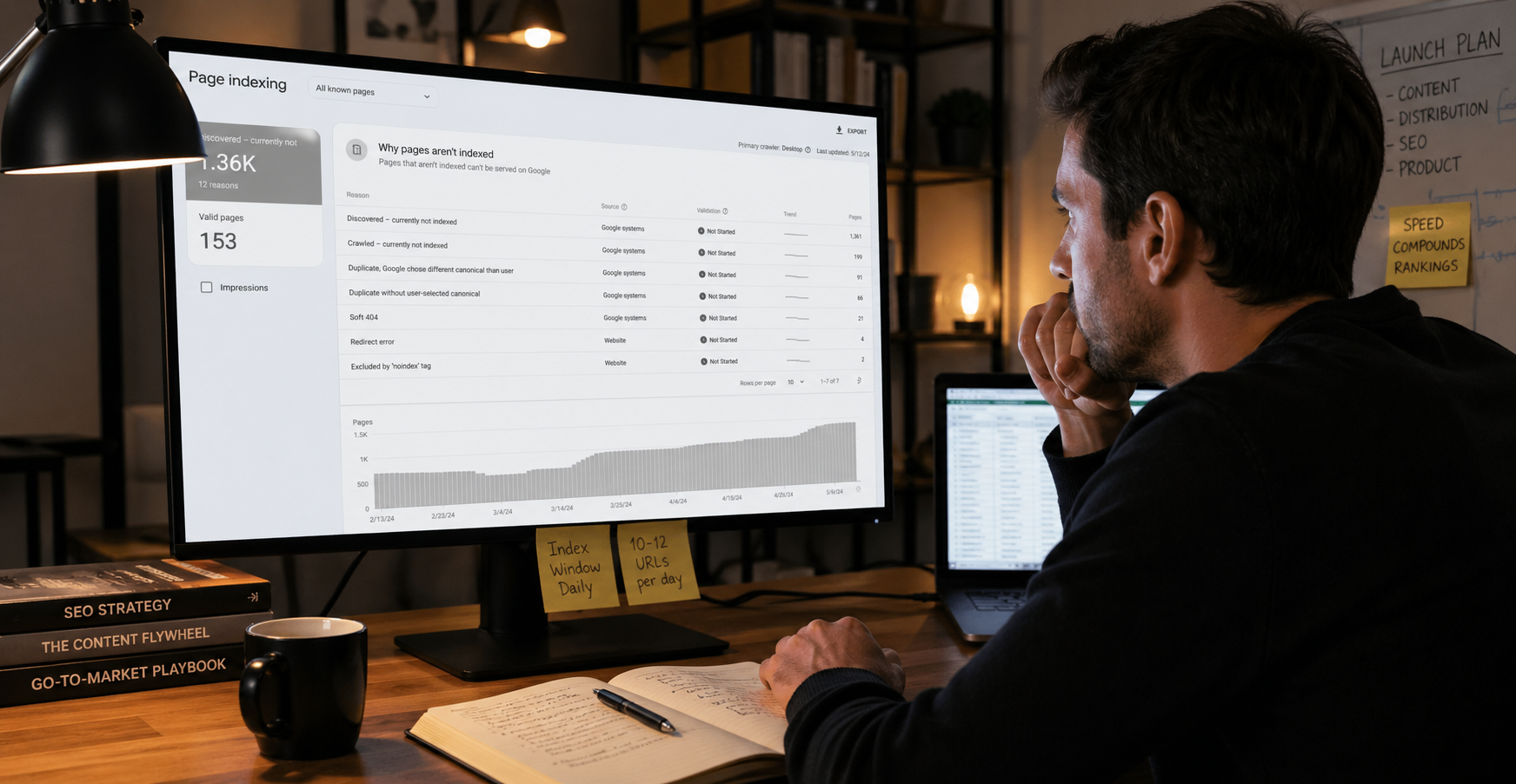

The phrase "checking for index windows" refers to a specific operational discipline: monitoring your indexing queue status daily, submitting the highest-priority available URLs the moment the quota resets, and tracking which pages are indexed versus still in the "Discovered — currently not indexed" status in Search Console.

This sounds simple. In practice, it requires a structured daily process that most internal marketing teams — especially at early-stage companies where everyone is doing five jobs — simply do not maintain.

Here is what the daily index window monitoring process actually involves:

Morning quota check. The first task each day is checking whether the previous day's quota has reset. Because resets don't always correspond to midnight in your timezone, the reset window can fall anywhere across a 24-hour period. An SEO team monitoring this daily knows when their quota typically resets and can submit the next batch of priority URLs immediately, maximizing the number of days on which quota is used productively.

Priority queue management. With a limit of 10 to 12 manual submissions per day, you cannot afford to submit low-priority URLs. Every slot goes to the content that matters most for your go-to-market: the service pages that convert, the comparison content that buyers search late in their decision process, the case studies that build credibility. Managing this priority queue correctly requires understanding both your content calendar and your buyer journey — knowing which content needs to be indexed this week versus which can wait for natural crawl.

Coverage report triage. Google Search Console's Coverage report (labeled "Page indexing" in the current Search Console interface) shows the status of every URL Google knows about on your domain. The statuses you are monitoring for are "Discovered – currently not indexed" (Google knows the page exists but hasn't crawled it yet), "Crawled – currently not indexed" (Google crawled it but decided not to index it, which is a content quality signal worth investigating), and "Indexed" (live in search results). Sites with a large portion of their total URLs classified by Search Console as "Discovered – currently not indexed" are the exact sites for which crawl budget management is most critical. (Google)

Sitemap hygiene. Google reads your sitemap regularly, so keeping it up to date and including the <lastmod> tag for updated content helps Google stay informed about what is available to crawl. (Google) A sitemap that is not updating dynamically, or that includes URLs you do not want crawled, wastes what little crawl budget a new domain has.

Crawl error monitoring. Soft 404 pages will continue to be crawled and waste your budget. By contrast, Google's guidance is that pages returning genuine 4xx status codes (other than 429) do not waste crawl budget, so a removed page should return a true 404 or 410 rather than a soft 404. Making pages efficient to load allows Google to read more content from the site. (Google) For a new domain where every crawl unit is precious, letting soft 404s and slow pages consume crawl budget is the equivalent of paying for media buys that go nowhere.
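The quota check, priority queue, and status triage steps above can be combined into one small daily routine. The sketch below is a hedged illustration, not a description of any Search Console API: the `Page` record, the tier numbers, the `example.com` URLs, and the idea of typing status labels in by hand from the report are all assumptions. It also makes one design choice worth naming: pages that Google has already crawled and declined to index are routed to a review list instead of being resubmitted.

```python
from dataclasses import dataclass

DAILY_SLOTS = 10  # assumed manual quota; practitioners report roughly 10 to 12

@dataclass(frozen=True)
class Page:
    url: str
    tier: int        # 1 = highest priority (hypothetical tiering scheme)
    status: str      # status label copied from the Search Console report
    published: str   # ISO date; older pages win ties within a tier

def todays_batch(pages, slots=DAILY_SLOTS):
    """URLs to submit today: skip indexed and crawled pages, then sort by tier and age."""
    waiting = [p for p in pages if not p.status.startswith(("Indexed", "Crawled"))]
    waiting.sort(key=lambda p: (p.tier, p.published))
    return [p.url for p in waiting[:slots]]

def needs_review(pages):
    """URLs Google crawled but did not index; these need a content check, not a resubmit."""
    return [p.url for p in pages if p.status.startswith("Crawled")]

pages = [
    Page("https://example.com/blog/post-a", 2, "Discovered - currently not indexed", "2026-01-05"),
    Page("https://example.com/services", 1, "Discovered - currently not indexed", "2026-01-08"),
    Page("https://example.com/about", 3, "Indexed", "2026-01-02"),
    Page("https://example.com/blog/post-b", 2, "Crawled - currently not indexed", "2026-01-03"),
    Page("https://example.com/case-study", 1, "Discovered - currently not indexed", "2026-01-06"),
]

print(todays_batch(pages, slots=2))
print(needs_review(pages))
```

With only two slots available, both go to the tier-one pages (the case study first, because it was published earlier), and the tier-two blog post waits for the next reset.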

Part Four: The Compound Cost of Not Monitoring

The reason daily index window monitoring matters so much in the early months of a new domain is the compounding nature of search visibility.

A page that gets indexed in week three of your domain's existence can start accumulating engagement signals — impressions, clicks, dwell time — that feed back into Google's assessment of your crawl demand. A page that sits in "Discovered — currently not indexed" for six weeks accumulates nothing. When it eventually gets indexed, it is starting from the same zero baseline it would have started from on day one — except you have lost six weeks of compounding.

Brand-new domains typically see the following progression: first two to four weeks with almost no crawling, weeks four to eight with slowly increasing crawl activity if quality signals are accumulating, and months three to six when things begin to click if everything has been done correctly. (CrawlWP) The "if everything has been done correctly" clause is doing significant work in that sentence. The difference between a domain that exits month three with meaningful search visibility and one that is still struggling in month six is almost always traceable to what happened in months one and two: specifically, whether someone was actively managing the indexing queue during those critical early weeks.

Consider the math for a company with an aggressive content strategy publishing ten pieces per month:

Without daily indexing management: Pages get submitted whenever someone remembers to do it. Quota is sometimes left unused. Some pages sit in "Discovered — currently not indexed" for three to four weeks before getting manually submitted. Natural crawl eventually picks up some pages, but slowly. By month three, perhaps 60% of published content is indexed.

With daily indexing management: Every quota reset is captured. Priority content gets submitted immediately. The Coverage report is reviewed daily and any pages moving into problematic statuses are flagged and investigated. Sitemap is current and clean. By month three, 90%+ of published content is indexed and accumulating authority signals.

The difference between those two scenarios is not just the indexing percentage. It is the compounding authority those pages build during the months they are indexed. A page indexed in week three of month one has three more months of authority accumulation than a page not indexed until month four. At the early stages of a new domain, that gap is the difference between starting to rank in month four and still waiting for month seven.

Part Five: What a Best-in-Class SEO Team Does That Generalists Don't

The distinction between a rigorous SEO team and a generalist marketing team is nowhere more visible than in index window management, because the value of the discipline is invisible until you see the alternative play out.

A generalist marketing team — or an internal hire doing SEO as part of a broader role — will typically:

Submit new pages when they remember to

Check Search Console occasionally, not daily

Not understand the quota reset timing for their specific account

Not maintain a priority queue for manual submissions

Not triage the Coverage report systematically

Not notice when crawl budget is being wasted on soft 404s or thin pages

None of these failures is a sign of negligence. They reflect the reality that daily index window monitoring is a specific, somewhat tedious operational discipline that requires both the technical knowledge to understand what to do and the organizational systems to actually do it every day without fail.

A rigorous SEO team treats the indexing queue like a pipeline:

They know their quota reset window. Through daily monitoring, they identify when their account's quota typically resets and structure their submission workflow around that timing — not around when someone happens to open Search Console.

They maintain a living priority queue. Before a single piece of content is published, it is assigned a priority tier based on its strategic importance to go-to-market. Tier one gets submitted the day it publishes. Tier two gets submitted when tier one is covered. This discipline ensures that the 10 to 12 daily slots always go to the highest-value content, not whatever someone submitted last.

They monitor crawl budget consumption actively. To reduce dependency on manual indexing requests, rigorous SEO teams prioritize URLs with genuine business value such as commercial pages and high-conversion-potential content, strengthen internal linking architecture, maintain clean and up-to-date sitemaps to facilitate natural discovery by Google, and reserve manual indexing requests exclusively for genuinely critical situations. (Incremys)

They investigate "Crawled — currently not indexed" statuses immediately. When Google crawls a page but declines to index it, that is a quality signal that requires investigation — not a check-in-two-weeks situation. A page in this status means Google saw your content and decided it was not worth including in the index. That decision needs to be understood and addressed before you publish more content with the same characteristics.

They track indexing lag as a KPI. The time between when a page is published and when it first appears in the index is a measurable, trackable metric. A rigorous SEO team tracks this for every page, uses it to identify anomalies (a page that should have been indexed in three days but is still pending at two weeks is a flag), and uses the trend data to assess whether crawl budget is growing appropriately as the domain matures.
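A minimal sketch of the lag-tracking idea, assuming the team keeps its own log of publish dates and first-seen-indexed dates (Search Console does not hand you this table directly, so the record layout and function names here are hypothetical). The 14-day threshold mirrors the "still pending at two weeks" flag described above.

```python
from datetime import date
from typing import Optional

def index_lag_days(published: date, indexed: Optional[date], today: date) -> int:
    """Days from publication to first indexing, or to today if the page is still pending."""
    return ((indexed or today) - published).days

def overdue(records, today: date, threshold_days: int = 14):
    """URLs that are still unindexed threshold_days or more after publishing."""
    return [
        url
        for url, published, indexed in records
        if indexed is None and index_lag_days(published, None, today) >= threshold_days
    ]

today = date(2026, 2, 1)
records = [
    ("/pricing", date(2026, 1, 10), date(2026, 1, 13)),  # indexed after 3 days
    ("/guide", date(2026, 1, 15), None),                 # pending for 17 days
    ("/new-post", date(2026, 1, 28), None),              # pending for 4 days
]

print(index_lag_days(date(2026, 1, 10), date(2026, 1, 13), today))  # 3
print(overdue(records, today))                                      # ['/guide']
```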

Part Six: The Indexing API — The Faster Path and Its Limits

For teams willing to invest in a slightly more technical setup, the Google Indexing API offers a meaningfully larger daily quota than the manual URL Inspection tool.

With a daily quota of 200 requests per project, the Indexing API is a significant upgrade from the handful of manual submissions available through the URL Inspection tool in Search Console. (TrySight) However, the Indexing API was officially designed for job posting and livestream content. Google has not formally extended its intended use to general web pages, which means its effectiveness for other content is variable and subject to change.

The Google Indexing API is free at the default quota of 200 requests per day. Additional quota above that does not come free: it requires a billing account. To increase quota, you navigate to the Google API Console's Quotas and System Limits tab, tick the quota you want to increase, and apply for a higher quota with an explanation of why the default is insufficient. (Magefan)

For new domains launching with a large content library that needs to be indexed quickly, the Indexing API setup is worth the technical investment — but it does not eliminate the need for daily monitoring. The 200-request daily limit still requires prioritization. And the Coverage report still needs to be reviewed regardless of which submission mechanism you use, because the Indexing API submission does not guarantee indexing any more than the manual request does.
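As a rough sketch of what prioritizing within the 200-request limit looks like in code, the snippet below prepares notification bodies for the Indexing API's `urlNotifications:publish` method and defers anything beyond the day's remaining quota. It deliberately stops short of sending anything: authentication (a service account authorized for the Indexing API) and the HTTP call are omitted, and `build_notifications` and the `example.com` URLs are hypothetical names used for illustration only.

```python
import json

# Publish endpoint of the Indexing API; each request body carries one URL.
ENDPOINT = "https://indexing.googleapis.com/v3/urlNotifications:publish"
DAILY_QUOTA = 200  # default per-project quota cited above

def build_notifications(urls, already_sent_today=0, quota=DAILY_QUOTA):
    """Return (request_bodies, deferred_urls) for a priority-ordered list of URLs."""
    remaining = max(quota - already_sent_today, 0)
    send_now, deferred = urls[:remaining], urls[remaining:]
    bodies = [json.dumps({"url": u, "type": "URL_UPDATED"}) for u in send_now]
    return bodies, deferred

# Assume the list is already sorted with the highest-priority URL first.
urls = [f"https://example.com/page-{i}" for i in range(250)]
bodies, deferred = build_notifications(urls, already_sent_today=0)

print(len(bodies), len(deferred))  # 200 50
print(bodies[0])
```

Each body would then be sent as an authenticated POST to `ENDPOINT`; the deferred list becomes the head of tomorrow's queue, and the resulting index status still has to be checked afterwards.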

Part Seven: The GTM Timeline Implications

For founders and marketing leaders planning a go-to-market launch on a new domain, the indexing constraint has direct implications for how you should sequence your content strategy and how you should set expectations for organic search as a channel.

Do not plan for organic search visibility in month one. For a new domain, the realistic expectation is that meaningful organic traffic begins appearing in months three to five — assuming rigorous indexing management from day one. Planning for earlier visibility is setting yourself up for disappointment and potentially for the wrong decisions (pulling budget from SEO into paid because "organic isn't working" when it simply hasn't had time to work yet).

Front-load your highest-priority content. The pages that are most important to your business — your service pages, your flagship comparison content, your cornerstone educational pieces — should be published and submitted first, not at the end of your content calendar. These are the pages you want accumulating authority from the earliest possible moment.

Build internal links from day one. The biggest bottleneck for indexing is timely crawling: the faster Google crawlers discover a new web page or detect changes in existing ones, the sooner the URL is likely to appear in search results. (SEOZoom) Internal links are one of the primary mechanisms through which Google discovers new pages through natural crawl rather than manual submission. A site where every new page is linked from at least two existing pages gives Google a crawl path to that content independent of sitemap submission.

Earn backlinks early. Even one or two backlinks from credible external domains significantly increase a new domain's crawl demand signal. Backlinks accelerate discovery: earning even a few tends to increase crawling. (CrawlWP) Guest posts, press coverage, partnership pages, and directory listings are all viable early-stage backlink strategies for new domains that need to increase their crawl budget signal quickly.

Set your internal reporting to index lag, not just rankings. In the first three months of a new domain, ranking data is not a useful performance metric — you have no rankings yet. Index lag — the time between publishing and indexing — is the metric that tells you whether your technical foundation is working. A team that can see index lag decreasing over the first few months (going from three-week average in month one to three-day average in month three) knows its crawl budget is growing. A team that is not tracking index lag has no way to distinguish between "organic is working as expected" and "something is wrong."
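For reporting, the trend matters more than any single page. A hedged sketch of the monthly roll-up, again assuming a hand-maintained log of (published, indexed) date pairs; the dates below are invented sample data chosen only to show the shape of the output, not measurements from a real site.

```python
from collections import defaultdict
from datetime import date

def monthly_index_lag(records):
    """Average days from publish to index, grouped by the month of publication."""
    by_month = defaultdict(list)
    for published, indexed in records:
        by_month[published.strftime("%Y-%m")].append((indexed - published).days)
    return {month: round(sum(lags) / len(lags), 1) for month, lags in sorted(by_month.items())}

# Invented sample log: (published, first seen indexed)
log = [
    (date(2026, 1, 5), date(2026, 1, 26)),
    (date(2026, 1, 10), date(2026, 1, 30)),
    (date(2026, 2, 2), date(2026, 2, 12)),
    (date(2026, 2, 10), date(2026, 2, 18)),
    (date(2026, 3, 3), date(2026, 3, 6)),
]

trend = monthly_index_lag(log)
print(trend)  # {'2026-01': 20.5, '2026-02': 9.0, '2026-03': 3.0}
```

A falling series like this is the signal to look for in the first quarter; a flat or rising one is the cue to investigate before rankings data exists to tell you anything.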

Launching a new domain or going to market on a site that isn't gaining search visibility as fast as you expected?

Talk to us about what's actually happening — and what to do about it → ritnerdigital.com/#contact

Frequently Asked Questions

Why won't Google just index everything I publish immediately?

Google describes its index as containing hundreds of billions of webpages and being well over 100,000,000 gigabytes in size. Googlebot has finite crawling capacity and allocates it based on signals of content value and site authority. New domains have no authority signals, which means they receive minimal crawling resources until they demonstrate value through backlinks, engagement, and consistent quality publishing. There is no mechanism to override this allocation; you can only build the signals that increase it over time.

What is the exact daily limit for URL Inspection requests in Google Search Console?

Google does not publish an official number. Based on consistent reporting from practitioners, the limit is approximately 10 to 12 manual indexing requests per 24-hour rolling window per account. The exact number varies by account and by how the quota window aligns with your timezone. Hitting "Quota Exceeded" means you have reached this limit and need to wait for the window to reset before submitting more.

What happens to content that sits in "Discovered — currently not indexed" for weeks?

Nothing productive. A page in this status has been discovered by Google — meaning Googlebot knows the URL exists, usually from your sitemap — but has not yet been allocated the crawl resources to actually fetch and evaluate the page. It accumulates no authority signals, generates no impressions in Search Console, and has zero search visibility. Every week it sits in this status is a week of potential compounding that is permanently lost.

Can I just submit my sitemap once and let Google handle the rest?

For an established domain with significant crawl budget, yes — sitemap submission plus natural crawl is often sufficient. For a new domain with minimal crawl budget, no. Natural crawl on a new domain can take weeks to reach new pages. Active daily management of the indexing queue — combined with clean sitemap maintenance, strong internal linking, and early backlink acquisition — is what compresses that timeline to days rather than weeks.

How long before a new domain builds enough authority to not need daily indexing management?

The transition point varies, but most new domains with consistent quality publishing and active indexing management begin to see meaningful natural crawl improvement around months three to four. By month six, if authority signals have accumulated appropriately, most new content is being crawled within a few days of publication without manual intervention. At that point, daily index monitoring can transition to weekly without significant cost to visibility. Before that point, daily monitoring is the difference between a timeline measured in weeks and one measured in months.

Why can't my marketing manager just handle this alongside their other responsibilities?

They can — if daily indexing management is their only technical SEO responsibility. In practice, it rarely is. The discipline requires consistent daily action at a specific timing window, familiarity with Search Console's Coverage report, understanding of crawl budget signals, and the technical judgment to prioritize a queue correctly. When this responsibility sits alongside content creation, social media, paid campaigns, and reporting, it is systematically deprioritized — not because the person is incompetent, but because the urgency is invisible until the damage is already done.

What is the Google Indexing API and should a new domain use it?

The Indexing API is a developer tool that allows you to programmatically notify Google when pages are published or updated. Its official intended use is job postings and livestream structured data, but many SEO teams use it for general content with variable results. Its daily quota of 200 requests per project is significantly larger than the manual URL Inspection limit. For new domains publishing high volumes of content, the Indexing API setup is worth investigating — but it requires technical implementation and does not eliminate the need for Coverage report monitoring.

References

Google Search Central. (2025, December). Crawl Budget Management. Google Developers. https://developers.google.com/crawling/docs/crawl-budget

Google Search Central. (2025, October). Crawl Budget Management for Large Sites. Google Developers. https://developers.google.com/search/docs/crawling-indexing/large-site-managing-crawl-budget

CrawlWP. (2026, March). How Long Before Google Indexes a New Website and Page? CrawlWP. https://crawlwp.com/how-long-before-google-index-new-website-page/

Incremys. (2026, March). Google Search Console Quota: Managing Limits in 2026. Incremys. https://www.incremys.com/en/resources/blog/google-search-console-quota

UltimateWB. (2025). How Many URLs Can You Submit to Google Search Console Per Day? UltimateWB Blog. https://www.ultimatewb.com/blog/6242/how-many-urls-can-you-submit-to-google-search-console-per-day/

GabiDobi. (2025, October). Fix "Indexing Quota Exceeded" in Google Search Console. GabiDobi Blog. https://gabidobi.com/indexing-quota-exceeded-google-search-console/

TrySight. (2026, January). A Guide to Google Request Indexing in Search Engines. TrySight. https://www.trysight.ai/blog/google-request-indexing

Magefan. (2024, March). How to Increase Google Indexing API Requests Per Day. Magefan Blog. https://magefan.com/blog/increase-google-indexing-api-quota-per-day

SEOZoom. (2024, January). How Long Does Google Take to Index a New Page? SEOZoom. https://www.seozoom.com/google-indexing-time-seo/

Conductor. (2024). How Long Does Google Take to Index a New Website? Conductor Academy. https://www.conductor.com/academy/google-index/faq/indexing-speed/

Ritner Digital is a B2B digital marketing agency specializing in technical SEO, AI-era content strategy, and search visibility. We monitor indexing windows daily for every client — because for new domains, the compounding cost of missed quota windows is real, measurable, and completely avoidable.