How Long Does It Take to Implement a Marketing Measurement Solution and See Results?

It's one of the most common questions we hear from marketing directors and business owners who are finally ready to get serious about measurement: "If we do this right, how long before we actually know what's working?"

The honest answer requires separating two very different things — how long it takes to implement a measurement solution, and how long it takes for that solution to produce insights you can act on with confidence. They are not the same timeline, and conflating them is one of the most reliable ways to set unrealistic expectations and abandon a measurement investment before it delivers its value.

This guide walks through both timelines, phase by phase, so you can go into a measurement initiative with clear eyes.

Why This Question Is Harder Than It Looks

Marketing measurement has never been more important, or more complex. 55% of US marketers believe that a poorly integrated data environment has caused a loss of revenue, and 34% of CMOs don't trust their data (Funnel). That distrust is expensive. When leadership can't rely on measurement, decisions default to opinion, budget allocation becomes political rather than analytical, and channels that look good in platform-reported metrics quietly drain money while channels that look modest do the real work.

While 30% of the measurement battle is assembling the right KPIs and toolkit, 70% is getting the people and processes in place to enable those KPIs to drive decisions (BCG).

That ratio explains a lot. The technical implementation of a measurement solution — the tracking setup, the integrations, the dashboards — is often the faster part. The slower, harder, more valuable part is building the organizational infrastructure around it that turns data into decisions.

Phase 1: Audit and Discovery (Weeks 1–2)

Before anything is built, the current state has to be honestly assessed. This phase involves a full audit of your existing tracking infrastructure, analytics platforms, data integrations, and reporting processes — not to celebrate what's working, but to surface every gap, inconsistency, and broken connection that is currently corrupting your data.

A typical migration or implementation takes four to eight weeks when done properly, including parallel tracking and validation. The audit and planning phase in week one should assess technical health, integration status, and existing data quality before any new infrastructure is built (Cometly).

Common discoveries during this phase: conversion events that are misconfigured or double-firing, ad platform data that doesn't reconcile with actual leads or sales, attribution models that are giving last-click credit to channels that had nothing to do with acquisition, and dashboards that report on metrics no one is actually using to make decisions.

Between 20% and 30% of GA4 setups have validation issues such as duplicate events or missing parameters, which directly affect ad spend allocation (Trackingplan). If your current measurement setup has never been audited, assume that problems exist, not merely that they might.
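To make the two issue types above concrete, here is a minimal sketch of an audit check over exported conversion events. The field names (`transaction_id`, `value`, `currency`) and the sample records are assumptions for illustration, not a GA4 export schema; adapt them to whatever your export actually contains.

```python
from collections import Counter

# Parameters we assume every conversion event should carry (hypothetical list).
REQUIRED_PARAMS = ("transaction_id", "value", "currency")


def audit_events(events):
    """Return (duplicate_ids, missing) for a list of event dicts.

    duplicate_ids: transaction IDs that appear more than once.
    missing: (index, [missing parameter names]) for each incomplete event.
    """
    counts = Counter(
        e.get("transaction_id") for e in events if e.get("transaction_id")
    )
    duplicate_ids = sorted(tid for tid, n in counts.items() if n > 1)

    missing = []
    for i, event in enumerate(events):
        absent = [p for p in REQUIRED_PARAMS if event.get(p) in (None, "")]
        if absent:
            missing.append((i, absent))
    return duplicate_ids, missing


# Illustrative data only.
events = [
    {"transaction_id": "T1", "value": 120.0, "currency": "USD"},
    {"transaction_id": "T1", "value": 120.0, "currency": "USD"},  # double-fired
    {"transaction_id": "T2", "value": 80.0},                      # no currency
]

duplicates, missing = audit_events(events)
print(duplicates)  # ['T1']
print(missing)     # [(2, ['currency'])]
```

A check like this only surfaces symptoms. The audit still has to trace each duplicate or missing parameter back to the tag or trigger that caused it.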

What you should have at the end of this phase: a documented picture of your current measurement state, a clear inventory of every gap that needs to be addressed, and a prioritized implementation plan that sequences fixes by business impact.

Phase 2: Technical Implementation (Weeks 2–5)

This is the phase where the infrastructure is actually built — tracking tags configured, conversion events defined and validated, integrations between ad platforms and analytics tools established, and reporting frameworks constructed.

A structured implementation breaks into phases: setup and integration in weeks two and three, parallel tracking and validation in weeks four through six, team training in week seven, and final cutover in week eight (Cometly).

For most businesses, the core technical work involves:

Conversion tracking setup. Every meaningful action a visitor can take on your website (form submission, phone call, purchase, download, chat initiation) needs to be tracked as a distinct conversion event with an assigned value. At minimum, most businesses need Google Ads conversion tracking to optimize paid search campaigns and Google Analytics 4 to understand overall website behavior and traffic sources. If running Facebook or Instagram ads, Meta Pixel tracking is also required (Clicks Geek).

Google Tag Manager implementation. Rather than adding tracking codes directly to your website — which creates a dependency on developers for every future change — GTM provides a centralized management layer that makes tracking infrastructure maintainable and auditable over time.

Cross-platform data integration. The goal is a single, reliable source of truth that reconciles what ad platforms report against what your CRM, your website analytics, and your actual revenue records show. Tracking systems drift over time: integrations break, tracking codes get removed during website updates, and data discrepancies creep in. The difference between accurate tracking and flawed tracking is the difference between profitable growth and expensive mistakes (Cometly).

Dashboard and reporting construction. Reports need to be built before the measurement solution goes live, not after. Recreating essential reports in the new platform before cutover is critical. If your team relies on a weekly performance report showing spend, conversions, and ROAS by channel, that report needs to exist in the new platform before the switch is made (Cometly).

A critical step in this phase that many implementations skip: parallel tracking. Running your old and new measurement systems simultaneously for two to four weeks, then comparing the data they generate, is the only reliable way to validate that your new setup is capturing what it claims to capture before you make it your single source of truth.
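As a rough illustration of that comparison, the sketch below lines up daily conversion counts from the old and new systems and flags days where they diverge by more than a chosen tolerance. The 5% tolerance, the dates, and the counts are all assumptions; pick a threshold that reflects how much noise you expect between your two setups.

```python
def compare_parallel(old_counts, new_counts, tolerance=0.05):
    """Flag days where the new system deviates from the old one.

    old_counts / new_counts: dicts mapping a day label to a conversion count.
    tolerance: maximum acceptable relative difference (assumed 5% here).
    Returns a list of (day, old, new, relative_difference) tuples.
    """
    flagged = []
    for day in sorted(set(old_counts) | set(new_counts)):
        old = old_counts.get(day, 0)
        new = new_counts.get(day, 0)
        baseline = max(old, 1)  # avoid dividing by zero on empty days
        diff = abs(new - old) / baseline
        if diff > tolerance:
            flagged.append((day, old, new, round(diff, 2)))
    return flagged


# Illustrative data only.
old_system = {"2024-03-01": 40, "2024-03-02": 38, "2024-03-03": 42}
new_system = {"2024-03-01": 41, "2024-03-02": 25, "2024-03-03": 42}

flagged = compare_parallel(old_system, new_system)
print(flagged)  # [('2024-03-02', 38, 25, 0.34)]
```

A flagged day does not say which system is wrong. It marks where to look, and the answer usually comes from checking both against CRM or billing records.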

Phase 3: Validation and Calibration (Weeks 4–8)

Deploying tracking is not the same as having reliable data. This phase is where the system is stress-tested against reality.

For the first month, review your conversion data weekly. Watch for discrepancies between what your platforms report and the actual leads you receive. If Google Ads shows 20 conversions but you only received 12 actual leads, you likely have a tracking issue: maybe form spam is triggering conversions, or your thank-you page is accessible without form submission (Clicks Geek).

Validation means cross-referencing your measurement system against your ground truth — your CRM records, your billing system, your actual inbound lead volume. If the numbers reconcile, you have a reliable foundation. If they don't, the discrepancy is diagnostic information that tells you exactly where the system is breaking down.
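One way to turn a discrepancy into diagnostic information is to reconcile at the record level rather than comparing totals. The sketch below assumes both the tracking export and the CRM expose a shared lead identifier, which is a hypothetical setup; in practice you may have to match on email address or timestamp instead.

```python
def reconcile(tracked_ids, crm_ids):
    """Compare lead IDs seen by tracking with lead IDs recorded in the CRM."""
    tracked, crm = set(tracked_ids), set(crm_ids)
    return {
        "matched": sorted(tracked & crm),
        # Tracked but absent from the CRM: possible spam, test submissions,
        # or a failing CRM integration.
        "tracked_only": sorted(tracked - crm),
        # In the CRM but never tracked: possible missing or broken tags.
        "crm_only": sorted(crm - tracked),
    }


# Illustrative IDs only.
result = reconcile(
    tracked_ids=["L1", "L2", "L3", "L4"],
    crm_ids=["L1", "L2", "L5"],
)
print(result["matched"])       # ['L1', 'L2']
print(result["tracked_only"])  # ['L3', 'L4']
print(result["crm_only"])      # ['L5']
```

The two unmatched lists point in different directions: `tracked_only` suggests over-counting on the tracking side, while `crm_only` suggests conversions the tracking never saw.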

This phase is also when attribution model decisions get made and tested. Most businesses start with last-click attribution because it's the default, and most businesses that look closely at their data discover that last-click is systematically misrepresenting which channels are driving growth. Comparing first-touch, last-touch, linear, and time-decay attribution reveals whether your marketing mix is weighted toward awareness or conversion activities (Adobe).
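To show how differently these four models can credit the same customer journey, here is a simplified sketch. The journey, the channel names, and the seven-day half-life used for time decay are assumptions for illustration; analytics platforms define time decay in their own ways, so treat this as a conceptual comparison rather than a reproduction of any vendor's model.

```python
def attribute(touchpoints, model, half_life_days=7.0):
    """Split one conversion's credit across channels.

    touchpoints: list of (channel, days_before_conversion), oldest first.
    model: 'first_touch', 'last_touch', 'linear', or 'time_decay'.
    half_life_days: assumed half-life for the time-decay weighting.
    """
    n = len(touchpoints)
    if n == 0:
        return {}
    if model == "first_touch":
        weights = [1.0] + [0.0] * (n - 1)
    elif model == "last_touch":
        weights = [0.0] * (n - 1) + [1.0]
    elif model == "linear":
        weights = [1.0 / n] * n
    elif model == "time_decay":
        # Each touch is worth half as much for every half-life it precedes
        # the conversion by; weights are then normalized to sum to 1.
        raw = [0.5 ** (days / half_life_days) for _, days in touchpoints]
        total = sum(raw)
        weights = [r / total for r in raw]
    else:
        raise ValueError(f"unknown model: {model}")

    credit = {}
    for (channel, _), weight in zip(touchpoints, weights):
        credit[channel] = credit.get(channel, 0.0) + weight
    return credit


# Illustrative journey: social ad two weeks out, organic visit one week out,
# paid search click on the day of conversion.
journey = [("paid_social", 14), ("organic_search", 7), ("paid_search", 0)]

for model in ("first_touch", "last_touch", "linear", "time_decay"):
    shares = attribute(journey, model)
    print(model, {channel: round(share, 2) for channel, share in shares.items()})
```

In this example, last-touch gives paid search all of the credit, while linear gives it a third. If channel rankings change materially between models, that is a sign your default model is shaping budget decisions more than the underlying behavior is.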

The output of this phase is not a finished measurement system — it's a validated one. There's a significant difference. A finished system looks complete. A validated system has been proven to accurately reflect reality, which is the only version worth making decisions from.

Phase 4: Early Insights and Team Adoption (Months 2–3)

Once the technical foundation is validated, the focus shifts from building the system to using it. This transition is where many measurement implementations stall — not because the data is bad, but because the organization hasn't changed its habits around how decisions get made.

While some initiatives generate immediate impact, most strategic marketing efforts require 60 to 90 days to demonstrate significant results (KEO Marketing).

At this stage, you have enough reliable data to start making directional decisions. You can see which channels are generating leads at acceptable costs and which are generating clicks that never convert. You can identify landing pages that are bleeding traffic without capturing it. You can see whether your paid search is actually driving the conversions the platform claims — or whether last-click attribution has been giving it credit for customers who were already going to convert through organic or direct.

Over 60% of leading marketers work with cross-functional peers including finance and analytics teams to design experiments and align on testing timelines and success factors (BCG). The measurement solution only delivers its full value when the data it produces is flowing into decisions across the organization, not sitting in a dashboard that only the marketing team sees.

This is also the phase where the discipline of measurement culture either takes root or quietly dies. Teams that treat weekly data reviews as optional, that override measurement insights with gut feel, or that use dashboards as reporting theater rather than decision tools will extract a fraction of the value their measurement infrastructure is capable of providing.

Phase 5: Mature Measurement and Compounding Insight (Months 3–12+)

The most valuable outputs of a well-implemented measurement solution don't arrive in the first few weeks. They arrive after the system has accumulated enough data to reveal patterns that no single week or month of data can surface.

Approximately 65% of businesses are expected to transition from intuition-based to data-driven decision-making by 2026 (Funnel). The companies making that transition successfully are the ones that built their measurement foundations early enough to have 6, 12, and 24 months of reliable, comparable data: the kind of longitudinal dataset that reveals seasonality, attribution patterns across long sales cycles, and the true relationship between brand investment and performance outcomes.

For businesses with longer sales cycles, such as B2B companies, high-ticket service businesses, and those selling complex purchases, this timeline extends further. Leading marketers run marketing mix models monthly, use incrementality results to establish ground truths and calibrate models, and incorporate brand health metrics in nested models. AI-powered predictive modeling then turns the marketing mix model into a proactive, real-time optimization tool (BCG).

The compounding value of mature measurement is what most businesses underestimate when evaluating whether to invest in a proper solution. A measurement system that has been running reliably for 18 months doesn't just tell you what happened last week — it gives you a verified understanding of how your entire marketing system behaves across seasons, channels, and budget levels. That understanding is the foundation for every budget allocation decision, every channel investment thesis, and every test you design to improve performance going forward.

What Accelerates the Timeline — and What Extends It

Several factors meaningfully affect how quickly a measurement implementation delivers reliable, actionable insights.

Clean existing data. If your current tracking has been running reasonably well, the audit and calibration phases move faster. If your existing data is significantly corrupted — misconfigured events, inconsistent naming conventions, broken integrations — expect more time in the validation phase before the foundation is trustworthy.

Organizational alignment. The measurement battle is 70% people and processes. Marketing teams that involve finance, analytics, and leadership from the start reduce the time it takes to turn measurement outputs into organizational decisions (BCG). When measurement lives only in the marketing team, insights that require cross-functional action, such as budget reallocation, CRM integration, or product pricing, move slowly.

Sales cycle length. A business that closes deals in 24 hours gets actionable attribution data much faster than a B2B company with a six-month sales cycle. For longer cycles, the measurement system needs to track the full journey from first touch through closed revenue — which requires CRM integration and patience.

Scope of channels. A business running two marketing channels needs a simpler measurement infrastructure than one running eight channels across paid search, organic, paid social, email, display, video, events, and partnerships. More channels mean more integrations, more attribution complexity, and more time to build and validate.

Internal technical resources. Measurement implementation requires access to your website, your ad platforms, your CRM, and your analytics tools. If developer resources are constrained or internal stakeholders are slow to provide access, timelines extend regardless of how capable your implementation partner is.

A Realistic Summary Timeline

For most businesses implementing a marketing measurement solution from a modest existing baseline:

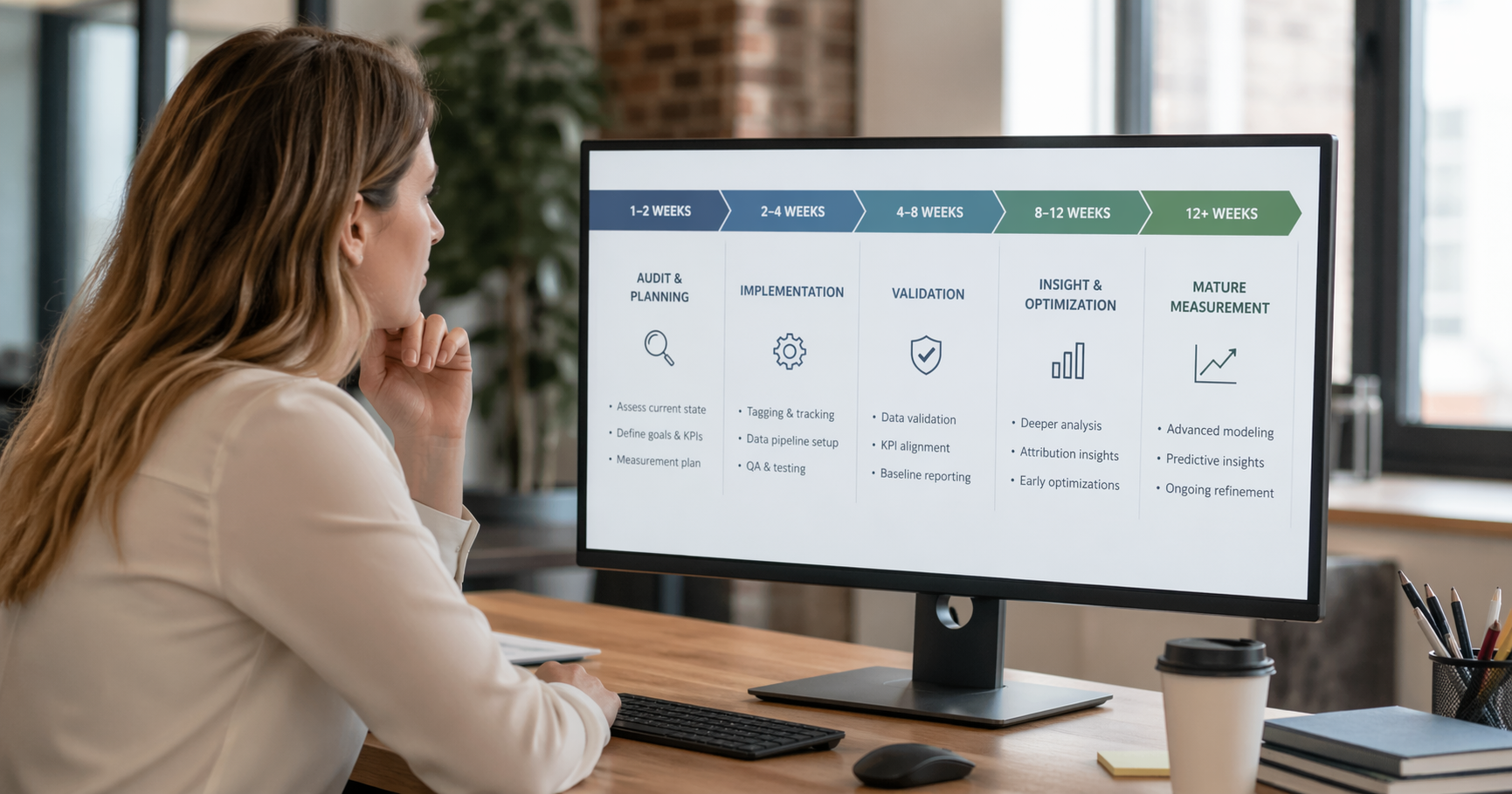

Weeks 1–2: Audit, discovery, and planning. Gap assessment complete, implementation roadmap defined.

Weeks 2–5: Technical build. Tracking infrastructure deployed, integrations configured, dashboards constructed.

Weeks 4–8: Parallel validation. New system running alongside old, data reconciled against ground truth, attribution models tested.

Months 2–3: Early directional insights. Enough reliable data to make channel-level decisions and identify the most urgent optimization opportunities.

Months 3–6: Confident optimization. Data-backed budget allocation decisions, A/B testing framework in place, cross-functional measurement culture beginning to take hold.

Months 6–12+: Mature measurement. Seasonal patterns understood, long-cycle attribution validated, compounding insight driving meaningful improvements in marketing ROI.

The Cost of Waiting

Every month a business operates without reliable marketing measurement is a month of marketing spend being allocated on the basis of incomplete, inaccurate, or simply absent data. 55% of US marketers believe a poorly integrated data environment has caused a loss of revenue (Funnel), and that loss compounds. Budget that flows to channels that appear to perform but don't, and away from channels that actually drive revenue but don't get credit for it, is a systematic tax on marketing efficiency that only accurate measurement can eliminate.

The businesses winning in 2025 aren't the ones with the most data; they're the ones using data to make better decisions (PositionMySite). That capability requires investment, discipline, and time. But the businesses that build it early develop a compounding advantage over competitors still guessing.

Ready to Build Marketing Measurement That Actually Works?

At Ritner Digital, we implement marketing measurement solutions that connect every channel, every campaign, and every dollar to real business outcomes — not vanity metrics and platform-reported data that doesn't reconcile with revenue.

Schedule a free measurement audit with Ritner Digital today and let's assess where your current measurement stands — and what it would take to build the foundation your marketing decisions deserve.

Frequently Asked Questions

How long does it take to set up basic conversion tracking?

For a straightforward business with a single website, a few core conversion actions — form submissions, phone calls, purchases — and standard ad platforms like Google Ads and Meta, basic conversion tracking can be configured and verified in one to two weeks. That timeline assumes clean access to your website via Google Tag Manager, no significant technical debt in your existing setup, and prompt availability of the relevant ad platform accounts. More complex environments with multiple domains, CRM integrations, e-commerce tracking, or significant existing data quality issues will take longer, typically four to six weeks for a thorough, validated implementation.

What is the difference between having tracking set up and having reliable data?

Having tracking set up means the technical infrastructure exists to capture events. Having reliable data means that infrastructure has been validated against your ground truth — that what your measurement system reports reconciles with what your CRM, billing system, and actual business records show. Many businesses have tracking set up but not reliable data. Duplicate events, misconfigured conversion actions, attribution model defaults that don't reflect actual customer journeys, and broken integrations between platforms all produce data that looks complete but is actively misleading. The validation phase of a proper implementation — running parallel systems and reconciling outputs against reality — is what converts "tracking set up" into "reliable data."

How much historical data do we need before measurement insights become actionable?

For basic channel-level optimization decisions — which campaigns are generating leads at acceptable costs, which landing pages are converting well, where budget is being wasted — three to four weeks of clean data is often sufficient to start making directional calls. For more sophisticated decisions — understanding seasonality, validating attribution across longer sales cycles, building confidence in budget reallocation recommendations — you typically need three to six months of reliable data. For marketing mix modeling and the kind of compounding insights that reveal true channel contribution across a complex marketing ecosystem, twelve months or more of consistent, validated data produces the most reliable outputs.

Should we fix our existing measurement setup or start fresh?

This depends on the extent and nature of the problems. If your existing GA4 property has moderately misconfigured events, missing conversion actions, and attribution defaults that don't reflect your customer journey, a structured audit and remediation is usually faster and less disruptive than a full rebuild. If your setup has years of data quality debt — naming convention chaos, duplicate properties, broken integrations, conversion events that have been redefined multiple times — a clean-slate rebuild with proper architecture from the start often produces better long-term outcomes than trying to fix compounding problems on a flawed foundation. The audit phase exists precisely to make this determination based on what's actually there, not assumptions.

Do we need a data scientist or analytics engineer to implement marketing measurement?

Not for foundational measurement, but complexity adds requirements quickly. Google Tag Manager, GA4, and standard ad platform conversion tracking can be implemented and maintained by a competent digital marketer or marketing operations professional with strong technical aptitude. Once measurement requirements extend to CRM integration, server-side tracking, custom attribution modeling, marketing mix modeling, or data warehouse connections, dedicated analytics engineering resources become important. The practical guidance is to start with the measurement foundation that your current team can own and maintain, build confidence in that foundation, and expand scope as both the data needs and the organizational capacity to act on more sophisticated insights grow together.

How do we know when our measurement solution is working correctly?

The clearest test is reconciliation: does what your measurement system reports align with what your business records show? If your measurement system reports 45 form submissions last week and your CRM shows 44 new leads, your tracking is working. If your measurement system reports 45 form submissions and your CRM shows 22 new leads, something is broken — either the tracking is double-counting, spam is triggering conversion events, or the CRM integration is failing. Beyond reconciliation, a working measurement solution should be producing insights that change decisions. If your team reviews measurement data weekly but never changes channel allocation, ad strategy, or budget distribution as a result, the measurement is either not reliable enough to act on or not being used as a decision tool — both of which are problems worth diagnosing.

What is the most common mistake businesses make when implementing marketing measurement?

Treating validation as optional. The implementation steps — installing tags, configuring events, building dashboards — are visible and feel like progress. Validation is slower, less visible, and easy to skip when there's pressure to show results. But a measurement system that has not been validated against reality is not a measurement system — it's a confidence machine that produces numbers that may or may not reflect what's actually happening in your marketing. Every significant business decision that gets made on the basis of unvalidated data represents a compounding risk. The businesses that get the most long-term value from measurement investment are the ones that insisted on knowing their data was right before they started using it to make decisions.

Sources: Funnel.io, BCG, KEO Marketing, Cometly, Trackingplan, Clicks Geek, Adobe, Eliya, Incrmntal, Sellforte, Position My Site