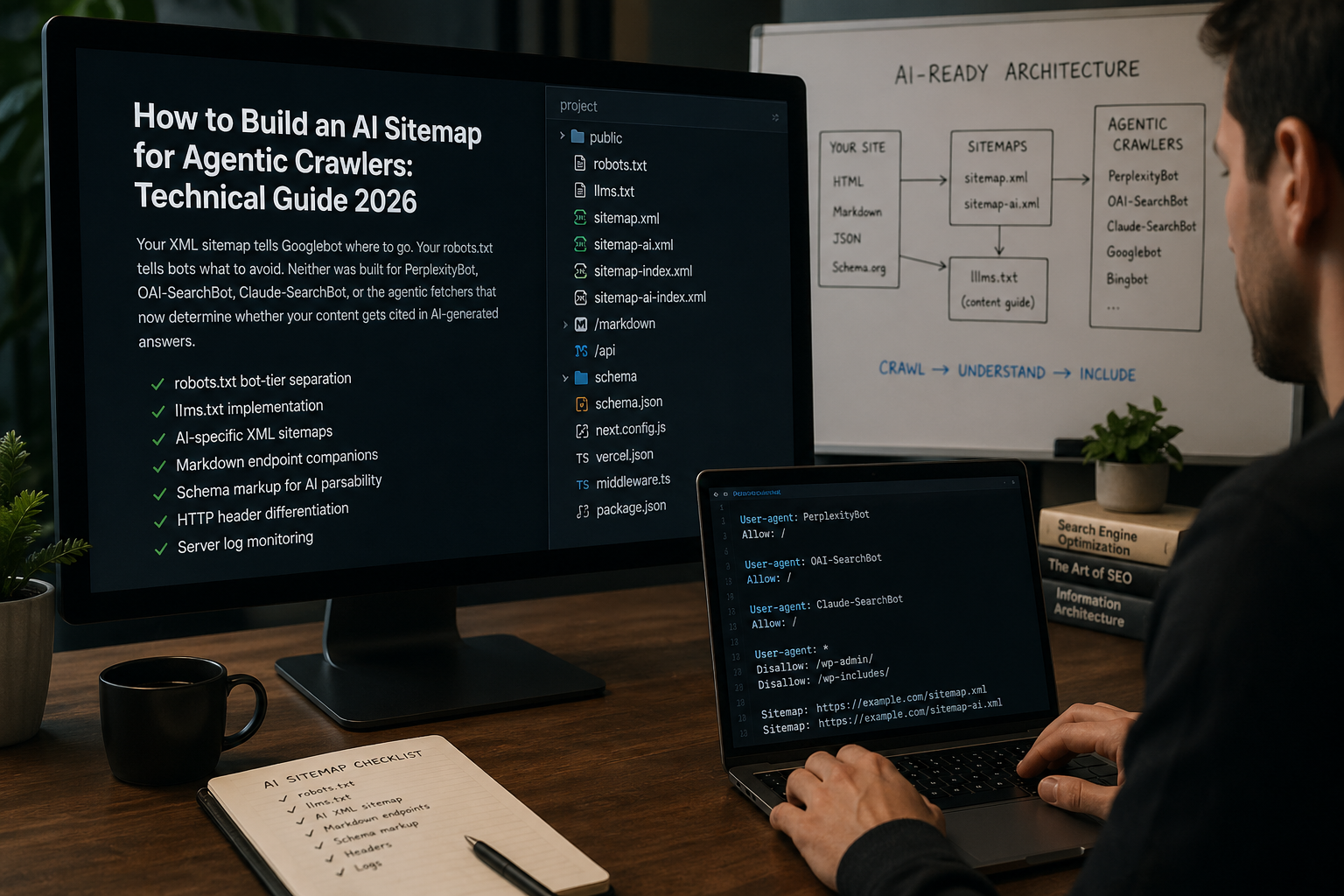

How to Build an AI Sitemap for Agentic Crawlers: A Technical Guide to Signaling Content Structure Beyond Google

Three Crawling Paradigms, One Content Architecture

Traditional web infrastructure was built around a single crawling paradigm: Googlebot and its peers request pages, parse HTML, follow links, and build an index of discrete URLs that a ranking algorithm can evaluate. Your sitemap.xml declares the pages that exist. Your robots.txt defines access rules. Your structured data helps the parser understand what it finds.

That paradigm is still operational. But it now runs in parallel with two additional crawling modes that your existing infrastructure was not designed to serve.

Retrieval-augmented generation (RAG) crawlers — PerplexityBot, OAI-SearchBot, Claude-SearchBot — build real-time or near-real-time indexes used to populate AI-generated answers at inference time. They are optimizing for different signals than Googlebot: content clarity, factual density, structural parsability, and source credibility rather than link equity and keyword relevance.

Agentic browsing (ChatGPT-User, Claude-User, Perplexity-User, and an expanding ecosystem of agent frameworks) fetches content in response to specific user queries, often with limited time to parse complex page structures. HUMAN Security telemetry shows verified AI agent traffic grew more than 6,900% year-over-year in 2025, with over 85% of agentic interactions being product-related.

Your XML sitemap is designed for paradigm one. It has limited utility for paradigms two and three. This guide covers the full technical stack for serving all three — with specific implementation instructions for each layer.

Part One: The Current AI Crawler Landscape

Before you can signal content structure to non-Google bots, you need to know who those bots are, what they do, and how they differ from each other. The landscape has matured significantly in the past 18 months, and the distinctions between bot types now carry real strategic weight.

The Three-Tier Bot Architecture

The major AI platforms have each converged on a similar three-tier crawler architecture, separating training data collection from search indexing from user-triggered retrieval. Understanding which tier each bot belongs to is the prerequisite for any coherent robots.txt strategy.

Anthropic operates a three-bot system: ClaudeBot for training data collection, Claude-SearchBot for search indexing, and Claude-User for real-time user-triggered retrieval. OpenAI runs an equivalent three-bot system: GPTBot for training, OAI-SearchBot for search indexing, and ChatGPT-User for user-initiated retrieval. Perplexity operates a two-bot system: PerplexityBot for indexing and Perplexity-User for real-time retrieval. ALM Corp

Verified current user agent strings by tier:

Training crawlers (feed foundation model weights; 6–12 month lag before appearing in answers):

GPTBot — OpenAI training

ClaudeBot — Anthropic training

Google-Extended — Google generative AI training

Applebot-Extended — Apple generative AI training

CCBot — Common Crawl (feeds many smaller model providers)

Search/index crawlers (build real-time retrieval indexes; directly affect AI answer citations):

OAI-SearchBot — OpenAI search indexing

Claude-SearchBot — Anthropic search indexing

PerplexityBot — Perplexity indexing

User-triggered fetchers (retrieve content in response to a specific user query):

ChatGPT-User — OpenAI user-initiated browsing

Claude-User — Anthropic user-initiated browsing

Perplexity-User — Perplexity user-initiated retrieval

This tier distinction has immediate robots.txt implications that most sites are currently mishandling.
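As a rough illustration of the tier list above, the lookup below maps a declared User-Agent header to a bot name and tier. It is a minimal sketch: the function and table names are made up for this example, matching is a plain substring test on an unverified header, and Google-Extended and Applebot-Extended are left out on the assumption that they act as robots.txt control tokens rather than user agents that show up in requests.

```javascript
// Tier table built from the bot names listed in this section.
// Names such as AI_BOT_TIERS and classifyAiUserAgent are illustrative only.
const AI_BOT_TIERS = [
  { token: 'GPTBot', tier: 'training' },
  { token: 'ClaudeBot', tier: 'training' },
  { token: 'CCBot', tier: 'training' },
  { token: 'OAI-SearchBot', tier: 'search' },
  { token: 'Claude-SearchBot', tier: 'search' },
  { token: 'PerplexityBot', tier: 'search' },
  { token: 'ChatGPT-User', tier: 'user-triggered' },
  { token: 'Claude-User', tier: 'user-triggered' },
  { token: 'Perplexity-User', tier: 'user-triggered' },
];

// Returns { bot, tier } for a recognized AI crawler, or null otherwise.
// The header is self-declared, so treat the result as a hint, not proof.
function classifyAiUserAgent(userAgent) {
  const ua = String(userAgent || '').toLowerCase();
  const match = AI_BOT_TIERS.find(({ token }) => ua.includes(token.toLowerCase()));
  return match ? { bot: match.token, tier: match.tier } : null;
}

console.log(classifyAiUserAgent('Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)'));
```

Keeping the table as data makes the quarterly review a one-line change when a vendor adds or renames a bot.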

Scale and Crawl Volume Context

In March 2025, Cloudflare reported that AI crawlers were generating more than 50 billion requests per day across its network — just under 1% of all web requests Cloudflare observed. By January 2026, Cloudflare's analysis showed Googlebot reaching 1.70x more unique URLs than ClaudeBot, 1.76x more than GPTBot, 2.99x more than Meta-ExternalAgent, 167x more than PerplexityBot, and 714x more than CCBot. No Hacks

The volume skew toward Googlebot remains massive — which is precisely why Googlebot-optimized infrastructure doesn't automatically serve the AI crawler ecosystem well. PerplexityBot crawls at roughly 0.6% of Googlebot's URL volume, but PerplexityBot citations carry direct inference-time value that Googlebot traffic does not.
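The 0.6% figure follows directly from the 167x ratio. The short sketch below converts each quoted multiple into a share of Googlebot's unique URLs, assuming "Nx more" is read as "N times as many"; the object and function names are illustrative.

```javascript
// Multiples quoted above: Googlebot reached N times as many unique URLs as each bot.
const googlebotMultiples = {
  ClaudeBot: 1.70,
  GPTBot: 1.76,
  'Meta-ExternalAgent': 2.99,
  PerplexityBot: 167,
  CCBot: 714,
};

// Convert a multiple into the bot's URL reach as a percentage of Googlebot's.
function shareOfGooglebot(multiple) {
  return 100 / multiple;
}

for (const [bot, multiple] of Object.entries(googlebotMultiples)) {
  console.log(`${bot}: ${shareOfGooglebot(multiple).toFixed(1)}% of Googlebot's unique URLs`);
}
```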

As of July 2025, almost 21% of the top 1,000 websites had explicit rules for GPTBot in their robots.txt files (Paul Calvano). The majority of those rules are blanket disallows: a blunt strategy that was defensible in 2023, when the distinction between training and search crawlers did not exist, but which is now actively harmful to AI citation visibility for most B2B brands.

Part Two: Robots.txt Strategy for AI Crawlers

The foundational layer of any AI content signaling stack is robots.txt — specifically, a robots.txt configuration that makes deliberate, category-by-category decisions about training, search, and retrieval bots rather than treating all AI crawlers as a homogeneous group to block or allow.

The Core Strategic Decision

Blocking training crawlers opts content out of future model training — a privacy and IP protection decision. Blocking search and retrieval crawlers removes the website from AI answers — a visibility decision. Blocking user-triggered fetchers prevents AI assistants from completing user requests on the website — an access decision. One robots.txt rule cannot make all three decisions correctly. No Hacks
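One way to keep the three decisions separate is to hold them as a small policy object and generate the robots.txt groups from it. The sketch below is only an illustration: the policy shape and function name are assumptions, and it handles site-wide allow or block rules only, not per-directory paths.

```javascript
// Bot names grouped by the three tiers described in this guide.
const BOTS_BY_TIER = {
  training: ['GPTBot', 'ClaudeBot', 'Google-Extended', 'Applebot-Extended', 'CCBot'],
  search: ['OAI-SearchBot', 'Claude-SearchBot', 'PerplexityBot'],
  userTriggered: ['ChatGPT-User', 'Claude-User', 'Perplexity-User'],
};

// policy maps each tier to 'allow' or 'block' (hypothetical shape).
// Anything other than 'block' is treated as allow.
function buildRobotsTxt(policy) {
  const groups = [];
  for (const [tier, bots] of Object.entries(BOTS_BY_TIER)) {
    const rule = policy[tier] === 'block' ? 'Disallow: /' : 'Allow: /';
    for (const bot of bots) {
      groups.push(`User-agent: ${bot}\n${rule}`);
    }
  }
  return groups.join('\n\n') + '\n';
}

console.log(buildRobotsTxt({ training: 'block', search: 'allow', userTriggered: 'allow' }));
```

Generating the file from one policy object makes it harder to block a search crawler by accident while intending to block only training crawlers.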

For most B2B brands whose goal is maximizing AI citation presence while maintaining some control over training data use, the appropriate baseline configuration separates these decisions explicitly:

# ============================================

# AI CRAWLER CONFIGURATION

# Last updated: Q2 2026

# Review quarterly — user agent strings change

# ============================================

# --- TRAINING CRAWLERS ---

# Block if you want to opt out of model training

# Blocking these does NOT affect search/citation visibility

User-agent: GPTBot

Disallow: /private/

Disallow: /internal/

Allow: /blog/

Allow: /research/

Allow: /guides/

# Allow core content for training if desired:

# Allow: /

User-agent: ClaudeBot

Disallow: /private/

Allow: /blog/

Allow: /research/

Allow: /

User-agent: Google-Extended

Disallow: /

User-agent: Applebot-Extended

Disallow: /

User-agent: CCBot

Disallow: /

# --- SEARCH / INDEX CRAWLERS ---

# Allow these to maximize AI citation presence

User-agent: OAI-SearchBot

Allow: /

User-agent: Claude-SearchBot

Allow: /

User-agent: PerplexityBot

Allow: /

# --- USER-TRIGGERED FETCHERS ---

# Allow for best AI assistant user experience

User-agent: ChatGPT-User

Allow: /

User-agent: Claude-User

Allow: /

User-agent: Perplexity-User

Allow: /

# --- OTHER AI CRAWLERS ---

User-agent: Amazonbot

Allow: /

User-agent: DuckAssistBot

Allow: /

User-agent: meta-externalagent

Allow: /

# --- SITEMAPS ---

Sitemap: https://yourdomain.com/sitemap.xml

Sitemap: https://yourdomain.com/ai-sitemap.xml

Critical Caveats on User-Triggered Fetchers

Anthropic says all three of its bots honor robots.txt, including Claude-User. OpenAI and Perplexity draw a sharper line for user-initiated fetchers, warning that robots.txt rules may not apply to ChatGPT-User and generally do not apply to Perplexity-User (ALM Corp). This means that for user-triggered retrieval, robots.txt is a signal rather than an enforced access control. If you need to actually restrict access, server-side authentication and rate limiting are the reliable mechanisms.
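To make "rate limiting" concrete, here is a minimal fixed-window limiter keyed by client IP. It is a sketch under simple assumptions (one process, in-memory state, illustrative names and limits), not a production control; a shared store would be needed behind multiple servers.

```javascript
// Fixed-window rate limiter. `now` is injectable so the logic can be tested
// without waiting for real time to pass.
function createRateLimiter({ limit, windowMs, now = Date.now }) {
  const windows = new Map(); // ip -> { start, count }
  return function isAllowed(ip) {
    const t = now();
    const entry = windows.get(ip);
    // Start a fresh window on first sight or once the old window has expired.
    if (!entry || t - entry.start >= windowMs) {
      windows.set(ip, { start: t, count: 1 });
      return true;
    }
    entry.count += 1;
    return entry.count <= limit;
  };
}

// Example: at most 2 requests per minute per IP (values are arbitrary).
const isAllowed = createRateLimiter({ limit: 2, windowMs: 60000 });
console.log(isAllowed('203.0.113.7'), isAllowed('203.0.113.7'), isAllowed('203.0.113.7'));
```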

Verifying Bot Identity

Declared user agent strings can be spoofed. For high-value access decisions, verify bot identity against published IP ranges.

OpenAI, Google, Common Crawl, Perplexity, and Bing publish machine-readable IP range files. For vendors that do not publish IP range files — Anthropic is the notable case — reverse DNS is the fallback. Running dig -x <ip> returns a PTR record; if the hostname belongs to the claimed vendor, a forward lookup on the hostname should return the original IP. No Hacks
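The reverse-then-forward check described above can be sketched with Node's built-in dns module. The function name and the injectable resolver are assumptions made for this example, and the list of acceptable hostname suffixes has to come from each vendor's own documentation; the suffix shown in the usage comment is only a placeholder.

```javascript
const dns = require('dns').promises;

// Forward-confirmed reverse DNS:
// 1. PTR lookup on the IP, 2. check the hostname suffix,
// 3. forward lookup on the hostname must return the original IP.
// `resolver` is injectable so the logic can be exercised without network access.
async function verifyBotIp(ip, allowedSuffixes, resolver = dns) {
  let hostnames;
  try {
    hostnames = await resolver.reverse(ip);
  } catch {
    return false; // no PTR record, or the lookup failed
  }
  for (const host of hostnames) {
    const name = host.toLowerCase();
    if (!allowedSuffixes.some((suffix) => name.endsWith(suffix))) continue;
    try {
      const addresses = await resolver.lookup(name, { all: true });
      if (addresses.some((a) => a.address === ip)) return true;
    } catch {
      // forward lookup failed; try the next hostname, if any
    }
  }
  return false;
}

// Usage (performs real DNS queries; suffix list is a placeholder to fill in):
// verifyBotIp('192.0.2.10', ['.crawler.example']).then(console.log);
```

Suffixes should start with a dot so that a hostname like `evilcrawler.example` does not pass a check meant for `crawler.example` subdomains.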

For log analysis, the following grep pattern surfaces declared AI traffic across major providers:

bash

# Google-Extended is a robots.txt control token, not an HTTP user agent,
# so it never appears in access logs and is omitted from the pattern.
grep -Ei "gptbot|oai-searchbot|chatgpt-user|claudebot|claude-searchbot|claude-user|perplexitybot|perplexity-user|bingbot|amazonbot|duckassistbot" access.log \
  | awk '{print $1, $4, $7, $12}' \
  | sort | uniq -c | sort -rn

Run this monthly. New user agent strings appear without announcement: Cloudflare has catalogued 226 distinct AI crawler user agents (Momentic), most of which your current robots.txt does not address.

Part Three: The llms.txt File — What It Is, What It Isn't, and Whether to Build One

No technical guide to AI content signaling in 2026 can avoid llms.txt — and no honest guide can overstate its current impact. Here is the accurate picture.

What llms.txt Actually Is

llms.txt is a plain Markdown file hosted at /llms.txt on your website that provides a structured summary of your most important content for large language models. Think of it as a recommended reading list for AI: it tells LLMs what your site is about, what content matters most, and where to find it — without the noise of HTML, JavaScript, or ads. Mintlify

Your llms.txt file complements, not replaces, robots.txt or sitemap.xml. Robots.txt controls crawler access. Sitemap.xml maps all indexable pages for search engines. llms.txt curates a shortlist of high-signal pages specifically for large language models. All three can coexist in your root directory without conflict. Bluehost

The simplest accurate analogy: if your site were a library, sitemap.xml is the complete catalogue, robots.txt marks the restricted shelves, and llms.txt is the librarian's curated reading list for a researcher who only has 15 minutes.

The Format

A well-structured llms.txt file follows this pattern:

markdown

# Ritner Digital

> B2B digital marketing agency specializing in AI-era content strategy,

> entity SEO, and search visibility.

## Core Services

- [AI Visibility Audit](https://ritnerdigital.com/services/ai-audit):

Our flagship service analyzing AI citation presence across ChatGPT,

Perplexity, and Google AI Overviews.

- [Entity SEO](https://ritnerdigital.com/services/entity-seo):

Building brand entity signals for AI citation optimization.

- [Content Strategy](https://ritnerdigital.com/services/content):

Proprietary research and AI-citation-optimized content programs.

## Research & Reports

- [The AI Citation Gap](https://ritnerdigital.com/blog/ai-citation-gap):

Analysis of how often #1 Google results are cited by AI models.

- [State of AI Search: Personal Finance 2026](https://ritnerdigital.com/blog/ai-search-personal-finance-2026):

Benchmark report on AI citation patterns in financial content.

## Key Concepts We Cover

- [Entity SEO Guide](https://ritnerdigital.com/blog/what-is-entity-seo):

Complete guide to entity SEO for B2B brands.

- [AI-Influenced Conversions (AIC)](https://ritnerdigital.com/blog/ctr-vs-aic):

New KPI framework for measuring zero-click AI-driven pipeline.

## About

- [About Ritner Digital](https://ritnerdigital.com/about):

Company overview, team, and methodology.

- [Contact](https://ritnerdigital.com/#contact):

Inquiry and consultation requests.

The companion llms-full.txt file, referenced in Anthropic's own documentation, provides full Markdown-rendered content of key pages rather than just a curated index, giving AI systems the complete text without HTML parsing overhead.
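If your page inventory already lives in a CMS or a JSON file, the llms.txt format shown above can be generated rather than hand-edited. The sketch below assumes a simple input shape (title, summary, sections of links) invented for this example, and uses example.com placeholder data.

```javascript
// Render an llms.txt document: H1 title, blockquote summary,
// then one H2 section per group with a Markdown link list.
function renderLlmsTxt({ title, summary, sections }) {
  const lines = [`# ${title}`, '', `> ${summary}`];
  for (const section of sections) {
    lines.push('', `## ${section.heading}`, '');
    for (const link of section.links) {
      lines.push(`- [${link.title}](${link.url}): ${link.description}`);
    }
  }
  return lines.join('\n') + '\n';
}

// Placeholder data for illustration only.
console.log(renderLlmsTxt({
  title: 'Example Co',
  summary: 'Hypothetical company used only for illustration.',
  sections: [
    {
      heading: 'Guides',
      links: [
        { title: 'Getting Started', url: 'https://example.com/guides/start', description: 'Setup walkthrough.' },
      ],
    },
  ],
}));
```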

The Honest Assessment of Current Impact

Over 844,000 websites had implemented llms.txt as of October 2025, according to BuiltWith tracking. Major companies including Anthropic (Claude docs), Cloudflare, and Stripe are using it. Yet not a single major AI platform has officially confirmed they actively read these files. Publii

In July 2025, Google's Gary Illyes stated that Google does not support llms.txt and is not planning to support it. Google's John Mueller compared it to the discredited keywords meta tag (BigCloudy).

John Mueller also stated that none of the AI services have confirmed they extract information via llms.txt, and that server logs barely show AI crawlers requesting the file. LBHQ

However, there are meaningful counterpoints:

In November 2024, Claude listed llms.txt and llms-full.txt in its official documentation, reflecting a clear signal of interest from Anthropic. Mintlify noted 436 visits to their website from AI crawlers after applying llms.txt — most from ChatGPT. LBHQ

Google included llms.txt in its Agent2Agent (A2A) protocol, signaling at least experimental interest despite its public statements (Publii).

Our recommendation: implement llms.txt as low-risk, low-cost infrastructure that takes 30–60 minutes and has no documented downside. Treat it as forward-looking positioning rather than an immediate citation driver. Every few years, a new file at the root of a website becomes table stakes — robots.txt in 1994, sitemap.xml in 2005, security.txt in the 2010s. llms.txt is the current candidate. Adoption is still early, but it is a low-cost signal worth adding. Hyperleap AI

Part Four: The AI Sitemap — Beyond llms.txt

The "AI sitemap" concept extends beyond llms.txt into a broader set of signals that help agentic crawlers understand your content structure, navigate to high-value pages efficiently, and parse what they find accurately. Think of it as a layer cake: llms.txt is the top layer, but the layers beneath it carry more structural weight.

Layer 1: Markdown Endpoint Companions

The core technical insight behind llms.txt is that AI crawlers parse Markdown significantly faster and more accurately than HTML. Your HTML page carries navigation menus, cookie banners, JavaScript payloads, sidebar content, and footer links — all of which consume context window space and parser cycles without contributing to the core content the AI needs.

The solution is serving Markdown-rendered versions of your key pages at parallel endpoints:

yoursite.com/blog/ai-citation-gap — HTML version for browsers

yoursite.com/blog/ai-citation-gap.md — Markdown version for AI crawlers

Implementation varies by stack:

Next.js:

javascript

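// pages/api/markdown/[slug].js
// A React page component cannot set response headers, so this sketch uses an
// API route and writes the Markdown response directly.
// getPostBySlug is assumed to be your own data-access helper (hypothetical path).
import { getPostBySlug } from '../../../lib/posts';

export default async function handler(req, res) {
  const post = await getPostBySlug(req.query.slug);
  if (!post) {
    res.status(404).end('Not found');
    return;
  }
  res.setHeader('Content-Type', 'text/markdown; charset=utf-8');
  res.status(200).end(
    `# ${post.title}\n\nAuthor: ${post.author}\nPublished: ${post.publishedDate}\n\n${post.markdownContent}`
  );
}

// next.config.js: a rewrite along these lines maps the public .md URL to the route.
// async rewrites() {
//   return [{ source: '/blog/:slug.md', destination: '/api/markdown/:slug' }];
// }

Express.js: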

javascript

app.get('/blog/:slug.md', async (req, res) => {

const post = await Post.findBySlug(req.params.slug);

if (!post) return res.status(404).send('Not found');

res.setHeader('Content-Type', 'text/markdown');

res.send(`# ${post.title}

Author: ${post.author.name} (${post.author.credentials})

Published: ${post.publishedAt}

Updated: ${post.updatedAt}

${post.markdownContent}

`);

});

WordPress (functions.php):

php

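// Register the custom query var; without this, get_query_var('format') is always empty.
add_filter('query_vars', function ($vars) {
    $vars[] = 'format';
    return $vars;
});

// Route /my-page.md to the page with format=markdown (use name= instead of pagename= for posts).
// Flush rewrite rules once after adding this (Settings > Permalinks > Save).
add_action('init', function () {
    add_rewrite_rule('^([^/]+)\.md$', 'index.php?pagename=$matches[1]&format=markdown', 'top');
});

add_action('template_redirect', function () {
    if (get_query_var('format') !== 'markdown') {
        return;
    }
    $post = get_queried_object();
    if (!($post instanceof WP_Post)) {
        return;
    }
    header('Content-Type: text/markdown; charset=utf-8');
    echo "# " . get_the_title($post) . "\n\n";
    echo "Author: " . get_the_author_meta('display_name', $post->post_author) . "\n";
    echo "Published: " . get_the_date('Y-m-d', $post) . "\n\n";
    // The 'the_content' filter renders HTML, not Markdown; run the result
    // through an HTML-to-Markdown converter before relying on this endpoint.
    echo apply_filters('the_content', $post->post_content);
    exit;
});

Reference your Markdown endpoints in your llms.txt file so crawlers know they exist: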

markdown

## Research & Reports

- [The AI Citation Gap](https://ritnerdigital.com/blog/ai-citation-gap)

([markdown](https://ritnerdigital.com/blog/ai-citation-gap.md)):

Analysis of how often #1 Google results are cited by AI models.

Layer 2: AI-Specific XML Sitemap

A dedicated AI sitemap — referenced in your robots.txt and separate from your standard sitemap.xml — allows you to curate the pages most valuable for AI citation separately from the complete URL inventory you maintain for Google.

The structural difference from a standard sitemap is the inclusion of AI-relevant metadata: author credentials, content type, data density indicators, and last-substantive-update dates (as distinct from trivial edits that update <lastmod> without changing content substance).

xml

<?xml version="1.0" encoding="UTF-8"?>

<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9"

xmlns:ai="https://ritnerdigital.com/schemas/ai-sitemap/1.0">

<url>

<loc>https://ritnerdigital.com/blog/ai-citation-gap</loc>

<lastmod>2026-03-15</lastmod>

<changefreq>monthly</changefreq>

<priority>0.9</priority>

<ai:content-type>original-research</ai:content-type>

<ai:author-credential>Digital Marketing Strategist</ai:author-credential>

<ai:data-points>47</ai:data-points>

<ai:word-count>4200</ai:word-count>

<ai:primary-claim>Google #1 results cited by AI less than 41% of the time</ai:primary-claim>

<ai:markdown-endpoint>https://ritnerdigital.com/blog/ai-citation-gap.md</ai:markdown-endpoint>

</url>

<url>

<loc>https://ritnerdigital.com/blog/entity-seo-guide</loc>

<lastmod>2026-02-10</lastmod>

<changefreq>monthly</changefreq>

<priority>0.8</priority>

<ai:content-type>comprehensive-guide</ai:content-type>

<ai:author-credential>SEO Specialist</ai:author-credential>

<ai:word-count>6800</ai:word-count>

<ai:primary-claim>Entity SEO framework for B2B AI citation optimization</ai:primary-claim>

<ai:markdown-endpoint>https://ritnerdigital.com/blog/entity-seo-guide.md</ai:markdown-endpoint>

</url>

</urlset>

Reference this sitemap in robots.txt:

Sitemap: https://yourdomain.com/sitemap.xml

Sitemap: https://yourdomain.com/ai-sitemap.xml

Note: no AI platform has officially confirmed that it reads custom sitemap namespace extensions. The <ai:> namespace above is a forward-looking signal, not a confirmed parsing target. The structural value of the AI sitemap lies primarily in curating which pages you point high-priority crawlers to. Treat <priority> and <changefreq> as hints at best: Google has said it ignores both, and <lastmod> is the standard field most likely to be used, provided it is kept accurate.
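Hand-maintaining the AI sitemap invites escaping mistakes, especially in free-text fields such as the primary claim. The sketch below generates the file from a list of entries; the entry shape, function names, and namespace URI are assumptions for illustration, and the ai: elements remain the unconfirmed custom extension described above.

```javascript
// Escape the five XML special characters in text and attribute values.
function escapeXml(value) {
  return String(value)
    .replace(/&/g, '&amp;')
    .replace(/</g, '&lt;')
    .replace(/>/g, '&gt;')
    .replace(/"/g, '&quot;')
    .replace(/'/g, '&apos;');
}

// Each entry: { loc, lastmod, ai: { 'content-type': '...', ... } } (hypothetical shape).
// aiNamespace is whatever URI you chose for the custom ai: prefix.
function renderAiSitemap(entries, aiNamespace) {
  const urls = entries.map((entry) => {
    const aiTags = Object.entries(entry.ai || {})
      .map(([name, value]) => `    <ai:${name}>${escapeXml(value)}</ai:${name}>`);
    return [
      '  <url>',
      `    <loc>${escapeXml(entry.loc)}</loc>`,
      `    <lastmod>${escapeXml(entry.lastmod)}</lastmod>`,
      ...aiTags,
      '  </url>',
    ].join('\n');
  });
  return [
    '<?xml version="1.0" encoding="UTF-8"?>',
    `<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9" xmlns:ai="${escapeXml(aiNamespace)}">`,
    ...urls,
    '</urlset>',
  ].join('\n') + '\n';
}

console.log(renderAiSitemap(
  [{ loc: 'https://example.com/blog/post', lastmod: '2026-03-15', ai: { 'content-type': 'original-research' } }],
  'https://example.com/schemas/ai-sitemap/1.0'
));
```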

Layer 3: Schema Markup Stack for AI Parsability

Schema markup is the layer of the AI content signaling stack with the most verified impact — because it is machine-readable metadata that established AI crawlers already parse when evaluating content credibility and citation worthiness.
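JSON-LD objects like the ones in this section are typically embedded in the page inside a script tag of type application/ld+json. The sketch below shows one way to build and serialize an Article object from post data; the function names and input fields are illustrative assumptions, and only a subset of the Article properties is included.

```javascript
// Serialize a schema.org object into a JSON-LD <script> tag.
// "<" is escaped so a string value containing "</script>" cannot end the tag early.
function jsonLdScriptTag(data) {
  const json = JSON.stringify(data, null, 2).replace(/</g, '\\u003c');
  return `<script type="application/ld+json">\n${json}\n</script>`;
}

// Build a minimal Article object with a Person author (hypothetical input shape).
function articleSchema({ headline, url, datePublished, dateModified, authorName }) {
  return {
    '@context': 'https://schema.org',
    '@type': 'Article',
    headline,
    datePublished,
    dateModified,
    author: { '@type': 'Person', name: authorName },
    mainEntityOfPage: { '@type': 'WebPage', '@id': url },
  };
}

console.log(jsonLdScriptTag(articleSchema({
  headline: 'Example headline',
  url: 'https://example.com/blog/post',
  datePublished: '2026-01-15',
  dateModified: '2026-03-15',
  authorName: 'Jane Smith',
})));
```

Generating the markup from the same record that renders the page keeps dateModified and author data from drifting out of sync with the visible content.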

Beyond standard sitemaps, structured data markup helps AI systems understand your site structure and prioritize valuable pages (Qwairy). The full schema stack for AI citation optimization in 2026 is:

Organization schema (homepage and about page):

json

{

"@context": "https://schema.org",

"@type": "Organization",

"name": "Ritner Digital",

"url": "https://ritnerdigital.com",

"logo": "https://ritnerdigital.com/logo.png",

"description": "B2B digital marketing agency specializing in AI-era content strategy and entity SEO",

"foundingDate": "2019",

"sameAs": [

"https://www.linkedin.com/company/ritnerdigital",

"https://twitter.com/ritnerdigital"

],

"contactPoint": {

"@type": "ContactPoint",

"contactType": "sales",

"url": "https://ritnerdigital.com/#contact"

}

}

Article schema with full author entity (every blog post):

json

{

"@context": "https://schema.org",

"@type": "Article",

"headline": "The AI Citation Gap: Analysis of 1,000 B2B Search Queries",

"datePublished": "2026-01-15",

"dateModified": "2026-03-15",

"author": {

"@type": "Person",

"name": "Jane Smith",

"jobTitle": "Head of SEO Strategy",

"worksFor": {

"@type": "Organization",

"name": "Ritner Digital"

},

"sameAs": "https://www.linkedin.com/in/janesmith-seo"

},

"publisher": {

"@type": "Organization",

"name": "Ritner Digital",

"logo": {

"@type": "ImageObject",

"url": "https://ritnerdigital.com/logo.png"

}

},

"mainEntityOfPage": {

"@type": "WebPage",

"@id": "https://ritnerdigital.com/blog/ai-citation-gap"

},

"citation": [

{

"@type": "CreativeWork",

"name": "2024 Zero-Click Search Study",

"author": "SparkToro",

"url": "https://sparktoro.com/blog/2024-zero-click-search-study"

}

]

}

FAQPage schema (high-value FAQ sections):

json

{

"@context": "https://schema.org",

"@type": "FAQPage",

"mainEntity": [

{

"@type": "Question",

"name": "What is the AI Citation Gap?",

"acceptedAnswer": {

"@type": "Answer",

"text": "The AI Citation Gap refers to the disconnect between a brand's Google search rankings and its visibility in AI-generated answers. A brand can rank #1 on Google and still never be cited by ChatGPT, Perplexity, or Google AI Overviews when a buyer asks the same question."

}

}

]

}

Speakable schema (marks content optimized for voice and AI synthesis):

json

{

"@context": "https://schema.org",

"@type": "WebPage",

"speakable": {

"@type": "SpeakableSpecification",

"cssSelector": [".article-summary", ".key-findings", "h2", "h3"]

},

"url": "https://ritnerdigital.com/blog/ai-citation-gap"

}

Layer 4: HTTP Headers for Crawler Differentiation

Server-side HTTP headers allow you to serve different response metadata to different crawler types — a technique that enables granular control beyond what robots.txt alone can achieve.

Vary header for content negotiation:

Vary: User-Agent, Accept

This signals to caches that responses may differ based on the requesting agent, enabling you to serve Markdown responses to identified AI crawlers and HTML responses to browsers.
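At the application layer, the same decision can be written as a small pure function that inspects the request headers and returns the format plus the response headers to attach. This is a sketch: the function name and return shape are assumptions, and the crawler pattern simply mirrors the bots named in this guide.

```javascript
// Declared AI crawler user agents that should receive Markdown.
const AI_CRAWLER_PATTERN = /GPTBot|OAI-SearchBot|ClaudeBot|Claude-SearchBot|PerplexityBot/i;

// headers: an object with lowercase keys, as Node's http module provides.
// Markdown is chosen when the client asks for it via Accept or declares
// itself as one of the listed crawlers; everything else gets HTML.
function negotiate(headers) {
  const accept = String(headers['accept'] || '').toLowerCase();
  const userAgent = String(headers['user-agent'] || '');
  const wantsMarkdown = accept.includes('text/markdown') || AI_CRAWLER_PATTERN.test(userAgent);
  return {
    format: wantsMarkdown ? 'markdown' : 'html',
    responseHeaders: {
      'Content-Type': wantsMarkdown ? 'text/markdown; charset=utf-8' : 'text/html; charset=utf-8',
      // Tell caches the body depends on both request headers.
      Vary: 'User-Agent, Accept',
    },
  };
}

console.log(negotiate({ 'user-agent': 'Mozilla/5.0 (compatible; PerplexityBot/1.0)' }));
```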

X-Robots-Tag for page-level crawler control (more granular than robots.txt):

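# Default behavior: no indexing or serving restrictions for this page
X-Robots-Tag: all

# Request an opt-out from AI training for this page.
# noai and noimageai are non-standard directives; crawlers are not obliged to honor them
X-Robots-Tag: noai, noimageai

# Equivalent to "noindex, nofollow"; applies to every crawler that honors the header, search engines included
X-Robots-Tag: none

Nginx implementation for serving Markdown to AI crawlers: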

nginx

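# In the http {} context: flag declared AI crawlers by user agent
map $http_user_agent $md_suffix {
    default "";
    "~*(GPTBot|OAI-SearchBot|ClaudeBot|Claude-SearchBot|PerplexityBot)" ".md";
}

# In the server {} context: serve .md files as Markdown.
# default_type is enough here; a second add_header Content-Type would duplicate the header.
location ~* \.md$ {
    default_type text/markdown;
    charset utf-8;
    charset_types text/markdown;
    add_header Vary "User-Agent, Accept";
}

location /blog/ {
    add_header Vary "User-Agent, Accept";
    # try_files is not allowed inside "if", so AI crawlers are rewritten to the
    # .md companion instead. Only extensionless URLs are rewritten, and the
    # request returns 404 if no .md file exists for that page.
    if ($md_suffix) {
        rewrite ^(/blog/[^.]*[^./])$ $1.md last;
    }
    try_files $uri $uri/ =404;
}

Part Five: Content Architecture for Agentic Parsability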

Technical signals are necessary but not sufficient. The content itself needs to be structured in ways that agentic crawlers can efficiently parse, understand, and represent accurately. This section covers the content architecture decisions that complement your technical stack.

The Context Window Problem

Agentic crawlers — particularly user-triggered fetchers operating in real time — face a fundamental constraint: they have a limited context window. A dense, JavaScript-heavy page with 8,000 words of content, navigation, sidebar widgets, and footer links may fill a significant portion of that context window before the crawler reaches your core arguments.

The structural implication: put your most citable content first, clearly labeled, in the most parsable format available.

In practice this means:

Lead with a summary block that contains your primary claim, your key data points, and your source citations — before any narrative setup

Use <h2> and <h3> headings as genuine semantic labels, not keyword anchors — crawlers use heading structure to build document outlines

Keep sentences that contain citable claims short and self-contained — a claim that spans three dependent clauses is harder for a crawler to extract accurately than the same claim in a single direct sentence

Avoid burying key statistics in the middle of long paragraphs — put data points at sentence starts or in dedicated callout blocks

The Semantic HTML Layer

Semantic HTML elements provide parsability signals that AI crawlers use to weight content importance:

html

<!-- Mark your primary claim explicitly -->

<article itemscope itemtype="https://schema.org/Article">

<!-- Key findings as a distinct, labeled section -->

<section aria-label="Key Findings">

<h2>Key Findings</h2>

<ul>

<li>The #1 Google result is cited by AI models <strong>38% of the time</strong> in personal finance</li>

<li>Educational finance queries now trigger AI Overviews <strong>91% of the time</strong></li>

</ul>

</section>

<!-- Author entity with machine-readable metadata -->

<address itemprop="author" itemscope itemtype="https://schema.org/Person">

<span itemprop="name">Jane Smith</span>,

<span itemprop="jobTitle">Head of SEO Strategy</span>,

<a itemprop="sameAs" href="https://linkedin.com/in/janesmith-seo">LinkedIn</a>

</address>

<!-- Publication and update dates machine-readable -->

<time itemprop="datePublished" datetime="2026-01-15">January 15, 2026</time>

<time itemprop="dateModified" datetime="2026-03-15">Updated March 15, 2026</time>

</article>

Inline Citation Markup

One of the strongest parsability signals you can add is explicit inline citation markup — making your sourcing machine-readable rather than just visible in the prose:

html

<p>

Zero-click search has reached a tipping point:

<span class="citation"

data-source="SparkToro"

data-year="2024"

data-url="https://sparktoro.com/blog/2024-zero-click-search-study">

58.5% of U.S. Google searches now end without a click to the open web

</span>.

</p>

This is not a current schema standard, but it creates a structured, machine-readable citation signal that forward-looking crawlers can parse as corroborating evidence of content credibility.

Part Six: Monitoring and Verification

Building the AI sitemap stack is not a one-time implementation. The crawler landscape changes frequently, new user agent strings appear without announcement, and the relationship between your signals and AI citation behavior needs ongoing monitoring to be understood and improved.

Server Log Analysis Setup

The most reliable source of data on AI crawler behavior is your own server logs. Set up a dedicated parsing script that runs weekly:

bash

#!/bin/bash
# ai-crawler-report.sh
# Run weekly via cron: 0 6 * * 1 /path/to/ai-crawler-report.sh

LOG_FILE="/var/log/nginx/access.log"
REPORT_DATE=$(date +%Y-%m-%d)
# Google-Extended is a robots.txt token, not a user agent, so it is not matched here
AI_BOTS="gptbot|oai-searchbot|chatgpt-user|claudebot|claude-searchbot|claude-user|perplexitybot|perplexity-user|duckassistbot|amazonbot"

echo "AI Crawler Report - Week of $REPORT_DATE"
echo "========================================="

# Identify AI crawler traffic by user agent
echo ""
echo "AI CRAWLER HITS BY BOT:"
grep -Ei "$AI_BOTS" "$LOG_FILE" \
  | awk '{
      line = tolower($0)   # awk has no /regex/i flag, so lowercase the line first
      bot = "Other"        # reset per line so an earlier match does not carry over
      if (line ~ /gptbot/) bot = "GPTBot"
      else if (line ~ /oai-searchbot/) bot = "OAI-SearchBot"
      else if (line ~ /chatgpt-user/) bot = "ChatGPT-User"
      else if (line ~ /claude-searchbot/) bot = "Claude-SearchBot"
      else if (line ~ /claudebot/) bot = "ClaudeBot"
      else if (line ~ /claude-user/) bot = "Claude-User"
      else if (line ~ /perplexitybot/) bot = "PerplexityBot"
      else if (line ~ /perplexity-user/) bot = "Perplexity-User"
      else if (line ~ /duckassistbot/) bot = "DuckAssistBot"
      else if (line ~ /amazonbot/) bot = "Amazonbot"
      print bot
    }' \
  | sort | uniq -c | sort -rn

echo ""
echo "TOP 20 PAGES CRAWLED BY AI BOTS:"
grep -Ei "$AI_BOTS" "$LOG_FILE" \
  | awk '{print $7}' \
  | grep -Ev '^/$|\.ico|\.css|\.js|\.png|\.jpg|robots\.txt|sitemap' \
  | sort | uniq -c | sort -rn \
  | head -20

echo ""
echo "LLMS.TXT REQUESTS:"
grep "llms" "$LOG_FILE" | awk '{print $7, $9}' | sort | uniq -c | sort -rn

AI Citation Monitoring

Set up a monthly manual audit across the four major AI platforms. For each priority keyword:

bash

# Document the query, platform, date, and cited sources

# Format: date | platform | query | cited_urls

2026-04-25 | ChatGPT | "what is entity seo" | moz.com/blog, searchenginejournal.com, ritnerdigital.com

2026-04-25 | Perplexity | "what is entity seo" | backlinko.com, ahrefs.com/blog

2026-04-25 | Google AIO | "what is entity seo" | searchengineland.com, moz.com/blog

Track this monthly. A spreadsheet with citation presence marked as 0/1 per query per platform gives you a citation rate baseline you can measure improvements against as you implement the stack above.
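If the audit log is kept in the pipe-delimited format shown above, the citation rate per platform can be computed with a few lines of code instead of a spreadsheet. The sketch below assumes one audited query per line, no pipe characters inside queries, and a domain match on the start of each cited URL; the function name is illustrative.

```javascript
// Parse "date | platform | query | cited_urls" lines and return, per platform,
// the share of audited queries whose citations include the given domain.
function citationRates(logText, domain) {
  const totals = new Map(); // platform -> { cited, total }
  for (const line of logText.split('\n')) {
    const trimmed = line.trim();
    if (!trimmed || trimmed.startsWith('#')) continue; // skip blanks and comments
    const parts = trimmed.split('|').map((p) => p.trim());
    if (parts.length < 4) continue; // skip malformed rows
    const [, platform, , citedUrls] = parts;
    const cited = citedUrls.split(',').some((u) => u.trim().startsWith(domain));
    const entry = totals.get(platform) || { cited: 0, total: 0 };
    entry.total += 1;
    if (cited) entry.cited += 1;
    totals.set(platform, entry);
  }
  const rates = {};
  for (const [platform, { cited, total }] of totals) {
    rates[platform] = cited / total;
  }
  return rates;
}

const sampleLog = [
  '# Format: date | platform | query | cited_urls',
  '2026-04-25 | ChatGPT | "what is entity seo" | moz.com/blog, searchenginejournal.com, ritnerdigital.com',
  '2026-04-25 | Perplexity | "what is entity seo" | backlinko.com, ahrefs.com/blog',
  '2026-04-25 | Google AIO | "what is entity seo" | searchengineland.com, moz.com/blog',
].join('\n');

console.log(citationRates(sampleLog, 'ritnerdigital.com'));
```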

Validating Your Implementation

Verify each layer of your implementation independently:

bash

# Verify llms.txt is accessible and returns correct content type

curl -I https://yourdomain.com/llms.txt

# Should return: Content-Type: text/plain or text/markdown

# Should return: HTTP/2 200

# Verify robots.txt is correctly configured

curl https://yourdomain.com/robots.txt | grep -A2 -i "claudebot\|perplexitybot\|oai-searchbot"

# Verify Markdown endpoints serve correctly

curl -I https://yourdomain.com/blog/your-post.md

# Should return: Content-Type: text/markdown

# Should return: HTTP/2 200

# Verify schema markup is valid

# Use Google's Rich Results Test: https://search.google.com/test/rich-results

# Use Schema.org validator: https://validator.schema.org/

# Check AI sitemap is accessible

curl -I https://yourdomain.com/ai-sitemap.xml

# Should return: Content-Type: application/xml

# Should return: HTTP/2 200

Implementation Priority Order

Given the varying levels of confirmed impact across these layers, here is the recommended implementation sequence ranked by verified utility versus implementation cost:

Implement immediately (high confidence, low cost):

1. Robots.txt audit and bot-tier separation: 2 hours, verified impact on search crawler access

2. Schema markup stack (Organization, Article, Person, FAQPage): 4–8 hours, verified correlation with AI citation rates

3. Server log AI crawler monitoring: 1 hour setup, ongoing intelligence value

Implement in sprint 2 (moderate confidence, moderate cost):

4. llms.txt file: 1–2 hours, low risk, forward-looking positioning

5. AI-specific sitemap with priority curation: 2–4 hours, signals high-value content to all crawlers

Implement in sprint 3 (experimental, higher cost):

6. Markdown endpoint companions for top 10–20 content assets: 8–16 hours depending on stack

7. HTTP header differentiation for crawler type: 2–4 hours, Nginx/Apache configuration

8. Inline citation markup on key content: ongoing content production discipline

Want a technical audit of your current AI crawler configuration and content architecture?

Request your AI Visibility Audit → ritnerdigital.com/#contact

Frequently Asked Questions

Does llms.txt actually work?

Currently, its confirmed impact is limited. Major AI platforms have not officially stated they read llms.txt files. However, Anthropic's documentation references it, Google has included it in its Agent2Agent (A2A) protocol experiments, and server log data from early adopters shows some AI crawler traffic to the file. Implement it as low-risk infrastructure, not as a primary citation strategy.

Should I block GPTBot?

It depends on your goals. Blocking GPTBot opts you out of OpenAI training data collection — a privacy and IP decision — but does not affect ChatGPT search answers, which are driven by OAI-SearchBot and ChatGPT-User. If AI citation visibility is a goal, allow OAI-SearchBot and ChatGPT-User regardless of your GPTBot decision.

How do I tell training crawlers from search crawlers in robots.txt?

Use explicit user-agent-specific entries for each bot rather than wildcard rules. Training crawlers (GPTBot, ClaudeBot, Google-Extended) and search crawlers (OAI-SearchBot, Claude-SearchBot, PerplexityBot) have distinct user agent strings and should have distinct robots.txt entries. A single User-agent: * rule cannot make this distinction.

Will Markdown endpoints hurt my Google rankings?

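No, if implemented correctly. Either serve HTML to Googlebot and Markdown only to identified AI crawlers, or publish Markdown at separate .md URLs and point each one back at its HTML page with a Link: <https://yourdomain.com/blog/your-post>; rel="canonical" HTTP response header, since a Markdown response has no <head> in which to place a <link rel="canonical"> tag. Keep the self-referencing <link rel="canonical"> on the HTML pages to prevent any indexing confusion.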

How do I verify that AI crawlers are actually visiting my site?

Server log analysis is the most reliable method. Filter your access logs for known AI user agent strings using the grep pattern in Part Six. Check weekly — crawler visit patterns are irregular and may not appear in daily snapshots.

What is the most important single thing I can do for AI crawler accessibility?

Audit your robots.txt to ensure you are not accidentally blocking search and retrieval crawlers (OAI-SearchBot, Claude-SearchBot, PerplexityBot) while intending to block only training crawlers (GPTBot, ClaudeBot). This is the most common configuration error and the one with the most direct impact on AI citation visibility.

How often should I update my llms.txt file?

Update it whenever you publish significant new content — particularly original research, comprehensive guides, or benchmark reports that represent your highest-value citation assets. Monthly review is sufficient for most sites; quarterly is the minimum.

References

No Hacks. (2026, April). The AI User Agent Landscape in 2026: A Complete Reference. https://nohacks.co/blog/ai-user-agents-landscape-2026

Search Engine Journal. (2026, February). Anthropic's Claude Bots Make Robots.txt Decisions More Granular. https://www.searchenginejournal.com/anthropics-claude-bots-make-robots-txt-decisions-more-granular/568253/

ALM Corp. (2026, February). ClaudeBot, Claude-User & Claude-SearchBot: Anthropic's Three-Bot Framework. https://almcorp.com/blog/anthropic-claude-bots-robots-txt-strategy/

Scrunch. (2025). Guide to AI User Agents. https://scrunch.com/resources/guides/guide-to-ai-user-agents/

Paul Calvano. (2025, August). AI Bots and Robots.txt. https://paulcalvano.com/2025-08-21-ai-bots-and-robots-txt/

HUMAN Security. (2026, January). The Ultimate List of Crawlers and Known Bots for 2026. https://www.humansecurity.com/learn/blog/crawlers-list-known-bots-guide/

Mintlify. (2026, March). What is llms.txt? Breaking Down the Skepticism. https://www.mintlify.com/blog/what-is-llms-txt

Get Publii. (2026, January). The Complete Guide to llms.txt: Should You Care About This AI Standard? https://getpublii.com/blog/llms-txt-complete-guide.html

Search Engine Land. (2025, July). Meet llms.txt, a Proposed Standard for AI Website Content Crawling. https://searchengineland.com/llms-txt-proposed-standard-453676

Qwairy. (2025). AI Crawlers & Technical Optimization: The Ultimate Guide. https://www.qwairy.co/guides/complete-guide-to-robots-txt-and-llms-txt-for-ai-crawlers

Momentic Marketing. (2025). List of Top AI Search Crawlers + User Agents. https://momenticmarketing.com/blog/ai-search-crawlers-bots

BotRank AI. (2025). Robots.txt Guide for AI Ranking. https://www.botrank.ai/technical-doc/robots-txt

Ritner Digital is a B2B digital marketing agency specializing in AI-era content strategy, entity SEO, and search visibility. We help B2B brands build the technical and content infrastructure that AI models cite accurately and consistently.