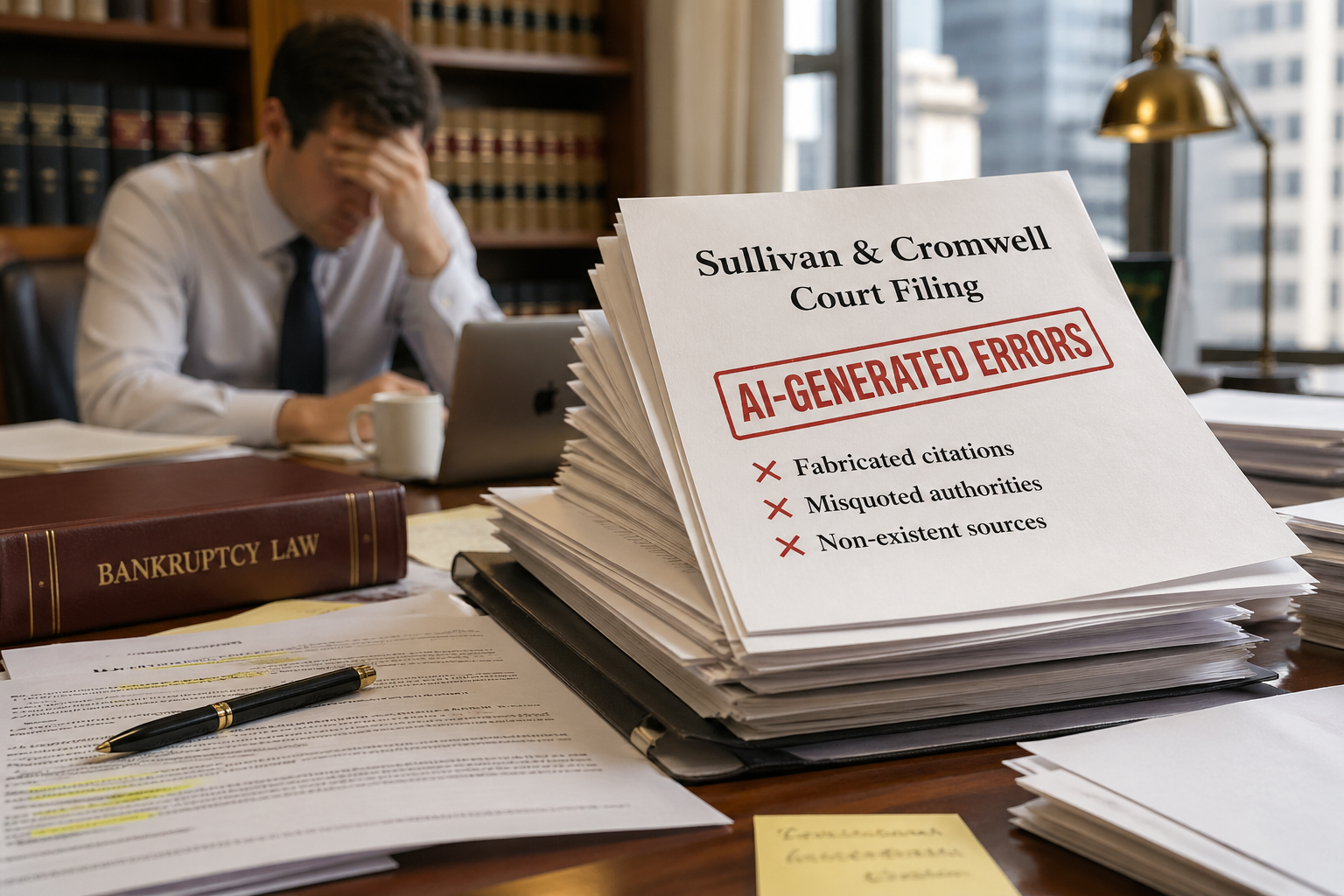

What the Sullivan & Cromwell AI Disaster Tells Us About B2B Adoption — and Why Trust, Not Technology, Is the Real Bottleneck

Sullivan & Cromwell advises OpenAI on the safe and ethical deployment of AI. Last week the firm filed a bankruptcy court brief containing more than 40 AI-generated errors: fabricated citations, misquoted authorities, and non-existent sources. The firm's own safeguards failed to catch them. Opposing counsel did. If this can happen at one of the most resource-rich law firms on the planet, it can happen anywhere, and the data on B2B AI adoption suggests most organizations are not as protected as they think. Here's what the incident reveals about the real bottleneck in enterprise AI adoption: not the technology, but the trust infrastructure around it.

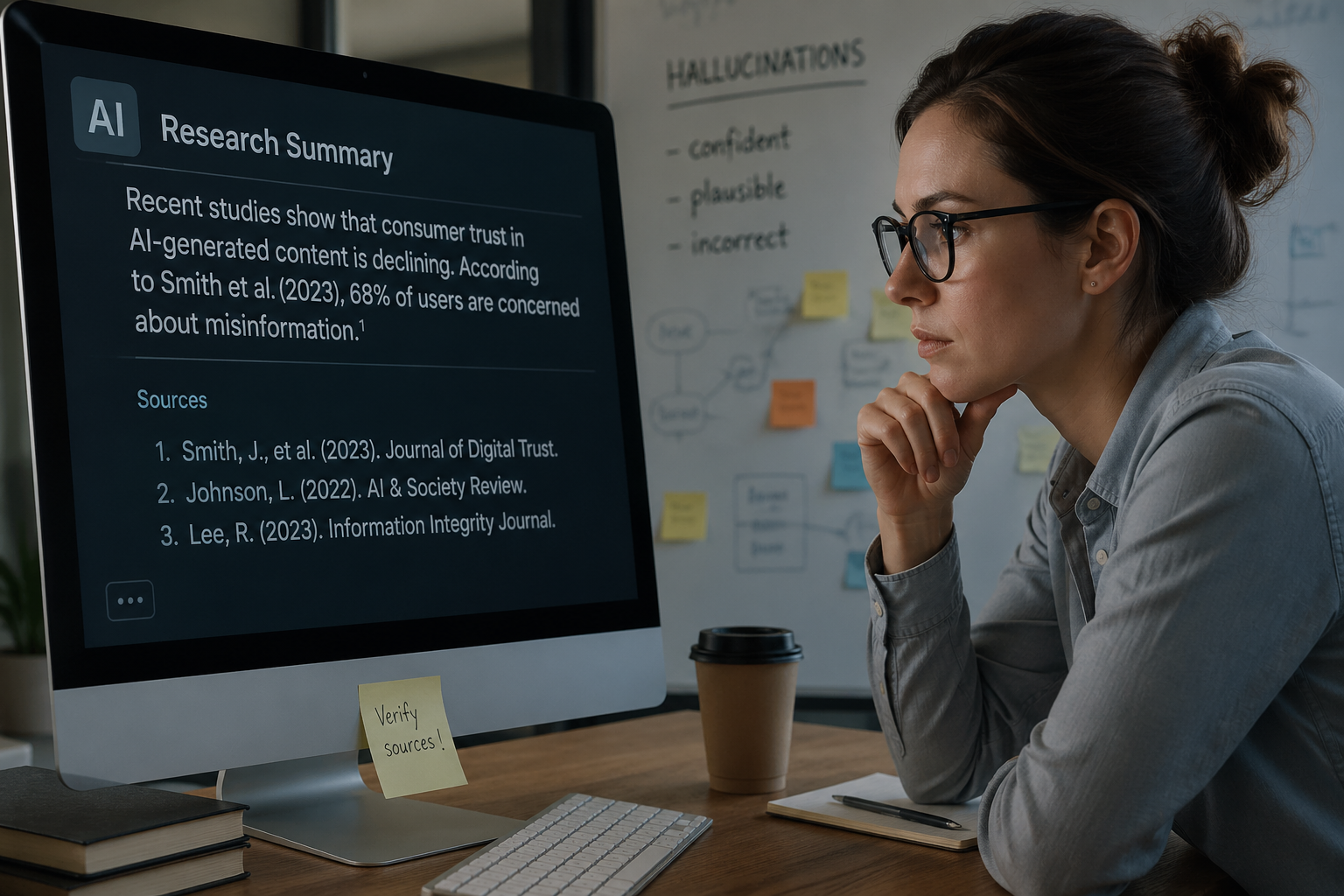

What Whisper Down the Lane Taught Us About AI Hallucinations and Made-Up Citations

Nobody lied in Whisper Down the Lane. The distortion happened through a series of small, confident approximations, with each player faithfully passing along their best reconstruction of what they heard. That is exactly how AI hallucinations work, and it is exactly why AI models confidently cite sources that don't exist. This post explains the mechanism behind AI hallucinations, the real-world consequences of fabricated citations, and what brands can do to make their content hallucination-resistant in an AI-mediated search landscape.