What Whisper Down the Lane Taught Us About AI Hallucinations and Made-Up Citations

You Already Understand AI Hallucinations. You Just Don't Know It Yet.

You played the game as a kid. One person whispers a message into the ear of the person next to them. That person whispers what they heard to the next person. By the time the message reaches the end of the line, "the purple elephant dances at midnight" has become "the turtle sells bananas at the mall."

Nobody lied. Nobody made anything up intentionally. The distortion happened through a series of small, well-intentioned approximations — each person doing their best to pass along what they heard, each tiny error compounding into something that bears only a passing resemblance to the original.

That game, known as Telephone in most of the United States, Whisper Down the Lane in some parts of the country, and Chinese Whispers in the UK, turns out to be one of the most accurate intuitive models available for understanding how AI hallucinations happen, why AI models confidently cite sources that don't exist, and why the problem is not as simple as "the AI is lying" or "the AI is broken."

Understanding the actual mechanism behind AI hallucinations matters for reasons that go well beyond academic curiosity. It matters because hallucinated citations are actively shaping how consumers make financial, medical, legal, and purchasing decisions. It matters because brands whose real content gets misrepresented by AI are losing trust through no fault of their own. And it matters because the solution, building content that gives AI models accurate, citable information to work with, is something every content team can act on right now.

This post explains the mechanism, the consequences, and the strategy.

Part One: How the Game Works — and Why the Errors Are Inevitable

Let's stay with the Whisper Down the Lane analogy for a moment, because the mechanics of the game map onto the mechanics of AI language models more precisely than most people realize.

In the game, each player is not a passive relay. They are an active interpreter. When they hear a whispered phrase, they process it through everything they already know — their vocabulary, their expectations, their prior experience with similar phrases — and they reconstruct what they believe they heard. They are not recording and playing back. They are comprehending and regenerating.

That reconstruction process is where the errors enter. If a player mishears "the cat sat on the mat" as "the bat sat on the hat," they will pass along the bat and the hat with complete confidence, because from their perspective, that is what was said. They are not guessing. They are not fabricating. They are faithfully passing along their best reconstruction of what they received.
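To put a rough number on how small reconstruction errors compound, here is a minimal sketch. It assumes, purely for illustration, that every player independently preserves each word with the same probability; the function names and the 95% figure are hypothetical and do not come from any study.

```python
def survival_probability(per_relay_fidelity: float, relays: int) -> float:
    """Probability that a single word reaches the end of the line unchanged.

    Assumes (for illustration) that every relay independently preserves the
    word with the same probability.
    """
    if not 0.0 <= per_relay_fidelity <= 1.0:
        raise ValueError("per_relay_fidelity must be between 0 and 1")
    if relays < 0:
        raise ValueError("relays must be non-negative")
    return per_relay_fidelity ** relays


def message_survival(per_relay_fidelity: float, relays: int, words: int) -> float:
    """Probability that every word of a message survives all relays intact."""
    return survival_probability(per_relay_fidelity, relays) ** words


if __name__ == "__main__":
    # Hypothetical 95% per-player accuracy on each word.
    for relays in (1, 5, 10, 20):
        word = survival_probability(0.95, relays)
        message = message_survival(0.95, relays, words=6)
        print(f"{relays:>2} relays: word {word:.2f}, six-word message {message:.2f}")
```

Under these assumptions, a player who gets each word right 95% of the time still leaves a single word with only about a 60% chance of surviving ten relays, and a full six-word message fares far worse.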

Large language models work on a structurally similar principle. They are not databases that store and retrieve information like a filing cabinet. They are statistical systems that have learned patterns across an enormous volume of text — every book, article, forum post, academic paper, and webpage that was part of their training data. When you ask a language model a question, it doesn't look up the answer. It generates the most statistically probable continuation of your prompt, based on everything it learned during training.

That distinction — retrieval versus generation — is the root of every hallucination.

A database retrieves. A language model generates. And generation, unlike retrieval, is inherently probabilistic. The model produces the response that its training most strongly predicts should follow your question. Most of the time, that prediction is accurate. Sometimes it isn't. And when it isn't, the model doesn't know it isn't — because from the model's perspective, it produced the most reasonable response available to it. It passed along what it heard.
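The difference between retrieving and generating can be sketched in a few lines. This is a toy, not how any real model is built: `FACTS` and `NEXT_WORD_PROBS` are hypothetical stand-ins, and real language models work over tokens with vastly larger vocabularies and learned probabilities.

```python
import random

# Hypothetical lookup table standing in for a database (retrieval).
FACTS = {"capital of france": "Paris"}

# Hypothetical next-word probabilities standing in for a language model (generation).
NEXT_WORD_PROBS = {"Paris": 0.90, "Lyon": 0.07, "Marseille": 0.03}


def retrieve(question: str):
    """Look the answer up; returns None when nothing is stored."""
    return FACTS.get(question.lower())


def generate(probs: dict, rng: random.Random) -> str:
    """Sample one word in proportion to its probability; always returns something."""
    words = list(probs)
    weights = [probs[word] for word in words]
    return rng.choices(words, weights=weights, k=1)[0]


if __name__ == "__main__":
    print(retrieve("capital of france"))    # stored answer
    print(retrieve("capital of atlantis"))  # None: the lookup can say "not found"
    print(generate(NEXT_WORD_PROBS, random.Random()))  # some word, every time
```

The design point is the return type. The lookup can come back empty, while the sampler has no "not found" outcome: it produces a plausible word whether or not a correct one exists.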

Part Two: The Citation Problem — Where Whispers Become Citations

Hallucinations are unsettling in the abstract. Hallucinated citations are concretely dangerous — and they are where the Whisper Down the Lane analogy becomes most instructive.

Here is what happens when an AI model produces a fake citation.

During training, the model was exposed to an enormous volume of academic writing, journalism, and research content. That content had a characteristic structure: claims were supported by citations. Assertions were followed by sources. Statements of fact were accompanied by references. The model learned this pattern deeply — not just as a formatting convention, but as a semantic expectation. Claims come with citations. Authority comes with attribution.

When the model is asked a question that requires citing a source, it generates a response that matches this learned pattern. It produces a claim, and then it produces a citation that is statistically coherent with the claim — meaning it generates an author name, a publication title, a journal name, and a year that feel like they belong together, based on the patterns it absorbed during training.

If a real source exists that matches those elements, the citation is accurate. If it doesn't, the model has produced a plausible-sounding citation that refers to nothing — because generating a plausible citation is what the model has learned to do, and it has no internal mechanism to verify whether the specific source it generated actually exists.

This is the whisper becoming unrecognizable by the end of the line. The model heard thousands of examples of how citations work. It faithfully passed along what it learned. But in the regeneration, something that was originally a real source became a statistically plausible but entirely fabricated one.
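A rough sketch of why pattern-matching alone yields citations that refer to nothing: if the pieces of real citations are recombined freely, most combinations look right and match no source. The entries in `REAL_CITATIONS` are invented placeholders, and the recombination step is a deliberate simplification of what a model does, not a description of its internals.

```python
import itertools

# Hypothetical reference index; these entries are placeholders, not real papers.
REAL_CITATIONS = {
    ("Rivera", "Journal of Example Studies", 2019),
    ("Chen", "Annals of Placeholder Research", 2021),
}


def plausible_citations(real: set) -> list:
    """Recombine authors, journals, and years that appeared in real citations."""
    authors = sorted({author for author, _, _ in real})
    journals = sorted({journal for _, journal, _ in real})
    years = sorted({year for _, _, year in real})
    return list(itertools.product(authors, journals, years))


def exists(citation: tuple, index: set) -> bool:
    """The verification step that pattern-based generation skips."""
    return citation in index


if __name__ == "__main__":
    candidates = plausible_citations(REAL_CITATIONS)
    verified = [c for c in candidates if exists(c, REAL_CITATIONS)]
    print(f"{len(candidates)} plausible citations, {len(verified)} real")
```

With two real sources, the recombination produces eight well-formed candidates, of which only two exist. The `exists` check is the part that has to come from outside the generation process.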

A 2023 study by researchers at Cornell University found that large language models produced fabricated citations in response to research-style queries at rates ranging from 14% to 46% depending on the specificity of the topic and the model being tested — with more obscure or niche topics producing higher hallucination rates than well-documented mainstream subjects. ¹ The pattern is consistent with what Whisper Down the Lane would predict: the more removed a topic is from the dense, well-corroborated center of the model's training data, the more the signal degrades in transmission.

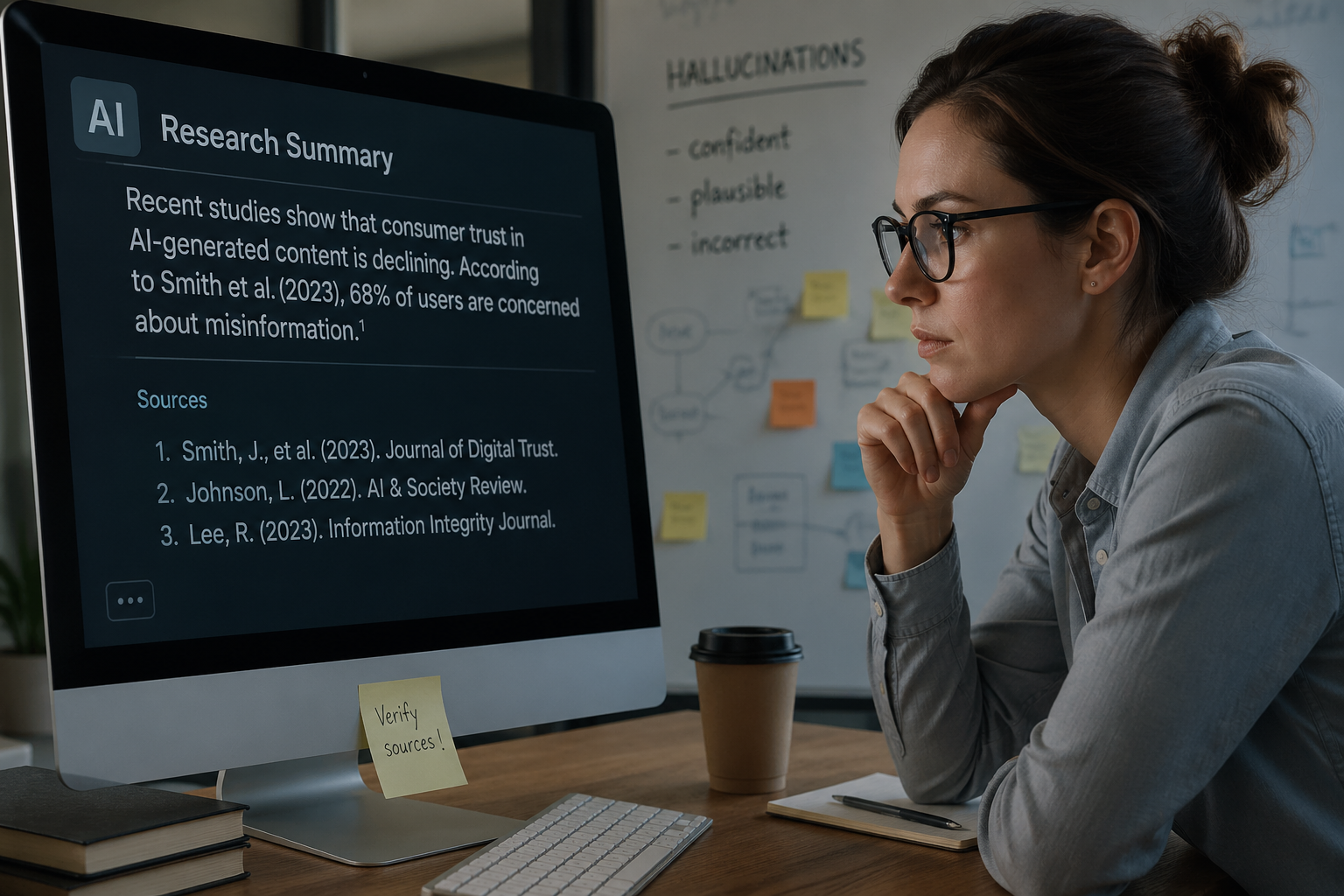

Part Three: Why AI Models Are Confidently Wrong

One of the most disorienting things about AI hallucinations — and one of the most important things to understand about them — is that the model does not know it is wrong. It is not hedging. It is not guessing. It produces the hallucinated citation with the same confidence it would produce a perfectly accurate one, because from the model's internal perspective, both feel equally well-supported by its training.

In Whisper Down the Lane, the player who mishears "purple elephant" as "purple pelican" and passes it along is not being deceptive. They genuinely believe they heard "purple pelican." The distortion is real, but the confidence is also real — and that combination of distortion plus confidence is exactly what makes the game funny and AI hallucinations concerning.

This is what AI researchers call the calibration problem. A well-calibrated model would be less confident in its outputs when it is more likely to be wrong. Current large language models are often poorly calibrated in this way: they present weakly supported outputs in the same assured tone as well-supported ones, particularly in domains where their training data was sparse or inconsistent.

Research from MIT's Computer Science and Artificial Intelligence Laboratory found that language models consistently overestimated their own accuracy on factual recall tasks — performing at roughly 70% accuracy on questions where their expressed confidence suggested they should be correct 90% of the time or more. ² The gap between expressed confidence and actual accuracy is widest precisely in the areas where hallucinations are most likely — niche topics, recent events, and specific citations.
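The gap described above is simple to compute once you have confidence scores and a record of which answers were right. The sketch below is a minimal illustration using the 90% and 70% figures from the paragraph above as sample inputs; `calibration_gap` is a hypothetical helper, not a function from any evaluation library.

```python
def calibration_gap(predictions: list) -> float:
    """Mean stated confidence minus observed accuracy.

    `predictions` is a list of (confidence, was_correct) pairs.
    A positive result means the model is overconfident.
    """
    if not predictions:
        raise ValueError("predictions must not be empty")
    mean_confidence = sum(conf for conf, _ in predictions) / len(predictions)
    accuracy = sum(1 for _, correct in predictions if correct) / len(predictions)
    return mean_confidence - accuracy


if __name__ == "__main__":
    # Ten answers, each stated at 90% confidence, of which seven were correct.
    sample = [(0.9, True)] * 7 + [(0.9, False)] * 3
    print(f"overconfidence gap: {calibration_gap(sample):.2f}")
```

A well-calibrated system would score close to zero here. Ten answers at 90% confidence with seven correct gives a gap of about 0.20.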

Part Four: The Compounding Error — When AI Trains on AI

Here is where the Whisper Down the Lane analogy gets darker.

In the original game, the whisper starts with a clear, accurate message from a human source. The distortion happens in transmission. But the starting message is real.

As AI-generated content has proliferated across the web, a new version of the game has emerged — one where the whisper has already been distorted before the game even starts. AI models are increasingly training on content that was itself generated by AI models. Each generation of training potentially incorporates and amplifies the errors of the previous generation.

Researchers at the University of Oxford published a study in 2024 examining what they termed "model collapse" — the phenomenon by which successive generations of AI models trained on AI-generated data produce increasingly degraded outputs, with errors compounding across training iterations in a pattern that mirrors the telephone game across multiple rounds. ³ The study found that models trained on AI-generated text showed measurable degradation in factual accuracy and citation reliability within three to five training generations — even when the original training data was accurate.
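The general idea of training on your own output can be illustrated with a toy simulation. This is not the method of the study cited above: it simply assumes each "generation" fits a bell curve to a small sample drawn from the previous generation's fit. All names and parameters are hypothetical.

```python
import random
import statistics


def simulate_generations(generations: int, sample_size: int, seed: int = 0) -> list:
    """Repeatedly refit a Gaussian to samples drawn from the previous fit.

    Returns the (mean, standard deviation) estimate for each generation,
    starting with the original "human" distribution.
    """
    rng = random.Random(seed)
    mu, sigma = 0.0, 1.0  # generation 0: the original data distribution
    history = [(mu, sigma)]
    for _ in range(generations):
        # Each new generation only sees data produced by the previous one.
        samples = [rng.gauss(mu, sigma) for _ in range(sample_size)]
        mu = statistics.fmean(samples)
        sigma = statistics.pstdev(samples)
        history.append((mu, sigma))
    return history


if __name__ == "__main__":
    for generation, (mu, sigma) in enumerate(simulate_generations(30, 20)):
        print(f"generation {generation:>2}: mean {mu:+.3f}, spread {sigma:.3f}")
```

In a setup like this, the estimated spread tends to drift and narrow over many generations, because each round can only learn from what the previous round happened to produce. Exact numbers depend on the seed and sample size.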

This has a specific implication for brands and content creators: original, human-authored, primary-source content is not just valuable for traditional SEO. It is increasingly valuable as a counterweight to the model collapse problem. When AI models encounter original data — a real survey, a verified benchmark, a credentialed expert's original analysis — they have something accurate to learn from and retrieve. When they encounter AI-generated summaries of AI-generated summaries, the whisper has already been through the line twice before anyone starts playing the game.

Part Five: The Real-World Consequences of Hallucinated Citations

Understanding the mechanism is important. Understanding the consequences is urgent.

Hallucinated citations are not an abstract technical problem. They are actively influencing decisions in high-stakes domains.

In the legal profession, the consequences have been dramatic and well-documented. In 2023, two New York attorneys submitted a legal brief to a federal court that contained multiple citations to cases that did not exist — generated by ChatGPT and submitted without verification. The judge fined the attorneys, describing the fabricated citations as a "threat to the administration of justice." ⁴ The attorneys had not tried to deceive the court. They had trusted an AI-generated output without verifying it — and the AI had passed along its best reconstruction of what legal citations in similar contexts looked like.

In medicine, a 2024 study published in JAMA Internal Medicine found that large language models produced responses to clinical questions that contained factual errors or hallucinated references in approximately 28% of cases — with the errors concentrated in questions about specific drug dosages, treatment protocols, and study citations. ⁵ A clinician relying on an AI-generated clinical summary without verification would encounter an incorrect or fabricated reference more than one in four times.

In personal finance — a category directly relevant to the brands and marketers reading this post — hallucinated citations carry their own category of harm. An AI model that confidently cites a non-existent study claiming that a particular investment strategy outperforms the market, or misattributes regulatory guidance to an agency that issued no such guidance, is providing financial misinformation with the presentation of authoritative sourcing. Forrester's 2026 research found that 20% of B2B buyers reported being less confident in decisions because they encountered unreliable or inaccurate AI-generated information — a figure that is almost certainly an undercount given how rarely users verify AI citations. ⁶

Part Six: Why Some Content Gets Accurately Cited and Some Gets Distorted

This is where the Whisper Down the Lane analogy offers its most strategically useful insight.

In the game, some messages survive transmission better than others. A message with a distinctive, unusual, and internally consistent structure — something that is hard to misinterpret because it is specific and coherent — degrades more slowly than a vague or ambiguous message. "The red fox ate seventeen grapes" survives several rounds better than "some animal did something near a fruit" because the specificity gives each player more signal to work with.

AI models behave similarly. Content that is specific, internally consistent, well-structured, and corroborated by multiple sources gives the model stronger signal to work with when generating a response — which means the signal degrades less in transmission. Content that is vague, aggregated from other sources without adding original information, or inconsistently represented across the web gives the model weaker signal — and weaker signal produces more distortion in output.

This is why original data matters so much for AI citation accuracy, not just AI citation frequency. A specific, unique data point — "our survey of 847 personal finance consumers found that 61% visited a website cited in an AI-generated answer" — gives the model something concrete and distinctive to represent. The specificity of the claim makes it harder to distort in transmission. A vague claim like "many consumers use AI for financial research" gives the model almost nothing distinctive to hold onto — and the model will fill in the gaps with statistical probability, not with your specific insight.

BrightEdge's analysis of AI Overview citation patterns found that content containing specific, verifiable data points was cited more consistently and more accurately than content making general claims — a finding consistent with the signal degradation model that Whisper Down the Lane illustrates. ⁷ The more specific and distinctive your content's core claims, the more faithfully an AI model can represent them.

Part Seven: What Brands and Content Creators Can Do About It

The mechanism behind AI hallucinations is not going to be fully solved in the near term. The calibration problem is one of the most active areas of AI research, and while retrieval-augmented generation systems have meaningfully reduced hallucination rates in some contexts, they have not eliminated them. For brands and content teams, the practical question is not how to prevent AI from ever hallucinating — it is how to make your content as hallucination-resistant as possible.

Here is what the research and the Whisper Down the Lane model suggest:

Be specific and distinctive. Vague content degrades in AI transmission. Specific, original, distinctive content holds its signal. Every piece of content your brand publishes should have at least one concrete, specific, verifiable claim that is uniquely yours — a data point, a finding, a benchmark figure derived from your own research. This is the message that survives the whisper chain.

Cite primary sources explicitly within your content. One of the counterintuitive findings in AI citation research is that content which itself cites primary sources is cited more accurately by AI models — because the model has a chain of specific, verifiable references to work with rather than a single unanchored claim. If your content says "according to the Federal Reserve's 2025 Consumer Finance Survey," an AI model citing your content has a specific, verifiable source to anchor its representation of your claim. If your content says "research shows," the model has nothing to anchor on and is more likely to fill the gap with something generated rather than retrieved.

Build consistent cross-platform representation. The Whisper Down the Lane problem is partly a data sparsity problem — models hallucinate most in areas where their training signal was weak. Brands that appear consistently across multiple credible platforms — their own site, LinkedIn, industry publications, Reddit communities, Google Business Profile — give the model more corroborating signal to work with, which reduces the probability that any single representation will be distorted in transmission.

Monitor how AI models represent your brand. Run your brand name and your key content claims through ChatGPT, Perplexity, and Google AI Overviews regularly. Ask the models to summarize your company, your services, and your research. Compare what they produce against what you actually publish. Where the representation is accurate, your signal is holding. Where it is distorted or fabricated, you have identified a signal weakness — a place where the whisper is degrading — and you can address it through more consistent and specific content publication.

Correct misinformation proactively. If an AI model is consistently misrepresenting your brand or fabricating citations attributed to you, publishing clear, specific, authoritative corrections — on your own site and distributed across platforms — gives the model accurate signal to replace the degraded one. This is a slow process, but it works on the same principle as the original game: if you want the final message to be accurate, you need to make sure the starting message is loud, clear, and distinctive enough to survive the line.
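The monitoring step above can be partly automated. The sketch below assumes you paste in a model's answer by hand and keep your own list of facts to check; it makes no API calls, and `check_claims`, the company name, and the sample facts are all hypothetical.

```python
import re


def check_claims(model_output: str, expected_facts: dict) -> dict:
    """Report, for each labeled fact, whether its expected value appears in the text.

    Matching is a case-insensitive substring check after collapsing whitespace,
    so it flags candidates for review and does not prove accuracy.
    """
    normalized = re.sub(r"\s+", " ", model_output).lower()
    return {
        label: value.lower() in normalized
        for label, value in expected_facts.items()
    }


if __name__ == "__main__":
    # Hypothetical AI-generated summary and the facts the brand actually publishes.
    answer = "Example Co was founded in 2012 and is based in Austin."
    facts = {"founding year": "2015", "headquarters": "Austin"}
    for label, found in check_claims(answer, facts).items():
        print(f"{label}: {'matches' if found else 'missing or different'}")
```

A substring check is deliberately crude. It will miss paraphrases and cannot detect fabricated claims that fall outside your fact list, so treat it as a first pass before a human reads the full answer.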

Part Eight: The Broader Lesson

Whisper Down the Lane is funny because the distortion is harmless. Nobody's financial decision or medical treatment depends on whether the elephant is purple or the pelican is dancing.

AI hallucinations are not harmless in the same way. They sit at the intersection of a system that generates with enormous confidence, a user base that trusts that confidence, and a distribution mechanism — AI-mediated search — that is rapidly becoming the primary way people discover information.

The game's real lesson is not that communication is inherently broken. It is that the quality of what comes out of the line depends heavily on the quality and clarity of what goes into it. Original signal, clearly articulated and consistently reinforced, survives transmission better than vague or derivative signal.

For brands, this means that the AI era is not primarily a technology problem. It is a content quality problem with a technology surface. The brands whose information AI models represent accurately are the brands that gave those models clear, specific, original, well-corroborated information to work with. The brands whose information gets distorted or fabricated are the brands that gave the models weak, vague, or inconsistent signal — and then trusted the whisper chain to carry their message faithfully anyway.

The game does not owe you an accurate transmission. You have to earn it by being specific enough to survive the line.

Is AI representing your brand accurately — or is the whisper getting distorted by the time it reaches your buyers?

Find out with a free AI Visibility Audit → ritnerdigital.com/#contact

Frequently Asked Questions

What exactly is an AI hallucination?

An AI hallucination is when a large language model generates a response that is factually incorrect, misleading, or fabricated, and presents it with the same confidence as accurate information. The term "hallucination" refers specifically to the model producing content that has no basis in its training data or in reality, as opposed to faithfully repeating an error that was already present in its training data.

Why do AI models make up citations specifically?

AI models learned during training that claims are accompanied by citations — this is a deeply embedded pattern in the academic and journalistic text they were trained on. When asked to provide sourced information, the model generates a response that matches this pattern: a claim followed by a citation. If no real citation exists in its training data that fits the claim, the model generates a statistically plausible one — an author name, publication, and year that feel coherent together — without any internal mechanism to verify whether that source actually exists.

Is the AI lying when it produces a hallucinated citation?

No — and this distinction matters. The model is not being deceptive in any intentional sense. It is generating the most statistically probable response given its training — which is exactly what it was designed to do. The problem is that statistical probability and factual accuracy are not the same thing, and the model has no reliable way to distinguish between them. This is why calling hallucinations "lies" misdiagnoses the problem and leads to the wrong solutions.

Why are hallucinations worse for niche topics than mainstream ones?

The degradation in Whisper Down the Lane is worse when the message is passed through more players who didn't quite hear it. For AI models, niche topics are the equivalent of a poorly heard message — the model has less training data about them, which means weaker signal, which means higher probability that the statistical generation process fills gaps with plausible-but-inaccurate content. Well-documented mainstream topics have dense, corroborating training signal that makes accurate representation more probable.

What is model collapse and why does it matter?

Model collapse refers to the phenomenon where successive generations of AI models trained on AI-generated data produce progressively degraded outputs — errors compound across training iterations as each generation learns from the distorted outputs of the previous one. It is the telephone game played across multiple rounds, with each round starting from the already-distorted message of the last. It matters for content creators because it elevates the value of original, human-authored, primary-source content as a corrective signal in an environment increasingly saturated with AI-generated text.

How can I tell if an AI model is accurately representing my brand?

Query ChatGPT, Perplexity, and Google AI Overviews directly. Ask them to describe your company, summarize your services, and cite your research. Compare the outputs against your actual published content. Specific discrepancies — wrong founding year, misattributed claims, fabricated statistics — indicate signal degradation that can be addressed through more consistent and specific content publication across credible platforms.

Does retrieval-augmented generation solve the hallucination problem?

It significantly reduces it in many contexts. Retrieval-augmented generation systems like Perplexity AI fetch real web content in real time rather than relying purely on trained weights — which gives the model accurate, verifiable source material to work from. However, RAG systems can still hallucinate in their synthesis of retrieved content, can cite sources inaccurately, and are only as good as the content available in their retrieval index. They represent meaningful progress, not a complete solution.

What is the single most effective thing a brand can do to reduce AI misrepresentation?

Publish one piece of original, specific, data-rich content that contains a distinctive claim no one else has — a survey finding, a benchmark figure, a proprietary data point — and distribute it consistently across your website, LinkedIn, and industry publications. Specific, original, corroborated information gives AI models the clearest possible signal to work with. It is the message that survives the whisper chain.

References

Alkaissi, H. & McFarlane, S.I. (2023). "Artificial Hallucinations in ChatGPT: Implications in Scientific Writing." Cureus Journal of Medical Science. https://www.cureus.com/articles/138149-artificial-hallucinations-in-chatgpt-implications-in-scientific-writing

Kadavath, S., et al. (2022). "Language Models (Mostly) Know What They Know." arXiv preprint. https://arxiv.org/abs/2207.05221

Shumailov, I., et al. (2024). "AI Models Collapse When Trained on Recursively Generated Data." Nature. https://www.nature.com/articles/s41586-024-07566-y

United States District Court, Southern District of New York. (2023). Mata v. Avianca, Inc. (Sanctions Order). https://www.courtlistener.com/docket/17036948/mata-v-avianca-inc/

Omiye, J.A., et al. (2024). "Large Language Models Propagate Race-Based Medicine." JAMA Internal Medicine. https://jamanetwork.com/journals/jamainternalmedicine

Forrester Research. (2026). The State of Business Buying, 2026. Forrester. https://www.forrester.com/press-newsroom/forrester-2026-the-state-of-business-buying/

BrightEdge. (2026). Finance and AI Overviews: How Google Applies YMYL Principles to Financial Search. BrightEdge Weekly AI Search Insights. https://www.brightedge.com/resources/weekly-ai-search-insights/google-ymyl-finance-ai-overviews

Bender, E.M., et al. (2021). "On the Dangers of Stochastic Parrots: Can Language Models Be Too Big?" Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency. https://dl.acm.org/doi/10.1145/3442188.3445922

Search Engine Land. (2026). "Why Your Content Doesn't Appear in AI Overviews." Search Engine Land. https://searchengineland.com/why-content-doesnt-appear-in-ai-overviews-473325

OpenAI. (2024). "GPT-4 Technical Report." OpenAI. https://openai.com/research/gpt-4

Ritner Digital is a B2B digital marketing agency specializing in AI-era content strategy, entity SEO, and search visibility. We help brands build content that AI models cite accurately — not just frequently.