Why Google Search Console Shows Zero Clicks on a Query But the Pages Tab Shows Clicks

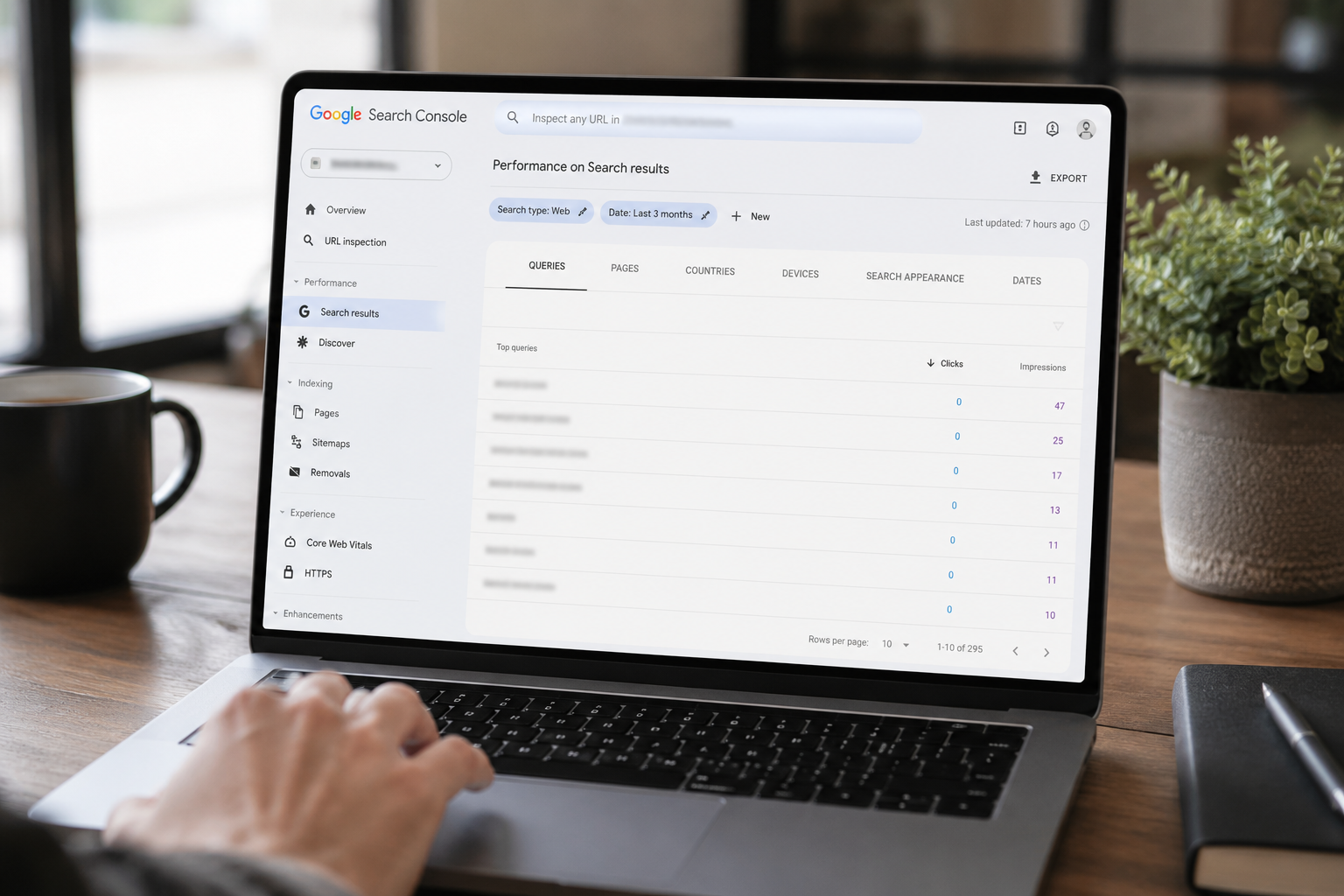

You open Google Search Console. You click into the Queries tab under Performance. You see a list of search terms — some with healthy impression counts — and next to a good number of them: zero clicks. Then you switch over to the Pages tab. The same date range. The same property. And right there on a URL you know is getting traffic, it shows clicks. Real clicks. Sometimes dozens of them.

So which number is right? Is the Queries tab broken? Is Google hiding something? Are you misreading the interface?

None of the above. What you're seeing is one of the most consistently misunderstood features of Google Search Console, and it has a specific technical explanation. Once you understand it, it changes how you read every piece of GSC data you'll ever look at again.

This post explains exactly what's happening, why Google built it this way, how much data you're actually missing, and what to do about it.

The Short Answer Before the Long One

The clicks you see on the Pages tab are the real total. The Queries tab is showing you a filtered, incomplete subset of what drove those clicks — because Google deliberately withholds the search queries that didn't meet a specific privacy threshold. The page still got clicked. Google just won't tell you what someone typed to find it.

This is not a bug. It is a documented feature of how Search Console handles user privacy. It has been this way for years and it is getting more pronounced as search behavior evolves.

What Google Says About It

Google's official explanation is brief and carefully worded. Anonymized queries are those that aren't issued by more than a few dozen users over a two-to-three month period. To protect privacy, the actual queries won't be shown in the Search performance data. webapex blog

Read that carefully. The threshold isn't about how many clicks a query sent to your site. It's about how many unique users across the entire web searched for that term during the window. A query could drive fifty clicks to your page. But if only twenty people globally typed that phrase into Google over a three-month period, Google considers it too identifying — too traceable back to a specific individual — and removes it from your query report entirely.

The traffic still happened. The clicks still count toward your page totals. The impressions still count toward your site totals. Google just strips out the query label and you're left with a number that has no searchable phrase attached to it.

Google updated its help documentation on this from saying "Very rare queries are not shown" to saying "Some queries are not shown" after research revealed just how widespread the issue was. That language change was quiet but significant — an acknowledgment that what Google was originally calling rare was actually affecting nearly half of all site traffic. JPK Design Co

How Big Is the Problem, Actually

This is where it stops feeling like a minor inconvenience and starts feeling like a genuine blind spot.

The most comprehensive study of this issue to date was published by Ahrefs, using April 2025 data from 22 billion clicks across 887,534 GSC properties. The anonymization rate came in at 46.77%. A similar Ahrefs study in 2022, covering 9 billion clicks across 146,741 properties, found a rate of 46.08%. A follow-up data pull for April 2024 showed 45.02%. Lumar

Nearly half. Consistently, across three separate studies spanning three years, across hundreds of thousands of sites and billions of clicks, roughly half of all the clicks recorded in Search Console have no associated search query attached to them. You can see in the Pages tab that a page got clicked. You cannot see in the Queries tab what anyone typed to find it.
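To estimate the same rate for your own property, you can compare total clicks with the clicks attached to visible queries. The sketch below is a minimal illustration, not how Ahrefs computed its figures; the function name and the sample numbers are hypothetical.

```python
def anonymization_rate(total_clicks: int, visible_query_clicks: int) -> float:
    """Share of clicks with no visible query attached (0.0 to 1.0)."""
    if total_clicks <= 0:
        raise ValueError("total_clicks must be positive")
    if not 0 <= visible_query_clicks <= total_clicks:
        raise ValueError("visible_query_clicks must be between 0 and total_clicks")
    return 1 - visible_query_clicks / total_clicks


# Hypothetical property: 1,000 total clicks, 532 of them attached to visible queries.
rate = anonymization_rate(total_clicks=1000, visible_query_clicks=532)
print(f"{rate:.1%} of clicks have no visible query")
```

If you sum query clicks from the interface, keep in mind that the table may be truncated, so the result can overstate the anonymized share on sites with many queries.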

When Ahrefs plotted the distribution of anonymization rates across individual sites, the most common range — the mode — fell between 45% and 80%. That means many sites are experiencing significantly worse data loss than the average suggests. Lumar

And the problem is not evenly distributed. Small sites showed query visibility ranging from 0% to 37%, with an average of just 18.3%. Medium sites showed a wider range, from 21% to 61%, averaging 39.7%. Squarespace Forum

If your site is smaller, newer, or more focused on a niche topic — which describes the majority of business websites — you are likely seeing far less of your query data than the average 53% that the headline figure suggests. You may be seeing as little as one in five of the queries that actually drove your traffic.

Why Small and Niche Sites Are Hit Harder

The anonymization threshold is based on global search volume for that term — not on how much traffic it sent to you. This distinction matters enormously.

A large e-commerce site ranking for "running shoes" is safe. Millions of people search that phrase globally every month. It will never be anonymized. But a niche professional services site ranking for "transaction coordination services for real estate agents in New Jersey" is a different story. The phrase may have sent that site twelve clicks in a month — but if fewer than a few dozen people globally typed that exact phrase over a two-to-three month window, Google strips it.

Smaller sites tend to publish more niche or specific content, which attracts traffic from highly specific, rarely-searched phrases. These phrases are less likely to reach the "few dozen users" threshold required for attribution. A large e-commerce site ranking for "running shoes" sees millions of searches for that phrase. A small local retailer ranking for "wide-fit running shoes for plantar fasciitis in Austin" sees far fewer — and most of those queries will be anonymized. Lumar

This is also why long-tail content strategies — which are exactly what Google's own guidance recommends for smaller sites trying to build organic authority — produce the most invisible data. The more specific your content, the more specific the queries that find it, and the more likely those queries are to fall below the anonymization threshold and disappear from your reports.

Google has confirmed that 15% of all searches have never been seen before — they are entirely new queries that no one has ever previously typed into Google. Those novel, unique, one-time queries are almost certainly going to be anonymized. And they represent a meaningful share of the conversational, highly specific search behavior that AI-influenced search is amplifying. webapex blog

Why the Pages Tab Doesn't Have This Problem

The Pages tab and the Queries tab are looking at the same underlying data from different angles — and that difference in angle is why one shows you complete information and the other doesn't.

The Pages tab shows you the number of clicks each page has received. Google doesn't exclude any clicks when looking at the page tab, as it is able to collect total clicks here. Search Engine Journal

When Google aggregates data by page, it's counting every click that sent a visitor to that URL regardless of what query triggered it. There's no privacy risk in telling you that your /services/seo page received 47 clicks this month. That number doesn't reveal anything about any individual user's search behavior.

But when Google aggregates data by query, it's exposing what specific individuals typed into a search box. If a query was searched by only twelve people globally and one of them clicked your page, telling you that query drove one click is essentially telling you something about those twelve people. The specificity of the phrase — especially with long-tail, location-specific, or personally relevant queries — makes that data potentially identifying. So Google removes it.

Aggregating by page puts the data into bigger buckets, so user privacy is less of a concern. However, aggregating by query leads to some quite granular data, which Google withholds. Torro Media

The result is a structural mismatch between the two tabs that is permanent and working as intended. The Pages tab will always show more clicks than the sum of clicks across all visible queries. That gap is your anonymized traffic — real visitors who found your site through queries Google won't disclose.
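A toy model can make the mismatch concrete. Everything in this sketch is invented for illustration: the click log, the global searcher counts, and especially the cutoff of 36 users, since Google only says "a few dozen" and does not publish an exact number. It shows how the same clicks produce a complete total when grouped by page and a smaller total when grouped by query.

```python
from collections import Counter

# Hypothetical cutoff: Google only says "a few dozen users", so 36 is an assumption.
ASSUMED_USER_THRESHOLD = 36

# Toy click log for one site: one (page, query) pair per click. All values are invented.
clicks = [
    ("/services/seo", "seo services"),
    ("/services/seo", "seo services"),
    ("/services/seo", "seo retainer for dental practices in trenton"),
    ("/blog/gsc", "search console zero clicks"),
    ("/blog/gsc", "why does the queries tab show zero clicks but the pages tab shows clicks"),
]

# Invented counts of unique users who searched each query across all of Google.
global_searchers = {
    "seo services": 500_000,
    "seo retainer for dental practices in trenton": 9,
    "search console zero clicks": 1_200,
    "why does the queries tab show zero clicks but the pages tab shows clicks": 3,
}

# Page view: every click is counted, whatever the query was.
clicks_by_page = Counter(page for page, _ in clicks)

# Query view: clicks from queries under the cutoff lose their label and drop out.
clicks_by_query = Counter(
    query for _, query in clicks
    if global_searchers[query] > ASSUMED_USER_THRESHOLD
)

page_total = sum(clicks_by_page.values())
visible_total = sum(clicks_by_query.values())
print("Pages view total:", page_total)
print("Queries view total:", visible_total)
print("Anonymized clicks:", page_total - visible_total)
```

Note that the filter depends on how many people searched the phrase globally, not on how many clicks it sent to the site, which is why a page can keep its full click count while its queries disappear.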

The 1,000-Row Limit Is a Separate, Smaller Problem

People often conflate two different GSC data limitations, and it's worth being clear about which one is which.

The 1,000-row limit refers to the maximum number of rows the Search Console interface will display in any given table. If your site generates more than 1,000 unique queries, the interface will only show you the top 1,000. This can cause a discrepancy between what you see on screen and what actually exists in your data.

The anonymized query problem is different and much larger. It's not about display limits — it's about data that Google actively removes before it even reaches the interface. One analyst described seeing 79 queries in the Queries tab responsible for 708 impressions out of 1,434 total — missing detail on 51% of impressions and 100% of clicks. The site only had 79 rows, well under the 1,000-row maximum, so the limit was not the explanation. Torro Media
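The arithmetic behind that example is easy to check with the impression counts quoted above. Only the variable names are added here.

```python
total_impressions = 1434
visible_impressions = 708  # impressions attached to the 79 visible queries

missing_share = (total_impressions - visible_impressions) / total_impressions
# This rounds to the 51% quoted in the example.
print(f"Impressions with no visible query: {missing_share:.1%}")
```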

The 1,000-row limit is a smaller and more fixable problem: the Search Console API returns up to 25,000 rows per request and can page through further results, and BigQuery exports give you access to even more. The anonymized query problem follows you into the API and into BigQuery, though BigQuery does give you slightly better visibility. For one site analyzed through BigQuery, the API returned around 40,000 unique queries while BigQuery showed 350,000, a huge difference that reveals just how much additional data exists that the standard interface never surfaces. Squarewebsites
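Getting past the row limit through the API usually means paging with the `rowLimit` and `startRow` request fields. The sketch below assumes you already have a callable, here named `run_query` (a hypothetical name), that sends one Search Analytics request body and returns the parsed response. The helper itself does no authentication and makes no network calls.

```python
from typing import Callable, Dict, List

MAX_ROWS_PER_REQUEST = 25_000  # per-request cap on rowLimit


def fetch_all_rows(
    run_query: Callable[[Dict], Dict],
    start_date: str,
    end_date: str,
    dimensions: List[str],
) -> List[Dict]:
    """Page through results with startRow until a short (or empty) page arrives."""
    rows: List[Dict] = []
    start_row = 0
    while True:
        body = {
            "startDate": start_date,
            "endDate": end_date,
            "dimensions": dimensions,
            "rowLimit": MAX_ROWS_PER_REQUEST,
            "startRow": start_row,
        }
        # A response with no matching data may omit the "rows" key entirely.
        page = run_query(body).get("rows", [])
        rows.extend(page)
        if len(page) < MAX_ROWS_PER_REQUEST:
            return rows
        start_row += len(page)
```

With the Google API Python client, `run_query` could be something like `lambda body: service.searchanalytics().query(siteUrl=site_url, body=body).execute()`, where `service` and `site_url` are set up elsewhere. Paging returns more rows, but anonymized queries are still absent from every page.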

What About Sensitive and Personal Queries

Google cites two reasons for anonymization: privacy of users and low volume of searches. The privacy reason gets most of the attention in documentation but is almost certainly responsible for a much smaller portion of the missing data than the volume threshold.

Google strips queries that might contain personally identifiable information — names, addresses, phone numbers, medical conditions, or financial details. If someone searches a very specific phrase containing personal information, that query may be anonymized because it contains identifiable data. This is a reasonable policy. But it doesn't explain 46% of all clicks being hidden. Most commercial and informational queries don't contain personal data. The volume threshold is where the bulk of the hidden data comes from. Backlinko

The research backs this up. Ahrefs noted that the reasoning around personal and sensitive queries doesn't account for the scale of what's being hidden. The conclusion is that the anonymization is primarily driven by the volume threshold — queries simply not being searched enough globally to clear the bar Google has set. webapex blog

This Is Getting Worse, Not Better

The anonymized query problem has been relatively stable at around 45-47% for three years. But there are structural reasons to expect it will worsen as search behavior evolves.

Ahrefs noted their April 2025 data predates the full rollout of AI Overviews, and researchers expect the anonymization rate to rise because AI Overviews are triggered primarily by longer, more conversational queries — exactly the type that falls below anonymization thresholds. As users write longer queries to get AI-generated answers, more of that traffic will be attributed to no specific phrase in GSC. Lumar

The behavioral shift is real and measurable. AI tools have trained people to ask longer, more specific, more conversational questions — and that behavior is now spilling into Google search. The more specific a query is, the less likely it is to be searched by enough people to clear Google's threshold, and the more likely it is to vanish from your query report even as the clicks it drove show up accurately on the Pages tab.

This means the structural gap between what the Pages tab shows and what the Queries tab shows will likely widen over the next several years, not narrow.

What You Can Actually Do About It

Accepting the limitation is the first step. The second step is building a workflow that uses the data you do have as effectively as possible.

Use the Pages tab as your source of truth for traffic measurement. The click counts on the Pages tab are complete and reliable. When you want to know how a piece of content is performing, look at the page-level data, not the query-level data. The query data tells you directional information about search intent, not a complete accounting of traffic.

Treat visible queries as a partial, skewed sample, not a census. Any traffic analysis based on visible queries covers only about half your actual search traffic. If you decide to focus on a keyword that drives "20% of your traffic" based on visible data, it might drive only about 10% of your real traffic. The queries you can see are biased toward higher-volume, less-specific phrases. The ones you can't see are biased toward long-tail, highly specific, often high-intent searches. Ryan Tronier
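The adjustment in that example is a single multiplication. This sketch assumes you know, or have estimated, what fraction of your clicks carry a visible query; the 53% used here is the cross-site average from the Ahrefs figure, and your own site may differ a lot. The function name is hypothetical.

```python
def share_of_all_clicks(share_of_visible: float, visible_fraction: float) -> float:
    """Convert a share of visible-query clicks into a share of all search clicks."""
    if not 0 <= share_of_visible <= 1 or not 0 < visible_fraction <= 1:
        raise ValueError("both arguments must be fractions between 0 and 1")
    return share_of_visible * visible_fraction


# A keyword with 20% of visible clicks, on a site where 53% of clicks have a visible query.
adjusted = share_of_all_clicks(0.20, 0.53)
print(f"{adjusted:.1%} of all search clicks")
```

This only rescales the number. It does not correct for the skew toward higher-volume phrases in the visible data.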

Infer the invisible queries from the content of your pages. If a page is getting clicks that the Queries tab can't explain, you know what that page is about. Use that knowledge to infer what people are likely searching to find it. The keywords you can see give you strong hints about the ones you can't see — they're usually just longer, more specific versions of the same topics. Ryan Tronier

Use third-party tools to cross-reference. Tools like Ahrefs and Semrush track rankings independently of GSC and often surface queries that GSC anonymizes. In one example, GSC showed 327 terms driving traffic to a page while a third-party tool showed 426. Only 178 appeared in both data sources — meaning each source contained unique information the other didn't have. Neither tool is complete on its own. Together they give you a more complete picture. Backlinko
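The overlap numbers in that example work out as follows. The counts come from the example above; the variable names are added for illustration.

```python
gsc_terms = 327          # terms GSC showed for the page
third_party_terms = 426  # terms the third-party tool showed
in_both = 178            # terms that appeared in both sources

only_gsc = gsc_terms - in_both
only_third_party = third_party_terms - in_both
combined_unique = gsc_terms + third_party_terms - in_both

print("Only in GSC:", only_gsc)
print("Only in the third-party tool:", only_third_party)
print("Unique terms across both:", combined_unique)
```

With real exports loaded as Python sets of query strings, the same three figures come from set difference and union (`gsc - other`, `other - gsc`, `gsc | other`).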

Focus on trends and percentage changes rather than absolute numbers. Because the share of anonymized queries doesn't tend to fluctuate much over time, the visible data is incomplete to a similar degree in each period. That means comparisons across time still give you an understanding of any lift or drop in performance. A 20% increase in clicks on the Pages tab is meaningful and real even if you can't attribute all of those clicks to specific queries. Search Engine Journal
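A period-over-period comparison needs nothing more than the two click totals. The helper name and the click counts below are hypothetical.

```python
def pct_change(previous: float, current: float) -> float:
    """Relative change from the previous period to the current one."""
    if previous == 0:
        raise ValueError("previous period must be non-zero")
    return (current - previous) / previous


# Hypothetical page-level clicks for two equal-length periods.
change = pct_change(previous=150, current=180)
print(f"{change:+.0%}")
```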

For power users: explore the BigQuery export. Google Search Console data can be connected to BigQuery, which gives you access to anonymized query rows — shown as NULL values — that let you at least quantify how much traffic is unattributed even if you still can't see the specific phrases. Using multiple data sources — GSC, GA4, third-party rank trackers, BigQuery — provides a more complete picture than any single tool. Lumar
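Once export rows are loaded into Python, quantifying the unattributed share takes only a few lines. This sketch assumes each row is a mapping with `query` and `clicks` keys, which is an assumption about how you loaded the data rather than a statement of the export schema. It treats both `None` and an empty string as a missing query, so check which one your export uses.

```python
from typing import Any, Iterable, Mapping, Optional


def unattributed_click_share(rows: Iterable[Mapping[str, Any]]) -> Optional[float]:
    """Fraction of clicks on rows whose query is missing (None or empty string)."""
    total = 0
    unattributed = 0
    for row in rows:
        clicks = int(row["clicks"])
        total += clicks
        if not row.get("query"):
            unattributed += clicks
    return unattributed / total if total else None


# Invented rows shaped like a simplified export.
sample_rows = [
    {"query": "seo services", "clicks": 12},
    {"query": None, "clicks": 9},
    {"query": "marketing retainer pricing", "clicks": 4},
    {"query": "", "clicks": 5},
]
share = unattributed_click_share(sample_rows)
print(f"{share:.1%} of clicks have no query attached")
```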

How This Appears in Our Own Data

We covered this in our own April 2026 SEO Benchmark Report when we noted several high-impression pages with zero or near-zero clicks in the query data. The marketing retainer pricing page had 2,394 impressions and 2 clicks visible in query data. The social media monitoring cost guide had 1,276 impressions and zero visible clicks. The Asana vs. ClickUp comparison post had 1,242 impressions and zero visible clicks.

Those pages are not dead. They are generating real impressions from real searches. The zero-click problem in our case is a combination of two things: some is the AI Overview effect (Google answering the question before anyone clicks) and some is the anonymized query effect (Google recording impressions from queries it won't show us). The Pages tab, by contrast, shows real click counts for those pages because people are arriving, just through queries that don't make it into the visible query report.

Reading both tabs together, understanding what each one actually measures, and not panicking when they don't match is the practical skill that separates people who use GSC effectively from people who get confused and stop trusting the data.

The Bottom Line

The Queries tab in Google Search Console is not showing you all of your traffic. It never has been. On average, across hundreds of thousands of sites and billions of clicks, anonymized queries accounted for 46.77% of search clicks in April 2025, and that figure is expected to grow as search queries get longer and more conversational in the AI era. webapex blog

The Pages tab shows you the real, complete click count for each URL. The Queries tab shows you a privacy-filtered subset of the phrases that drove those clicks — the ones Google considers high-volume enough to disclose safely. The gap between the two is not a reporting error. It is a documented architectural feature of how Search Console handles user privacy.

Understanding this changes how you use the tool. It means you stop trusting the query totals as a complete accounting of your traffic. It means you use the Pages tab for performance measurement and the Queries tab for directional intent signals. And it means you supplement GSC with other data sources rather than treating it as the only truth.

The data is incomplete by design. The skill is learning to work effectively with the data you have.

Want Help Making Sense of What Your Search Console Data Is Actually Telling You

If you're looking at your GSC reports and not sure whether a number represents a real trend or a limitation of how the tool reports data, that's exactly the kind of question we spend a lot of time on.

Talk to Ritner Digital about your search data →

Frequently Asked Questions

Is my Google Search Console data broken if the Queries tab doesn't match the Pages tab?

No. This is working exactly as Google designed it. The two tabs are measuring the same underlying traffic from different angles and with different privacy filters applied. The Pages tab shows you the complete click count for every URL on your site — every click that landed someone on a page gets counted there regardless of what query triggered it. The Queries tab shows you a filtered subset of the phrases that drove those clicks, specifically the ones that cleared Google's privacy threshold. The mismatch between them is not a glitch or a configuration problem. It is a structural feature of how Search Console handles user data, and it exists on every GSC property in existence.

What exactly is an anonymized query and why does Google hide it?

An anonymized query is a search phrase that drove clicks or impressions to your site but does not appear in your GSC Performance report because it didn't meet Google's privacy threshold. Google's stated threshold is that a query must be searched by more than a few dozen unique users globally over a two-to-three month window to be shown in your data. If fewer people than that typed a specific phrase into Google during that period, Google considers the phrase too identifying — too traceable back to a small group of specific individuals — and strips it from your report entirely. The traffic those queries generated still counts in your totals. The specific phrase that generated it does not appear. Two reasons are cited: volume threshold (the query wasn't searched often enough globally) and privacy threshold (the query contains sensitive or personally identifiable information). Research strongly suggests the volume threshold is responsible for the vast majority of hidden queries, not the privacy one.

How much of my GSC data is actually missing from the Queries tab?

On average, close to half. Ahrefs analyzed 22 billion clicks across 887,534 GSC properties in April 2025 and found an anonymization rate of 46.77%, meaning roughly one in every two clicks your site receives has no associated query shown in the Queries tab. The figure was 46.08% in a 2022 study and 45.02% in April 2024, showing the problem has been consistently present for years. But the average obscures wide variation. The most common range across individual sites falls between 45% and 80% missing. Small sites with niche content showed query visibility ranging from 0% to 37%, averaging 18.3%, meaning they may be seeing fewer than one in five of the queries that actually drove their traffic. The more specific your content, the worse the problem tends to be.

Why does the problem affect smaller and more niche sites more than large ones?

Because the anonymization threshold is based on global search volume for a phrase — not on how much traffic it sent to your site. A query has to be searched by more than a few dozen unique users across the entire web during a two-to-three month window, regardless of how many clicks it sent specifically to you. Large sites ranking for high-volume head terms like "marketing agency" or "SEO services" are safe — millions of people search those phrases. Smaller sites that have earned rankings for highly specific, long-tail queries like "transaction coordinator for real estate agents in New Jersey" are not. That phrase might have sent a small site thirty clicks in a month, but if fewer than a few dozen people globally typed it during the window, it's anonymized. The more niche and specific your content strategy — which is exactly what most small business SEO advice recommends — the more of your query data gets hidden.

Does using the Search Console API or exporting to CSV fix this problem?

No. The anonymized query problem follows you into the API and into CSV exports. The data that is removed from the interface is removed from the API response as well, so you will see the same missing queries whether you access the data through the interface or programmatically. What the API does help with is the separate 1,000-row display limit in the interface. The standard GSC interface only shows the top 1,000 queries in a table, but the API can return up to 25,000 rows per request. For sites generating more than 1,000 distinct queries, the API gives you significantly more query data. But the anonymized portions of your traffic, the queries that fell below Google's threshold, are still missing regardless of how you access the data. BigQuery exports give you slightly better access in that you can see NULL-value rows representing anonymized traffic, which at least lets you quantify how much is unattributed, but the actual phrases still don't appear.

Is this the same as the "not provided" problem in Google Analytics?

Related in spirit but different in mechanism. The "not provided" problem in Google Analytics became pervasive after Google began moving to encrypted search in 2011. Once searches ran over HTTPS, Google stopped passing the search query along in the referrer when someone clicked through to your site, so Analytics could no longer see what people had searched for and grouped that traffic under "(not provided)". That problem is about query data never reaching your analytics tool. The anonymized query problem in GSC is different: GSC itself withholds query data at the source, based on Google's privacy threshold, before you ever get to see it. Both leave you unable to see what people searched, but for different technical reasons and through different mechanisms.

Why is the Pages tab considered more reliable than the Queries tab?

Because Google applies no privacy filtering to page-level data. Telling you that a specific URL on your site received 47 clicks this month reveals nothing about any individual user's behavior or search history. The number doesn't identify anyone. But telling you that the phrase "therapist who specializes in divorce anxiety in Austin Texas" drove one click to your site is potentially identifying, because if that phrase was searched by only twelve people globally, those twelve searchers form a small enough group that disclosure creates a privacy risk. Google can safely show you complete page-level click counts without compromising user privacy. It cannot safely show you complete query-level click data without potentially exposing rare, specific, sensitive, or personally identifiable search behavior. The Pages tab will always be a more complete accounting of your traffic than the Queries tab will ever be.

If I can't trust the Queries tab to show all my traffic, what should I actually use it for?

Use it for directional intent signals, not for complete traffic accounting. The queries you can see in GSC are a real and useful sample of your traffic — they just aren't the whole picture. They tell you what categories of search intent are reaching your site, which topic clusters are driving visibility, what kinds of questions your audience is asking, and which pages are earning rankings for meaningful phrases. The bias in visible query data runs toward higher-volume, less-specific phrases — which means the queries you can see tend to be the broader, more competitive ones, while the highly specific long-tail queries that drove real conversions are more likely to be hidden. Use the visible queries to understand the shape of your traffic and inform content strategy. Use the Pages tab for actual performance measurement. Use third-party tools like Ahrefs or Semrush to cross-reference queries that GSC doesn't surface, since those tools track rankings independently and often see queries that GSC anonymizes.

If a page shows zero clicks in the Queries tab but real clicks in the Pages tab, does that mean it's only getting traffic from anonymized queries?

Yes, essentially. If a page shows impressions and clicks on the Pages tab but the Queries tab shows no clicks against any query associated with that page, it means every query that sent someone to that page fell below Google's anonymization threshold — none of them were searched by enough unique users globally to be shown in your report. This is actually more common than it sounds, especially for newer pages targeting very specific topics, pages earning rankings for ultra-long-tail conversational queries, or pages on very new sites where traffic is concentrated in low-volume searches. The page is performing — it's getting real traffic from real searches. You just can't see what those searches were. The practical response is to look at what the page is about, make an educated inference about what someone would search to find it, and use that inference to guide related content rather than panicking about what looks like phantom traffic.

Will this problem get better or worse over time?

The research suggests it will get worse, not better. The anonymization rate has been stable at around 45-47% for three years across multiple large-scale studies. But that stability predates the full rollout of AI Overviews and the broader shift toward longer, more conversational search queries that AI tools have driven. AI Overviews are triggered primarily by longer, more specific, more conversational queries, which are exactly the kind most likely to fall below Google's anonymization threshold because they are rarely searched by enough unique users to clear the bar. As users increasingly type full questions and detailed requests into Google rather than short keyword strings, more of that traffic will land in the anonymized bucket. The expectation across the research community is that the percentage of hidden query data will increase meaningfully over the next few years, making the gap between the Queries tab and the Pages tab wider. The practical implication is that building your SEO strategy around the directional signals in visible query data, rather than treating it as a complete accounting of your traffic, becomes more important over time, not less.

What's the most common mistake people make when they see this discrepancy?

Assuming the data is wrong rather than incomplete. Most people who notice the gap between the Queries tab and the Pages tab either conclude that GSC has a reporting bug, that their analytics are misconfigured, or that something is broken and needs to be fixed. Almost nobody concludes on their own that Google is deliberately withholding nearly half the query data as a documented privacy feature — because that's not an intuitive thing for a tool that's supposed to help you understand your traffic to do. The second most common mistake is treating the queries you can see as a representative sample of equal value to a complete dataset, and making content and optimization decisions based on partial data without accounting for how much is missing. Both mistakes lead to misreading what's actually happening with your organic search performance. Understanding the structural reason for the gap — and that it's permanent and working as intended — is the prerequisite for using GSC effectively rather than being confused by it.